Inspecting finished components in real time

MARQUIS software recognizes both the component itself and any discrepancies from the target dimensions.

© Fraunhofer IGD

Delivered components have to undergo an incoming goods inspection to ensure they are correctly dimensioned and otherwise meet the specification. MARQUIS – a software solution combining machine learning and augmented reality – will be able to inspect components and their assemblies on the move and in real time. Researchers at the Fraunhofer Institute for Computer Graphics Research IGD are set to showcase the technology at the Hannover Messe from April 12-16, 2021.

Quality control is key to the manufacturing industry, in the automotive sector for example. Does a physical component fail to meet the requirements specified in the CAD data? Until now, employees have carried out visual inspections to find out. Researchers at Fraunhofer IGD in Darmstadt are now developing a much more precise alternative using MARQUIS: This software combines augmented reality with machine learning methods to allow comparisons to be made between a CAD specification and the real product. “The system recognizes the component and also identifies any discrepancies from the target dimensions,” says Holger Graf, scientist at Fraunhofer IGD, explaining the technology.

“As well as individual components, it can also inspect assemblies made of multiple parts. A machine learning procedure, another of our developments, detects the position of the components in space.” Has the cross member, for example, been attached at the right angle? The system does this by relying on a predecessor project: a stationary system consisting of multiple cameras that precisely measures the components. The extraordinary feature of the new system: It’s mobile – workers simply pull out their smartphone or tablet and point it at the component concerned.

Generating training data for artificial intelligence

At the heart of MARQUIS is machine learning. The system uses this technology to detect not only the individual components, but also their position in space. But it takes gigantic data sets to train this artificial intelligence. This is best explained by way of example: Let’s take the automated recognition of cats in photographs. The software can reliably recognize the animals only if we show it numerous images of cats in various situations and positions, and also indicate which pixels in the image belong to the cat and which do not. Such labeled cat images are available in abundance. When it comes to manufactured components, however, such training images simply do not exist or have to be generated using complex processes.

“Producing these data from photos would take years, so our aim was to create the training data purely from the CAD data that accompany every production process,” says Graf. The researchers therefore need to generate synthetic images that act like real photos. They do this by simulating multiple camera setups in space to view the CAD model from various directions – these cameras take “photos” from every perspective and the images are set against an arbitrary background. While CAD data are usually shown in blue, green and yellow, the components on the artificial images created by photorealistic rendering consist of various different materials, gray shimmering metal for example. The approach is working as hoped: Through the deep learning approach, the system is effectively trained using the synthetic images and is then able to recognize a real component without ever having seen one before. And all very swiftly: For ten different, unknown components in a complex product setup, the researchers need only a few hours to train the artificial intelligence networks if the CAD data are in place.
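The idea of viewing the CAD model "from various directions" can be illustrated with a minimal sketch. It assumes the virtual cameras are spread roughly evenly over a sphere around the model and all look at its center. The article does not say how the Fraunhofer researchers actually place their cameras, so the Fibonacci-lattice sampling, the function names and the parameter values below are illustrative assumptions, not the MARQUIS method. Rendering and background compositing are left out.

```python
import math

def fibonacci_sphere_viewpoints(n, radius=1.0):
    """Return n roughly evenly spaced camera positions on a sphere around the origin.

    Assumption: the CAD model is centered at the origin. The sampling scheme
    (Fibonacci lattice) is chosen for illustration only.
    """
    golden_angle = math.pi * (3.0 - math.sqrt(5.0))
    points = []
    for i in range(n):
        # z runs from just below +1 to just above -1, so no point sits exactly on a pole
        z = 1.0 - (2.0 * i + 1.0) / n
        r = math.sqrt(max(0.0, 1.0 - z * z))
        theta = golden_angle * i
        points.append((radius * r * math.cos(theta),
                       radius * r * math.sin(theta),
                       radius * z))
    return points

def look_at_direction(camera_pos, target=(0.0, 0.0, 0.0)):
    """Unit vector pointing from the camera position toward the target."""
    d = [t - c for c, t in zip(camera_pos, target)]
    norm = math.sqrt(sum(x * x for x in d))
    return tuple(x / norm for x in d)

# Hypothetical setup: 200 virtual cameras, 1.5 units from the model center
viewpoints = fibonacci_sphere_viewpoints(200, radius=1.5)
view_directions = [look_at_direction(p) for p in viewpoints]
```

Each position and viewing direction would then be handed to a renderer to produce one synthetic "photo" of the component.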

Automatic component inspection in real time

Once the system has been trained to recognize the components concerned, it can inspect them in real time. The system recognizes the component itself, determines its position in space and then precisely superimposes the CAD data. Do the two match up? The researchers use augmented reality to find out.
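The comparison between the superimposed CAD data and the real part can be pictured with a simple sketch. It assumes the estimated position is available as a rotation matrix and a translation vector, and that a few named reference points have been measured on the real component. The article does not describe how MARQUIS computes discrepancies, so the point names, the tolerance and the functions below are hypothetical.

```python
import math

def transform(point, rotation, translation):
    """Apply a rigid transform (3x3 rotation, then translation) to a 3D point."""
    return tuple(
        sum(rotation[r][c] * point[c] for c in range(3)) + translation[r]
        for r in range(3)
    )

def find_deviations(cad_points, measured_points, rotation, translation, tolerance_mm):
    """Return (name, error) for every reference point outside the tolerance.

    cad_points:      reference points in CAD (component) coordinates
    measured_points: the same points as observed on the real part
    rotation, translation: estimated pose of the component (assumed given)
    """
    deviations = []
    for name, cad_pt in cad_points.items():
        expected = transform(cad_pt, rotation, translation)
        error = math.dist(expected, measured_points[name])
        if error > tolerance_mm:
            deviations.append((name, error))
    return deviations

# Hypothetical example: two bolt positions on an axle part, units in millimeters
identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
cad = {"bolt_a": (0.0, 0.0, 0.0), "bolt_b": (100.0, 0.0, 0.0)}
measured = {"bolt_a": (10.0, 0.0, 0.0), "bolt_b": (110.0, 3.0, 0.0)}

out_of_tolerance = find_deviations(cad, measured, identity, (10.0, 0.0, 0.0), 1.0)
print(out_of_tolerance)  # [('bolt_b', 3.0)]
```

In an augmented reality view, the flagged points would be the ones highlighted on top of the camera image.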

The research team will be demonstrating its development at the Hannover Messe from April 12-16, 2021 using an assembled front axle. In a project funded by the state of Hesse under the Löwe program (project number 928/20-85), the Fraunhofer researchers are collaborating closely with Visometry to transfer machine learning processes, along with the associated workflows and networks, into this spin-off’s product portfolio.

Further information:

https://www.fraunhofer.de/en/press/research-news/2021/march-2021/inspecting-fini…

Media Contact

All latest news from the category: Trade Fair News

Newest articles

A universal framework for spatial biology

SpatialData is a freely accessible tool to unify and integrate data from different omics technologies accounting for spatial information, which can provide holistic insights into health and disease. Biological processes…

How complex biological processes arise

A $20 million grant from the U.S. National Science Foundation (NSF) will support the establishment and operation of the National Synthesis Center for Emergence in the Molecular and Cellular Sciences (NCEMS) at…

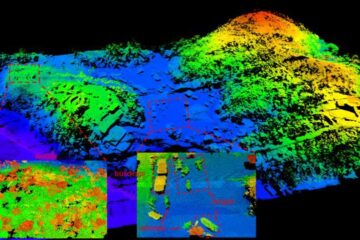

Airborne single-photon lidar system achieves high-resolution 3D imaging

Compact, low-power system opens doors for photon-efficient drone and satellite-based environmental monitoring and mapping. Researchers have developed a compact and lightweight single-photon airborne lidar system that can acquire high-resolution 3D…