ECO Computer by Solar Battery – Leading-edge multicore technology

MULTICORE – FROM CELL PHONES TO SUPER COMPUTERS

A processor (the computer’s “brain”, a circuit that runs programs) is embedded in all the IT devices used in daily life: cell phones, game devices, digital TVs, car navigation systems, personal computers, automobiles, robots and supercomputers. The way processors are made is now changing, because the standard method of integrating one processor on one semiconductor chip can no longer turn improvements in the semiconductor integration level (the growing number of transistors that finer micro-fabrication techniques can place on a chip) into higher computational speed. When the integration level improves, more arithmetic units (circuits that execute addition or multiplication) can be added to the processor, but we can neither generate an efficient program that runs those units simultaneously nor cool the computer to an operable temperature using forced-air cooling. As the integration level improves, the processor consumes more power and therefore emits tremendous amounts of heat. A well-known projection states that, as the semiconductor integration level keeps increasing, the chip’s surface becomes almost as hot as the surface of the sun.

A multi-core scheme is now attracting attention. This technology integrates multiple processors (called processor cores when they are integrated in a chip) in one chip, keeping power consumption (heat) under control while improving the integration level and processing capability. (See Figure 1: a 1 cm x 1 cm chip with 8 integrated processor cores.)

INDUSTRY-GOVERNMENT-ACADEMIC COLLABORATIVE RESEARCH IN A GOVERNMENT PROJECT

The multi-core scheme aims to speed up operation by running multiple processor cores concurrently while each core runs at a lower speed (operating frequency), so that less power is consumed. Power consumption is proportional to the operating frequency and to the square of the operating voltage. Lowering the frequency allows the voltage required to run the processors to be lowered as well, which reduces power consumption further. However, it is difficult to generate a program that runs multiple processors simultaneously. For example, you can divide one task into eight smaller tasks for eight people and complete the total task in one-eighth the time, provided all eight people work flat out and finish at the same time. In practice, however, the subtasks are not “uniform”: some must be done by just one person, and others require output from another subtask. It is difficult to divide the work so that all subtasks finish efficiently in the shortest time.
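The eight-person analogy can be made concrete with a small scheduling sketch. The code below is only an illustration, not the compiler described in this article: it uses a simple greedy list scheduler, and the function name `list_schedule`, the task names and the durations are all hypothetical. It assumes the dependencies contain no cycles.

```python
import heapq

def list_schedule(durations, deps, workers):
    """Greedy list scheduling (illustrative only).

    durations: {task: time units}, deps: {task: [tasks it must wait for]}.
    Returns the time at which the last task finishes.
    Assumes the dependency graph has no cycles.
    """
    waiting_on = {t: len(deps.get(t, ())) for t in durations}
    children = {t: [] for t in durations}
    for task, parents in deps.items():
        for parent in parents:
            children[parent].append(task)

    ready = sorted(t for t, n in waiting_on.items() if n == 0)
    running = []          # min-heap of (finish_time, task)
    now, free, done = 0.0, workers, 0

    while done < len(durations):
        # Hand ready tasks to free workers.
        while ready and free > 0:
            task = ready.pop(0)
            heapq.heappush(running, (now + durations[task], task))
            free -= 1
        # Jump to the next task completion and release its dependents.
        now, finished = heapq.heappop(running)
        free += 1
        done += 1
        for child in children[finished]:
            waiting_on[child] -= 1
            if waiting_on[child] == 0:
                ready.append(child)
    return now

# Uniform case: eight independent, equal subtasks (8 units of work).
uniform = {f"t{i}": 1.0 for i in range(8)}

# Non-uniform case with the same 8 units of work: a 2-unit "setup"
# subtask that only one worker can do, followed by six 1-unit subtasks
# that each need its output.
nonuniform = {"setup": 2.0, **{f"t{i}": 1.0 for i in range(6)}}
nonuniform_deps = {f"t{i}": ["setup"] for i in range(6)}

uniform_time = list_schedule(uniform, {}, 8)
nonuniform_time = list_schedule(nonuniform, nonuniform_deps, 8)
print(8.0 / uniform_time, 8.0 / nonuniform_time)
```

In this toy case the uniform workload finishes in one time unit on eight workers, while the non-uniform one needs three, even though both contain the same total amount of work.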

For more than 20 years, we have been involved in this particular research and development: dividing tasks (i.e., computer programs) and developing software (a parallelizing compiler) that automatically allocates the tasks to processors. We succeeded in developing parallelizing compiler technology in a government project (“Multi-core for Real-time IT Appliance”) sponsored by the Ministry of Economy, Trade and Industry/NEDO. (This 3-year project began in 2005.) We also designed multi-core hardware (a multi-core chip) that utilizes this compiler technology. With it, calculations can be divided automatically, the divided calculation tasks allocated to multiple processors, and the calculations performed in minimum processing time. Examples include scientific calculations, calculations to display video on digital TVs or one-segment TV, and calculations that compress music for portable music players (technology that “shrinks” data while maintaining audio quality). Figure 2 shows the processing performance when a music compression program is automatically divided and allocated to up to eight processors. With four processors, processing is 3.6 times faster than with one processor; with eight processors, it is 5.8 times faster.
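One way to read the reported speedups (3.6 times on four processors, 5.8 times on eight) is to convert them into parallel efficiency, that is, speedup divided by processor count. The sketch below also applies the Karp-Flatt metric, a standard rule of thumb for estimating the share of a run that behaves as if it were serial. That metric is not used in the original project and is brought in here only as an interpretive aid; the function names are hypothetical.

```python
def parallel_efficiency(speedup, processors):
    """Fraction of the ideal (linear) speedup that was achieved."""
    return speedup / processors

def karp_flatt_serial_fraction(speedup, processors):
    """Experimentally determined serial fraction (Karp-Flatt metric)."""
    return (1.0 / speedup - 1.0 / processors) / (1.0 - 1.0 / processors)

# Speedups reported for the music compression program.
measured = {4: 3.6, 8: 5.8}

for processors, speedup in measured.items():
    eff = parallel_efficiency(speedup, processors)
    serial = karp_flatt_serial_fraction(speedup, processors)
    print(f"{processors} processors: efficiency {eff:.3f}, "
          f"estimated serial fraction {serial:.3f}")
```

The efficiencies work out to 0.9 for four processors and 0.725 for eight, which matches the earlier point that subtasks are not uniform and cannot all be kept busy at once.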

This parallelizing compiler can parallelize (divide and allocate) a program not only for the multi-core chip we developed but also for other multiprocessor systems, such as widely available commercial servers (high-end fast computers) and multi-core personal computers. Intel provides a multi-core chip with 4 integrated cores (Quad-core Xeon) and IBM provides one with 2 integrated cores (Power 5+). We set up an 8-processor server (p550Q) using the multi-core chips from Intel and IBM and ran the world-standard computer performance evaluation programs (SPEC CFP95 and CFP2000) on it. Our compiler achieved the world’s fastest processing speed, twice as fast as the average of the parallelizing compilers sold by both companies.

The research and development of this multi-core chip and of the parallelizing compiler (which automatically generates a parallelized program for the multi-core processors provided by the companies above) has been successful thanks to the contributions of many people: Katsuhiko Shirai, President of Waseda University, who gave strong support; Associate Professor Keiji Kimura and the students of the department’s KASAHARA Laboratory and KIMURA Laboratory, who collaborated closely and provided critical input; the participants from Hitachi and Renesas Technology, who actually developed the chips and the PCB (Figure 3: major participants and the author receiving the second prize in the LSI-Of-The-Year award on July 4, 2008); and the participants from Fujitsu, Toshiba, Matsushita and NEC, who contributed to the discussion on the standard architectures (structure methods) and the methods (API: Application Program Interface) for running the compiler on these multi-core processors.

“COOL EARTH” BY “COOL” MULTI-CORE CHIP

We also focused on significantly reducing power consumption in addition to improving processor performance. For example, adding a fan to cool a cell phone’s processor is not practical, since the fan may be too noisy for people to talk. Our goal was to develop a multi-core chip that consumes 3 watts or less and can be cooled by natural air flow. It is estimated that the power consumption of a supercomputer will reach tens of millions of watts within several years and hundreds of millions of watts in 10 years. One whole power plant will be needed to cool one supercomputer. The situation is critical.

Against this background, we have developed a parallelizing compiler that, after assigning tasks to the processors, can instantaneously cut the power to any processor that is idle. For idle processors, the operating speed (frequency) can be lowered to 1/2, 1/4 or 1/8 of the full rate, or to 0 (i.e., “sleep”), and the voltage can be set to 1.4 V, 1.2 V or 1.0 V. This made us the first in the world to control multi-core power consumption from a parallelizing compiler. As summarized in Figure 4, power consumption is reduced by 74%, from 5.7 W to 1.5 W, in a calculation to display video for digital TV and one-segment TV, and by 88%, from 5.7 W to 0.7 W, for the music compression program.
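The effect of these frequency and voltage settings can be estimated with the relationship stated earlier: power is proportional to frequency times the square of voltage. The sketch below is a rough model under stated assumptions, not the chip's actual power model. It assumes that 1.4 V is the full-speed voltage, ignores leakage and other static power, and pairs frequencies with voltages arbitrarily, since the article does not say which combinations the chip uses. The function names are hypothetical.

```python
FULL_VOLTAGE = 1.4  # assumption: 1.4 V corresponds to full-speed operation

def relative_dynamic_power(freq_fraction, voltage, full_voltage=FULL_VOLTAGE):
    """Dynamic power relative to full speed, using P proportional to f * V^2."""
    return freq_fraction * (voltage / full_voltage) ** 2

def reduction_percent(before_watts, after_watts):
    """Percentage reduction in power from before_watts to after_watts."""
    return 100.0 * (before_watts - after_watts) / before_watts

# Hypothetical pairings of the frequency and voltage levels from the text.
for freq_fraction, voltage in [(1.0, 1.4), (0.5, 1.2), (0.25, 1.0), (0.0, 1.0)]:
    rel = relative_dynamic_power(freq_fraction, voltage)
    print(f"f = {freq_fraction:<5} V = {voltage} V -> relative power {rel:.3f}")

# Cross-check of the reductions quoted from Figure 4.
print(round(reduction_percent(5.7, 1.5)))  # video display calculation
print(round(reduction_percent(5.7, 0.7)))  # music compression program
```

Because voltage enters as a square, lowering it together with the frequency saves more power than lowering the frequency alone. The last two lines reproduce the quoted 74% and 88% figures from the wattages given above.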

Cooling a “hot” chip (one with high power consumption) requires additional electrical power for the air-cooling or water-cooling mechanism. This “cool” chip, as introduced at the Council for Science and Technology Policy, Cabinet Office, on April 10, 2008, would go a long way toward realizing Japan’s goal of a “Cool Earth”.

In addition, as shown in Figure 5, the multi-core chip we developed runs at low enough power to be driven by a solar panel (solar battery).

In the future, we hope to use the advanced multi-core processor to create high-performance cell phones driven by solar batteries, automobiles that are safer, more comfortable and more energy-efficient, desktop supercomputers that are quiet and small, and food-producing robots driven by sunlight.

Hironori Kasahara, Professor

Faculty of Science and Engineering

Waseda University

Media Contact

All latest news from the category: Power and Electrical Engineering

This topic covers issues related to energy generation, conversion, transportation and consumption and how the industry is addressing the challenge of energy efficiency in general.

innovations-report provides in-depth and informative reports and articles on subjects ranging from wind energy, fuel cell technology, solar energy, geothermal energy, petroleum, gas, nuclear engineering, alternative energy and energy efficiency to fusion, hydrogen and superconductor technologies.

Newest articles

How complex biological processes arise

A $20 million grant from the U.S. National Science Foundation (NSF) will support the establishment and operation of the National Synthesis Center for Emergence in the Molecular and Cellular Sciences (NCEMS) at…

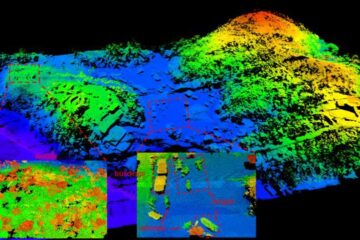

Airborne single-photon lidar system achieves high-resolution 3D imaging

Compact, low-power system opens doors for photon-efficient drone and satellite-based environmental monitoring and mapping. Researchers have developed a compact and lightweight single-photon airborne lidar system that can acquire high-resolution 3D…

Simplified diagnosis of rare eye diseases

Uveitis experts provide an overview of an underestimated imaging technique. Uveitis is a rare inflammatory eye disease. Posterior and panuveitis in particular are associated with a poor prognosis and a…