Is the Power Grid too Big?

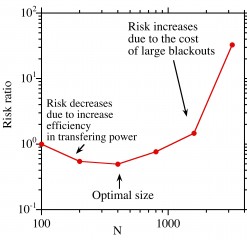

The overall operational "risk" as a function of system size (N). Risk decreases at first as the system becomes more efficient with size, then increases as the risk of large failures starts to dominate. The optimal size is the minimum point of the curve. (Credit: B.A. Carreras/BACV Solutions)

Some 90 years ago, British polymath J.B.S. Haldane proposed that for every animal there is an optimal size — one which allows it to make best use of its environment and the physical laws that govern its activities, whether hiding, hunting, hoofing or hibernating. Today, three researchers are asking whether there is a “right” size for another type of huge beast: the U.S. power grid.

David Newman, a physicist at the University of Alaska, believes that smaller grids would reduce the likelihood of severe outages, such as the 2003 Northeast blackout that cut power to 50 million people in the United States and Canada for up to two days.

Newman and co-authors Benjamin Carreras, of BACV Solutions in Oak Ridge, Tenn., and Ian Dobson of Iowa State University make their case in the journal Chaos, which is produced by AIP Publishing.

Their investigation began 20 years ago, when Newman and Carreras were studying why stable fusion plasmas turned unstable so quickly. They modeled the problem by comparing the plasma to a sandpile.

“Sandpiles are stable until you get to a certain height. Then you add one more grain and the whole thing starts to avalanche. This is because the pile's grains are already close to the critical angle where they will start rolling down the pile. All it takes is one grain to trigger a cascade,” Newman explained.
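The avalanche behavior described here can be reproduced in a few lines of code. The sketch below uses the classic Bak-Tang-Wiesenfeld sandpile rule on a square grid. That rule is an assumption made for illustration, since the article does not say which sandpile variant the researchers used. The function names, the grid size and the toppling threshold of four grains are also illustrative choices.

```python
import random


def drop_grain(grid, row, col, threshold=4):
    """Add one grain at (row, col), topple until stable, return the number of topplings."""
    size = len(grid)
    grid[row][col] += 1
    unstable = [(row, col)]
    topples = 0
    while unstable:
        r, c = unstable.pop()
        if grid[r][c] < threshold:
            continue  # already relaxed by an earlier toppling
        grid[r][c] -= threshold
        topples += 1
        if grid[r][c] >= threshold:
            unstable.append((r, c))
        # Pass one grain to each of the four neighbors; grains that
        # would leave the grid simply fall off the edge of the pile.
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < size and 0 <= nc < size:
                grid[nr][nc] += 1
                if grid[nr][nc] >= threshold:
                    unstable.append((nr, nc))
    return topples


def simulate(size=20, grains=5000, seed=0):
    """Drop grains at random sites and record the avalanche size for each drop."""
    rng = random.Random(seed)
    grid = [[0] * size for _ in range(size)]
    return [
        drop_grain(grid, rng.randrange(size), rng.randrange(size))
        for _ in range(grains)
    ]


if __name__ == "__main__":
    sizes = simulate()
    print("largest avalanche:", max(sizes))
    print("share of drops with no avalanche:", sizes.count(0) / len(sizes))
```

In runs of this kind, most grains typically land without any toppling, while an occasional grain sets off an avalanche that reaches much of the pile.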

While discussing a blackout, Newman and Carreras realized that their sandpile model might help explain grid behavior.

The Structure of the U.S. Power Grid

North America has three power grids, interconnected systems that transmit electricity from hundreds of power plants to millions of consumers. Each grid is huge, because the more power plants and power lines in a grid, the better it can even out local variations in the supply and demand or respond if some part of the grid goes down.

On the other hand, large grids are vulnerable to the rare but significant possibility of a grid-wide blackout like the one in 2003.

“The problem is that grids run close to the edge of their capacity because of economic pressures. Electric companies want to maximize profits, so they don't invest in more equipment than they need,” Newman said.

On hot days, when everyone's air conditioners are on, the grid runs near capacity. If a tree branch knocks down a power line, the grid is usually resilient enough to distribute extra power and make up the difference. But if the grid is already near its critical point and has no extra capacity, there is a small but significant chance that it can collapse like a sandpile.
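A toy calculation can make the role of spare capacity concrete. The sketch below is not the researchers' model. It assumes, purely for illustration, that every line has the same capacity and that the load from a failed line is shared equally among the surviving lines, whereas real grids redistribute power according to the physics of the network. All names and numbers are hypothetical.

```python
import random


def cascade_size(loads, capacity, first_failure=0):
    """Return how many lines fail in total after line `first_failure` trips."""
    alive = dict(enumerate(loads))
    to_shed = alive.pop(first_failure)
    failed = 1
    while to_shed > 0 and alive:
        # Assumption: shed load is split equally among surviving lines.
        extra = to_shed / len(alive)
        to_shed = 0.0
        for i in list(alive):
            alive[i] += extra
            if alive[i] > capacity:
                to_shed += alive.pop(i)  # overloaded line trips as well
                failed += 1
    return failed


def average_cascade(mean_load, lines=100, trials=200, seed=0):
    """Average number of failed lines when loads scatter around `mean_load`."""
    rng = random.Random(seed)
    total = 0
    for _ in range(trials):
        loads = [
            min(1.0, max(0.0, rng.gauss(mean_load, 0.05)))
            for _ in range(lines)
        ]
        total += cascade_size(loads, capacity=1.0)
    return total / trials


if __name__ == "__main__":
    for mean_load in (0.60, 0.95):
        print(f"mean load {mean_load:.0%}: "
              f"average lines lost = {average_cascade(mean_load):.1f}")
```

With ample headroom, the single failure is absorbed. When the lines are already close to capacity, the same failure can take down most of the toy grid.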

This vulnerability to cascading events comes from the fact that the grid's complexity evolved over time. It reflects the tension between economic pressures and government regulations to ensure reliability.

“Over time, the grid evolved in ways that are not pre-engineered,” Newman said.

Backup Power Versus Blackout Risk

In their new paper, the researchers ask whether the grid has an optimal size, one large enough to share power efficiently but small enough to prevent enormous blackouts.

The team based its analysis on the Western United States grid, which has more than 16,000 nodes. Nodes include generators, substations, and transformers (which convert high-voltage electricity into low-voltage power for homes and businesses).

The model started by comparing one 1,000-bus grid with ten 100-bus networks. It then assessed how well the grids shared electricity in response to virtual outages.

“We found that for the best tradeoff between providing backup power and blackout risk, the optimal size was 500 to 700 nodes,” Newman said.

Though grid-wide blackouts are highly unlikely, they can dominate costs. They are very expensive, and it takes longer to get things back under control. They also require more crews and resources, so utilities cannot help one another as they do in smaller blackouts.

“In smaller grids, the blackouts are smaller and easier to fix because utilities can call for help from surrounding regions. Overall, small grid blackouts have a lower cost to society,” Newman said.
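The tradeoff can be pictured as a U-shaped curve with a single minimum. The sketch below uses a made-up risk function in which one term shrinks with grid size (the benefit of sharing backup power) and another grows with it (the cost of rare, large blackouts). The functional form and both coefficients are hypothetical and are not taken from the paper, so the location of the minimum carries no physical meaning.

```python
def toy_risk(n, sharing_benefit=1.0, blackout_cost=1e-4):
    """Hypothetical risk for a grid of n nodes: one falling term, one rising term."""
    return sharing_benefit / n + blackout_cost * n


def best_size(sizes, risk=toy_risk):
    """Return the candidate size with the lowest risk (brute-force search)."""
    return min(sizes, key=risk)


if __name__ == "__main__":
    n_opt = best_size(range(1, 1001))
    print("size with the lowest toy risk:", n_opt)
    for n in (n_opt // 10, n_opt, n_opt * 10):
        print(f"N = {n:5d}  risk = {toy_risk(n):.4f}")
```

Raising `blackout_cost` relative to `sharing_benefit` moves the minimum toward smaller grids.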

The researchers believe their insights into sizing might apply to other complex, evolved networks like the Internet and financial markets.

“If we reduce the number of connected pieces, maybe we can reduce the societal cost of failures,” Newman added.

The article, “Does size matter?” by B. A. Carreras, D. E. Newman and Ian Dobson, appears in Chaos: An Interdisciplinary Journal of Nonlinear Science (DOI: 10.1063/1.4868393). It will be published online on April 8, 2014. After that date, it may be accessed at: http://scitation.aip.org/content/aip/journal/chaos/24/2/10.1063/1.4868393

ABOUT THE JOURNAL

Chaos: An Interdisciplinary Journal of Nonlinear Science is devoted to increasing the understanding of nonlinear phenomena and describing the manifestations in a manner comprehensible to researchers from a broad spectrum of disciplines. See: http://chaos.aip.org/

Media Contact

All latest news from the category: Physics and Astronomy

This area deals with the fundamental laws and building blocks of nature and how they interact, the properties and the behavior of matter, and research into space and time and their structures.

innovations-report provides in-depth reports and articles on subjects such as astrophysics, laser technologies, nuclear, quantum, particle and solid-state physics, nanotechnologies, planetary research and findings (Mars, Venus) and developments related to the Hubble Telescope.

Newest articles

High-energy-density aqueous battery based on halogen multi-electron transfer

Traditional non-aqueous lithium-ion batteries have a high energy density, but their safety is compromised due to the flammable organic electrolytes they utilize. Aqueous batteries use water as the solvent for…

First-ever combined heart pump and pig kidney transplant

…gives new hope to patient with terminal illness. Surgeons at NYU Langone Health performed the first-ever combined mechanical heart pump and gene-edited pig kidney transplant surgery in a 54-year-old woman…

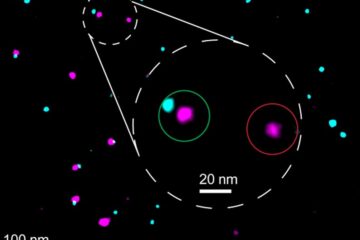

Biophysics: Testing how well biomarkers work

LMU researchers have developed a method to determine how reliably target proteins can be labeled using super-resolution fluorescence microscopy. Modern microscopy techniques make it possible to examine the inner workings…