Why conservation efforts often fail

In this week's special issue of the Proceedings of the National Academy of Sciences (online), Indiana University political scientist Elinor Ostrom and colleagues argue that while many basic conservation strategies are sound, their use is often flawed. The strategies are applied too generally, they say, as an inflexible, regulatory “blueprint” that foolishly ignores local customs, economics and politics.

“We now ridicule the doctors who long ago used to tell us, 'Take two aspirin and call me in the morning' as a treatment for every single illness,” said Ostrom, a member of the National Academy of Sciences. “Resource management is just as complex as the human body. It needs to be approached differently in different situations.”

In her own contribution, Ostrom proposes a flexible “framework” for determining what factors will influence resource management, whether that resource is forest, fish… even air. Ostrom edited the special issue with Arizona State University's Marco Janssen and John Anderies.

“What we are learning is that you shouldn't ignore what's going on at the local level,” Ostrom said. “It may even be beneficial to work with local people, including the resource exploiters, to create effective regulation.”

Modern conservation theory relies on well-established mathematical models that predict what will happen to a species or habitat over time. One thing these models can't account for is the unpredictable behavior of human beings, whose lives influence and are influenced by conservation efforts.

The framework is divided into tiers that allow conservationists and policymakers to delineate those factors most likely to affect the protection or management of a given resource.

The first tier lays out four broad variables: the resource system, the resource units, the governance system and the resource users. The second tier examines each of these variables in greater detail, such as the government and non-government entities that may already be regulating the resource, the innate productivity of a resource system, the size and placement of the system, the system's economic value and what sorts of people use the resource, from indigenous people to heads of state. The third tier digs even deeper into each of the basic variables.

“I admit it's ambitious,” Ostrom said. “It lays out a research program for the next 15-20 years.”

Under Ostrom's framework, policymakers are encouraged first to examine the behaviors of resource users, then to establish incentives for those users to aid a conservation strategy or, at least, not to interfere with it.

Ostrom's framework could also serve to normalize the effects of political upheavals that occur regularly at both national and state/provincial levels. It also accommodates non-political changes that may come with economic development and environmental change. In short, the framework's flexibility would allow the resource managers to modify a plan without scrapping the plan entirely.

More Information:

http://www.indiana.edu

This complex theme deals primarily with interactions between organisms and the environmental factors that impact them, but to a greater extent between individual inanimate environmental factors.

innovations-report offers informative reports and articles on topics such as climate protection, landscape conservation, ecological systems, wildlife and nature parks and ecosystem efficiency and balance.

Newest articles

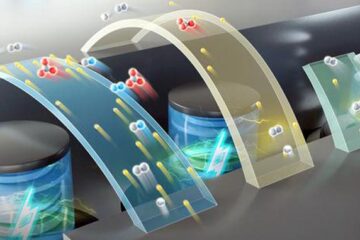

High-energy-density aqueous battery based on halogen multi-electron transfer

Traditional non-aqueous lithium-ion batteries have a high energy density, but their safety is compromised due to the flammable organic electrolytes they utilize. Aqueous batteries use water as the solvent for…

First-ever combined heart pump and pig kidney transplant

…gives new hope to patient with terminal illness. Surgeons at NYU Langone Health performed the first-ever combined mechanical heart pump and gene-edited pig kidney transplant surgery in a 54-year-old woman…

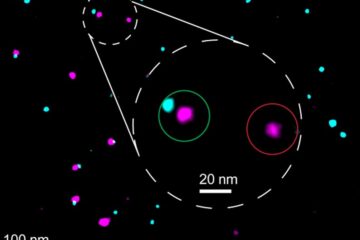

Biophysics: Testing how well biomarkers work

LMU researchers have developed a method to determine how reliably target proteins can be labeled using super-resolution fluorescence microscopy. Modern microscopy techniques make it possible to examine the inner workings…