Landsat Satellites Find the 'Sweet Spot' for Crops

Farmer Gary Wagner walks into his field where the summer leaves on the sugar beet plants are a rich emerald hue, which is not necessarily a good color when it comes to sugar beets, either for the environment or for the farmer. That hue tells Wagner that he's leaving money in the field in two ways: as unused nitrogen fertilizer, which can act as a pollutant if it is left in the soil and washed into waterways, and as unproduced sugar, the ultimate product from his beets.

The leaf color Wagner is looking for is yellow. Yellow means the sugar beets are stressed, and when the plants are stressed, they use more nitrogen from the soil and store more sugar. Higher sugar content means that when Wagner and his family bring the harvest in, their farm, A.W.G. Farms, Inc., in northern Minnesota, makes more dollars per acre, and they can better compete on the world crop market.

To find where he needs to adjust his fertilizer use—apply it here or withhold it there—Wagner uses a map of his 5,000 acres that span 35 miles. The map was created using free data from NASA and the U.S. Geological Survey's Landsat satellites and tells him about growing conditions. When he plants a different crop species the following year, Wagner's map will tell him which areas of the fields are depleted in nitrogen so he can apply fertilizer judiciously instead of all over.

A farmer needs to monitor his fields for potential yield and for variability of yield, Wagner says. Knowing how well the plants are growing by direct measurement has an obvious advantage over statistically calculating what should be there based on spot checks as he walks his field. That's where remote sensing comes in, and NASA and the U.S. Geological Survey's Landsat satellites step into the spotlight.

The Sensors in the Sky “Trip the Light Fantastic”

Providing the longest continuous record of observations of Earth from space, Landsat images are critical to anyone, scientist or farmer, who relies on month-to-month and year-to-year data sets of Earth's changing surface. Landsat 1 launched in 1972. The Landsat Data Continuity Mission (LDCM), the eighth satellite in the series, will launch in 2013 and will bring two sensors, the Operational Land Imager (OLI) and the Thermal Infrared Sensor (TIRS), into low orbit over the Earth to continue the work of their predecessors as they image our planet's land surface.

Land features tell the sensors their individual characteristics through energy. Everything on the land surface reflects and radiates energy—you, your backyard trees, that rocky outcropping, and a field where a farmer is growing a crop of sugar beets. The sensors on LDCM will measure energy at wavelengths both within the visible spectrum—what people can see—and at wavelengths that only the sensors, and some other lucky species, such as bees and spiders, can see.

OLI will measure energy in nine visible, near infrared, and short wave infrared portions, or bands, of the electromagnetic spectrum, and TIRS will measure energy in two thermal infrared bands. And that's what makes them such powerful tools.

Jim Irons, NASA Project Scientist for LDCM at NASA's Goddard Space Flight Center, Greenbelt, Md., says that the instruments will deliver data-rich images that tell a deeper story than your average photograph of how the land changes over time.

ROY G. BIV — Color me phenomenal

All objects send out radiation in different amounts and at different wavelengths across what is known as the electromagnetic spectrum. The portion of the electromagnetic spectrum that people can see is the visible spectrum, which in everyday speech we call light. However, light is broader than what human vision perceives. Light, when bent through a prism or through water droplets in the sky, breaks into the colorful display of a rainbow. Red is energy radiating at a longer wavelength, and the wavelengths grow shorter as the colors shift toward violet. Beyond visible violet lie the shorter wavelengths of ultraviolet, which grow shorter still all the way to gamma rays. Beyond visible red lie the longer wavelengths of infrared, which grow longer from near infrared to short wave infrared to thermal infrared, and on to the very long radio waves.

Wagner's map—a special kind of map known as a zone map—shows the difference between healthy and stressed plants by representing the amount of light they're reflecting in different bands of the electromagnetic spectrum. To display this information on his map, the visible colors of light—red, green, and blue—are each assigned to a different band. Red, for example, is assigned to the near-infrared band that isn't visible to humans. Healthy leaves strongly reflect the invisible, near-infrared energy. Therefore green, lush sugar beets pop out in bright red on Wagner's map while the yellow-leaved stressed plants appear as a duller red. Wagner can use this map to track and document changes in his crop’s condition throughout the season and between seasons. As a tool, this map supports and enhances his on-the-ground crop analyses with independent and scientific observations from space.
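The band-to-color assignment described above can be sketched in a few lines of Python. This is only an illustration, not the software behind Wagner's zone map. The article states only that red is assigned to the near-infrared band; sending the red band to the green channel and the green band to the blue channel is an assumption here (a common false-color convention), and the reflectance values are hypothetical numbers chosen to show the contrast.

```python
def false_color_pixel(nir, red, green):
    """Map reflectance (0..1) in three bands to an 8-bit (R, G, B) display color.

    Assumed channel assignment (only the first is stated in the article):
    near-infrared -> red channel, red -> green channel, green -> blue channel.
    """
    def to_byte(reflectance):
        # Clip to the valid range, then scale to 0..255 for display.
        clipped = min(max(reflectance, 0.0), 1.0)
        return int(clipped * 255 + 0.5)

    return (to_byte(nir), to_byte(red), to_byte(green))


# Hypothetical reflectances: healthy leaves reflect more near-infrared energy
# than stressed leaves do.
healthy = false_color_pixel(nir=0.50, red=0.05, green=0.10)
stressed = false_color_pixel(nir=0.30, red=0.12, green=0.15)

print("healthy beets :", healthy)
print("stressed beets:", stressed)
```

With these made-up inputs, the healthy pixel gets a larger red-channel value than the stressed pixel, which is why lush plants show up as bright red and stressed plants as a duller red.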

Different band combinations tell farmers—and scientists, insurance agents, water managers, foresters, mapmakers, and many other types of users—different information. Additionally, since the Landsat data is digital, computers can be trained to use all the bands to rapidly recognize and differentiate features across the landscape and to recognize change over time with multiple images.

“Therein lies the power of the Landsat data archive,” says Irons. “It is a multi-band analysis across the landscape and over a 40-year time span.”

Both OLI and TIRS use new “push-broom” technology, in which a sensor uses long arrays of light-sensitive detectors to collect information across the field of view, as opposed to older sensors that sweep mirrors side-to-side. The new technology improves on earlier instruments because the sensors have fewer moving parts, which will improve their reliability.

OLI will also be more sensitive to electromagnetic radiation than previous Landsat sensors, which is akin to giving users access to a new and improved ruler with markings down to one-sixty-fourth of an inch versus markings at every quarter inch. For Wagner, this means that next summer with LDCM in orbit, he will be able to better discriminate the degree of stress on his sugar beets, giving him a more finely tuned view of what his plants need across the field.
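The ruler analogy can be made concrete with a small sketch. A ruler marked every sixty-fourth of an inch is 16 times finer than one marked every quarter inch. The code below assumes, as an illustration not stated in the article, that the older sensor records each measurement with 8 bits (256 levels) and the newer one with 12 bits (4,096 levels), which gives the same factor of 16. The two signal values are hypothetical.

```python
def to_digital_number(signal, bits):
    """Quantize a signal in the range 0..1 to an integer with the given bit depth."""
    max_level = 2 ** bits - 1
    return int(signal * max_level + 0.5)


# Ruler analogy from the text: quarter-inch marks versus sixty-fourth-inch marks.
ruler_factor = (1 / 4) / (1 / 64)

# Assumed bit depths (not given in the article): 8-bit versus 12-bit.
level_factor = 2 ** 12 / 2 ** 8

# Two hypothetical, slightly different signals from neighboring patches of crop.
patch_a, patch_b = 0.400, 0.401

print("ruler factor:", ruler_factor, "level factor:", level_factor)
print("8-bit :", to_digital_number(patch_a, 8), to_digital_number(patch_b, 8))
print("12-bit:", to_digital_number(patch_a, 12), to_digital_number(patch_b, 12))
```

Under these assumptions the two patches are indistinguishable at the coarser bit depth but receive different values at the finer one, which is the sense in which a more sensitive instrument can better discriminate degrees of plant stress.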

The View of the Field is the Right Fit for its Purpose

Each step of the way, OLI will look at Earth with a 15-meter (49 foot) panchromatic and a 30-meter (98 foot) multispectral spatial resolution along a ground swath that is 185 kilometers (115 miles) wide. TIRS will measure two thermal infrared spectral bands with a spatial resolution of 100 meters (328 feet) and cover the same size swath as OLI.

Different scale resolutions—low, moderate, and high—deliver different levels of detail in remote sensing images, and each has its purpose. The 30-meter (98 foot) resolution of the Landsat images Wagner uses allows him to see what is happening on his spread, quarter-acre by quarter-acre. He doesn't need a view so narrow that the high resolution image tells him who's sitting in the combine parked in his field, or a view so big that it shows him smoke from forest fires drifting over the North American continent with no detail on his farm.
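The "quarter-acre by quarter-acre" figure follows from simple arithmetic on the pixel size, sketched below. The resolutions and the 5,000-acre farm size come from the article; the function name and the square-meters-per-acre constant are supplied here for illustration.

```python
SQUARE_METERS_PER_ACRE = 4046.8564224  # international acre


def pixel_area_acres(pixel_size_m):
    """Ground area covered by one square pixel, in acres."""
    return pixel_size_m ** 2 / SQUARE_METERS_PER_ACRE


# Resolutions quoted in the article for OLI and TIRS.
for name, size_m in [("panchromatic", 15), ("multispectral", 30), ("thermal", 100)]:
    print(f"{name:13s} {size_m:3d} m pixel = {pixel_area_acres(size_m):.3f} acre")

# Approximate number of 30-meter pixels covering a 5,000-acre farm.
pixels_on_farm = 5000 / pixel_area_acres(30)
print(f"30 m pixels on 5,000 acres: about {pixels_on_farm:,.0f}")
```

A 30-meter pixel covers 900 square meters, or a little under a quarter of an acre, so a farm the size of Wagner's is described by tens of thousands of individual measurements in each band.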

The moderate resolution also means Landsat satellites are able to fly over the same piece of real estate more frequently than high resolution satellites. Once every sixteen days, Landsat 7, which is in orbit now, or LDCM, after it launches, will revisit Wagner's farm and every other place on Earth for global coverage. “We're looking forward to having a real quality instrument in space,” says Irons, who is excited about having OLI and TIRS come online. He says the Landsat 30-meter resolution has been assessed in the scientific literature as being a suitable resolution for observing land cover and land use change at the scale in which humans interact with and manage land. The sensors will record 400 scenes a day, giving users 150 more scenes than previous instruments. Data from both of the sensors will be combined in each image.

The Legacy in the Landsat Mission is its Continuity

In daily operations on his farm, Wagner has used Landsat data in near real time. He's anxious for the launch of LDCM and NASA's newest sensors, OLI and TIRS, because not having the remote sensing data really puts him in a bind. A lack of current satellite data disrupts Wagner's understanding of what his plants need, what the soil needs, the long-term performance history of his place, and his budget.

For now, with his zone map in hand, Wagner adjusts his care for his sugar beet crop, allowing the plants to deplete fertilizer in the soil so he can change the bright red on the satellite image to the yellow of sweet beets in his field.

Lisa-Natalie Anjozian

NASA's Goddard Space Flight Center, Greenbelt, Md.

Media Contact

All latest news from the category: Agricultural and Forestry Science

Newest articles

Simplified diagnosis of rare eye diseases

Uveitis experts provide an overview of an underestimated imaging technique. Uveitis is a rare inflammatory eye disease. Posterior and panuveitis in particular are associated with a poor prognosis and a…

Targeted use of enfortumab vedotin for the treatment of advanced urothelial carcinoma

New study identifies NECTIN4 amplification as a promising biomarker – Under the leadership of PD Dr. Niklas Klümper, Assistant Physician at the Department of Urology at the University Hospital Bonn…

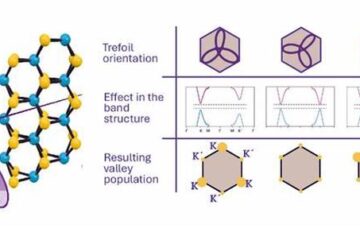

A novel universal light-based technique

…to control valley polarization in bulk materials. An international team of researchers reports in Nature a new method that achieves valley polarization in centrosymmetric bulk materials in a non-material-specific way…