Patients improve speech by watching 3-D tongue images

Researchers recently switched from 2-D technology to Opti-Speech, which shows 3-D images of the tongue. Credit: University of Texas at Dallas

A new study by University of Texas at Dallas researchers indicates that watching 3-D images of tongue movements can help individuals learn speech sounds.

According to Dr. William Katz, co-author of the study and professor at UT Dallas' Callier Center for Communication Disorders, the findings could be especially helpful for stroke patients seeking to improve their speech articulation.

“These results show that individuals can be taught consonant sounds in part by watching 3-D tongue images,” said Katz, who teaches in the UT Dallas School of Behavioral and Brain Sciences. “But we also are seeking to use visual feedback to get at the underlying nature of apraxia and other related disorders.”

The study, which appears in the journal Frontiers in Human Neuroscience, was small but showed that participants became more accurate in learning new sounds when they were exposed to visual feedback training.

Katz is one of the first researchers to suggest that visual feedback on tongue movements could help stroke patients recover speech.

“People with apraxia of speech can have trouble with this process. They typically know what they want to say but have difficulty getting their speech plans to the muscle system, causing sounds to come out wrong,” Katz said.

“My original inspiration was to show patients their tongues, which would clearly show where sounds should and should not be articulated,” he said.

Recent technological advances allowed researchers to switch from 2-D technology to Opti-Speech, which shows 3-D images of the tongue. A previous UT Dallas research project determined that the Opti-Speech visual feedback system can reliably provide real-time feedback for speech learning.

Part of the new study looked at an effect called compensatory articulation, which occurs when acoustics are rapidly shifted: subjects think they are making a certain sound with their mouths, but hear feedback that indicates they are making a different sound.

Katz said people will instantaneously shift away from the direction that the sound has pushed them. Then, if the shift is turned off, they'll overshoot.

“In our paradigm, we were able to visually shift people. Their tongues were making one sound but, little by little, we start shifting it,” Katz said. “People changed their sounds to match the tongue image.”

Katz said the results highlight the importance of body visualization in rehabilitation therapy, but added that much more work remains to be done.

“We want to determine why visual feedback affects speech,” Katz said. “How much is due to compensating, versus mirroring (or entrainment)? Do some of the results come from people visually guiding their tongue to the right place, then having their sense of 'mouth feel' take over? What parts of the brain are likely involved?

“3-D imaging is opening an entirely new path for speech rehabilitation. Hopefully this work can be translated soon to help patients who desperately want to speak better.”

###

The Opti-Speech study was co-authored by Sonya Mehta, a doctoral student in Communication Sciences and Disorders, and was funded by the UT Dallas Office of Sponsored Projects, the Callier Center Excellence in Education Fund, and a grant awarded by the National Institute on Deafness and Other Communication Disorders.

Media Contact

All latest news from the category: Health and Medicine

This subject area encompasses research and studies in the field of human medicine.

Among the wide-ranging list of topics covered here are anesthesiology, anatomy, surgery, human genetics, hygiene and environmental medicine, internal medicine, neurology, pharmacology, physiology, urology and dental medicine.

Newest articles

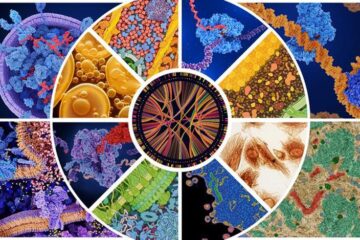

A universal framework for spatial biology

SpatialData is a freely accessible tool to unify and integrate data from different omics technologies accounting for spatial information, which can provide holistic insights into health and disease. Biological processes…

How complex biological processes arise

A $20 million grant from the U.S. National Science Foundation (NSF) will support the establishment and operation of the National Synthesis Center for Emergence in the Molecular and Cellular Sciences (NCEMS) at…

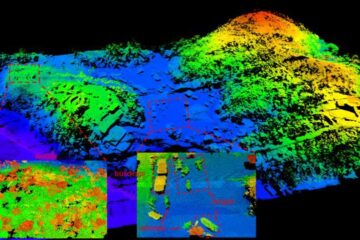

Airborne single-photon lidar system achieves high-resolution 3D imaging

Compact, low-power system opens doors for photon-efficient drone and satellite-based environmental monitoring and mapping. Researchers have developed a compact and lightweight single-photon airborne lidar system that can acquire high-resolution 3D…