Researchers Demonstrate a Better Way for Computers to ‘See’

Why it matters: The neural processing involved in visually recognizing even the simplest object in a natural environment is profound, and profoundly difficult to mimic. Neuroscientists have made broad advances in understanding the visual system, but much about the inner workings of biological vision systems remains a mystery.

Using graphics processing units (GPUs), the same technology video game designers use to render lifelike graphics, MIT and Harvard researchers are now making progress faster than ever before. “We made a powerful computing system that delivers over hundredfold speed-ups relative to conventional methods,” said Nicolas Pinto, a PhD candidate in James DiCarlo’s lab at the McGovern Institute for Brain Research at MIT. “With this extra computational power, we can discover new vision models that traditional methods miss.” Pinto co-authored the PLoS Computational Biology study with David Cox of the Visual Neuroscience Group at the Rowland Institute at Harvard.

How they did it: Harnessing the processing power of dozens of high-performance NVIDIA graphics cards and PlayStation 3 gaming consoles, the team designed a high-throughput screening process to tease out the best parameters for visual object recognition tasks. The resulting model outperformed a crop of state-of-the-art vision systems across a range of tests, more accurately identifying a variety of objects on random natural backgrounds with variation in position, scale, and rotation. Had the team used conventional computational tools, the one-week screening phase would have taken over two years to complete.
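The general idea of high-throughput screening can be illustrated with a generic random-search loop: sample many candidate model configurations, score each one on a recognition task, and keep the best few. This is a minimal sketch under stated assumptions, not the authors' pipeline. The parameter names and ranges in `SEARCH_SPACE` and the `toy_score` function (a stand-in for actually training and testing a vision model) are hypothetical.

```python
import random
from typing import Callable, Dict, List, Tuple

# Hypothetical search space: these parameter names and ranges are
# illustrative stand-ins, not the parameters used in the PLoS study.
SEARCH_SPACE: Dict[str, List[int]] = {
    "n_filters": [16, 32, 64, 128],
    "filter_size": [3, 5, 7, 9],
    "pool_size": [3, 5, 7],
    "norm_size": [3, 5, 7, 9],
}

Config = Dict[str, int]


def sample_config(rng: random.Random) -> Config:
    """Draw one candidate model by picking a random value for every parameter."""
    return {name: rng.choice(values) for name, values in SEARCH_SPACE.items()}


def screen(score: Callable[[Config], float], n_candidates: int,
           top_k: int, seed: int = 0) -> List[Tuple[float, Config]]:
    """Score n_candidates random configurations and return the top_k, best first."""
    rng = random.Random(seed)
    results: List[Tuple[float, Config]] = []
    for _ in range(n_candidates):
        cfg = sample_config(rng)
        # Each evaluation is independent of the others, so this loop
        # could be spread across many devices in parallel.
        results.append((score(cfg), cfg))
    # Sort on the score only, so ties never try to compare dicts.
    results.sort(key=lambda pair: pair[0], reverse=True)
    return results[:top_k]


def toy_score(cfg: Config) -> float:
    """Hypothetical placeholder for 'train the model and measure test accuracy'."""
    return cfg["n_filters"] / 128 - abs(cfg["filter_size"] - 5) * 0.1


if __name__ == "__main__":
    for s, cfg in screen(toy_score, n_candidates=1000, top_k=5):
        print(round(s, 3), cfg)
```

Because every candidate is scored independently, this kind of screen is well suited to spreading across many processors at once, which is the sort of workload dozens of GPUs and PlayStation 3 consoles can absorb. For scale, two years is about 104 weeks, so finishing the screen in one week rather than two years implies a speed-up on the order of 100x, consistent with Pinto's figure.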

Next steps: The researchers say that their high-throughput approach could be applied to other areas of computer vision, such as face identification, object tracking, pedestrian detection for automotive applications, and gesture and action recognition. Moreover, as scientists learn which components make a good artificial vision system, they can use these clues to better understand the human brain as well.

Watch how the MIT/Harvard researchers are finding a better way for computers to 'see': http://www.rowland.harvard.edu/rjf/cox/plos_video.html

Source: Pinto N, Doukhan D, DiCarlo JJ, Cox DD. A high-throughput screening approach to good forms of biologically-inspired visual representation. PLoS Computational Biology. Nov. 26, 2009. Read the article here: http://www.ploscompbiol.org/doi/pcbi.1000579

Funding: National Institutes of Health, McKnight Endowment for Neuroscience, Jerry and Marge Burnett, the McGovern Institute for Brain Research at MIT, and the Rowland Institute at Harvard. Hardware support provided by the NVIDIA Corporation.

More Information:

http://www.mit.eduAll latest news from the category: Information Technology

Here you can find a summary of innovations in the fields of information and data processing and up-to-date developments on IT equipment and hardware.

This area covers topics such as IT services, IT architectures, IT management and telecommunications.

Newest articles

A universal framework for spatial biology

SpatialData is a freely accessible tool to unify and integrate data from different omics technologies accounting for spatial information, which can provide holistic insights into health and disease. Biological processes…

How complex biological processes arise

A $20 million grant from the U.S. National Science Foundation (NSF) will support the establishment and operation of the National Synthesis Center for Emergence in the Molecular and Cellular Sciences (NCEMS) at…

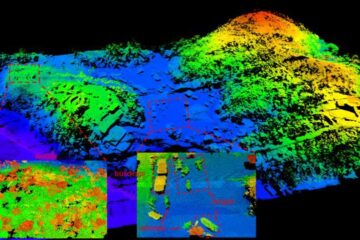

Airborne single-photon lidar system achieves high-resolution 3D imaging

Compact, low-power system opens doors for photon-efficient drone and satellite-based environmental monitoring and mapping. Researchers have developed a compact and lightweight single-photon airborne lidar system that can acquire high-resolution 3D…