Uncovering decades of questionable investments

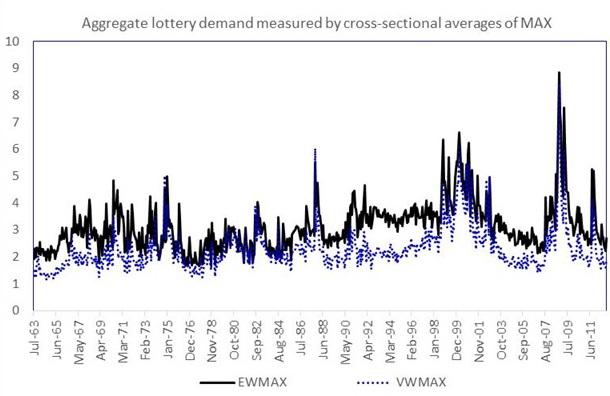

The plot shows the time-series of aggregate lottery demand. Aggregate lottery demand in any month t is measured as the equal-weighted (EWMAX) or value-weighted (VWMAX) average value of MAX across all stocks in the sample in month t. Credit: Murray, Bali, Brown and Tang

One of the key principles in asset pricing — how we value everything from stocks and bonds to real estate — is that investments with high risk should, on average, have high returns.

“If you take a lot of risk, you should expect to earn more for it,” said Scott Murray, professor of finance at Georgia State University. “To go deeper, the theory says that systematic risk, or risk that is common to all investments” (also known as 'beta') “is the kind of risk that investors should care about.”

This theory was first articulated in the 1960s by Sharpe (1964), Lintner (1965), and Mossin (1966). However, empirical work dating as far back as 1972 didn't support the theory. In fact, many researchers found that stocks with high risk often do not deliver higher returns, even in the long run.

“It's the foundational theory of asset pricing but has little empirical support in the data. So, in a sense, it's the big question,” Murray said.

ISOLATING THE CAUSE

In a recent paper in the Journal of Financial and Quantitative Analysis, Murray and his co-authors, Turan Bali (Georgetown University), Stephen Brown (Monash University) and Yi Tang (Fordham University), argue that the reason for this 'beta anomaly' is that stocks with high betas also happen to have lottery-like properties: they offer the possibility of becoming big winners. Investors who are attracted to the lottery characteristics of these stocks push their prices higher than theory would predict, thereby lowering their future returns.

To support this hypothesis, they analyzed stock prices from June 1963 to December 2012. For every month, they calculated the beta of each stock (up to 5,000 stocks per month) by running a regression (a statistical way of estimating the relationships among variables) of the stock's return on the return of the market portfolio. They then sorted the stocks into 10 groups based on their betas and examined the performance of stocks in the different groups.
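The two steps described above, estimating a beta by regression and then sorting stocks into groups by beta, can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' code: the function names `estimate_beta` and `sort_into_groups` are hypothetical, the returns are made-up toy numbers, and the sketch regresses plain returns because the article does not say which return window or adjustments the study used.

```python
from statistics import mean


def estimate_beta(stock_returns, market_returns):
    """Slope of a one-variable OLS regression of stock returns on market
    returns, which equals cov(stock, market) / var(market)."""
    mean_s = mean(stock_returns)
    mean_m = mean(market_returns)
    cov = sum((s - mean_s) * (m - mean_m)
              for s, m in zip(stock_returns, market_returns))
    var = sum((m - mean_m) ** 2 for m in market_returns)
    return cov / var


def sort_into_groups(value_by_ticker, n_groups=10):
    """Rank tickers by value and split them into n_groups buckets of
    near-equal size. Returns {ticker: group index}, 0 = lowest values."""
    ranked = sorted(value_by_ticker, key=value_by_ticker.get)
    n = len(ranked)
    return {ticker: (rank * n_groups) // n
            for rank, ticker in enumerate(ranked)}


# Toy data (assumption): three hypothetical stocks that move 0.5x, 1x
# and 2x as much as the market.
market = [0.01, -0.02, 0.015, 0.03, -0.01]
stocks = {
    "LOW": [0.5 * r for r in market],
    "MID": [1.0 * r for r in market],
    "HIGH": [2.0 * r for r in market],
}

betas = {ticker: estimate_beta(r, market) for ticker, r in stocks.items()}
# Three groups instead of ten, because the toy sample has three stocks.
groups = sort_into_groups(betas, n_groups=3)
```

With a real sample, the same sort would be repeated every month, and the average subsequent return of each group would then be compared across groups.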

“Theory predicts that stocks with high betas do better in the long run than stocks with low betas,” Murray said. “Doing our analysis, we find that there really isn't a difference in the performance of stocks with different betas.”

They then analyzed the data again and, for each stock in each month, calculated how lottery-like the stock was. Once again, they sorted the stocks into 10 groups based on their betas and repeated the analysis. This time, however, they imposed a constraint that required each of the 10 groups to hold stocks with similar lottery characteristics. By making sure the groups had matching lottery properties, they controlled for the possibility that their original tests failed to detect a difference in performance between groups because stocks in different beta groups have different lottery characteristics.
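One common way to build beta groups with similar lottery characteristics is a dependent double sort: first bucket the stocks by the lottery measure, then sort by beta inside each lottery bucket, and finally average each beta group across the lottery buckets. The sketch below assumes that approach; the article does not spell out the exact procedure, so the function names, field names and toy numbers are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def split_ranked(items, key, n_groups):
    """Sort items by key and split them into n_groups near-equal buckets."""
    ranked = sorted(items, key=key)
    n = len(ranked)
    buckets = [[] for _ in range(n_groups)]
    for rank, item in enumerate(ranked):
        buckets[(rank * n_groups) // n].append(item)
    return buckets


def beta_returns_controlling_for_lottery(stocks, n_groups):
    """stocks: list of dicts with 'beta', 'lottery' and 'next_return' keys.
    Returns the average next-period return of each beta group, where every
    beta group draws equally from every lottery bucket.
    Assumes each lottery bucket holds at least n_groups stocks."""
    returns_by_beta_group = defaultdict(list)
    for lottery_bucket in split_ranked(stocks, lambda s: s["lottery"], n_groups):
        beta_buckets = split_ranked(lottery_bucket, lambda s: s["beta"], n_groups)
        for group, bucket in enumerate(beta_buckets):
            if bucket:
                returns_by_beta_group[group].append(
                    mean(s["next_return"] for s in bucket))
    return [mean(returns_by_beta_group[g]) for g in range(n_groups)]


# Toy data (assumption): four hypothetical stocks, two groups per sort.
toy_stocks = [
    {"beta": 0.5, "lottery": 0.01, "next_return": 0.01},
    {"beta": 1.5, "lottery": 0.02, "next_return": 0.03},
    {"beta": 0.6, "lottery": 0.08, "next_return": -0.01},
    {"beta": 1.6, "lottery": 0.09, "next_return": 0.01},
]
avg_returns = beta_returns_controlling_for_lottery(toy_stocks, n_groups=2)
```

Because each beta group contains one slice from every lottery bucket, the groups differ in beta while staying close to one another on the lottery measure, which is the kind of control the passage above describes.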

“We found that after controlling for lottery characteristics, the seminal theory is empirically supported,” Murray said.

In other words: price pressure from investors who want lottery-like stocks is what causes the theory to fail. When this factor is removed, asset pricing works according to theory.

IDENTIFYING THE SOURCE

Other economists had pointed to a different factor, leverage constraints, as the main cause of this market anomaly. They believed that large investors such as mutual funds and pension funds, which are not allowed to borrow money to buy large amounts of lower-risk stocks, are forced to buy higher-risk stocks to generate large profits, thus distorting the market.

However, an additional analysis of the data by Murray and his collaborators found that the lottery-like stocks were most often held by individual investors. If leverage constraints were the cause of the beta anomaly, mutual funds and pensions would be the main owners driving up demand.

The team's research won the prestigious Jack Treynor Prize, given each year by the Q Group, which recognizes superior academic working papers with potential applications in the fields of investment management and financial markets.

The work is in line with ideas like prospect theory, first articulated by Nobel-winning behavioral economist Daniel Kahneman and his collaborator Amos Tversky, which contends that investors typically overestimate the probability of extreme events, both losses and gains.

“The study helps investors understand how they can avoid the pitfalls if they want to generate returns by taking more risks,” Murray said.

To run the systematic analyses of the large financial datasets, Murray used the Wrangler supercomputer at the Texas Advanced Computing Center (TACC). Supported by a grant from the National Science Foundation, Wrangler was built to enable data-driven research nationwide. Using Wrangler significantly reduced the time-to-solution for Murray.

“If there are 500 months in the sample, I can send one month to one core, another month to another core, and instead of computing 500 months separately, I can do them in parallel and have reduced the human time by many orders of magnitude,” he said.
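The month-by-month parallelism Murray describes works because each month can be analyzed independently of the others. A minimal sketch of that pattern follows, using Python's standard `concurrent.futures` module. Everything here is an assumption for illustration: `analyze_month` is a hypothetical placeholder for the real per-month computation, the data are made up, and a thread pool is used only so the example runs anywhere. For CPU-heavy work, passing `ProcessPoolExecutor` as `executor_cls` would spread the months across separate cores.

```python
from concurrent.futures import ThreadPoolExecutor


def analyze_month(month_item):
    """Placeholder per-month job: the average return for that month."""
    month, returns = month_item
    return month, sum(returns) / len(returns)


def analyze_all_months(data_by_month, max_workers=4,
                       executor_cls=ThreadPoolExecutor):
    """Hand each month to a worker in the pool and collect the results
    into a {month: result} dictionary."""
    with executor_cls(max_workers=max_workers) as pool:
        return dict(pool.map(analyze_month, data_by_month.items()))


# Toy data (assumption): three months with two hypothetical returns each.
data_by_month = {
    "1963-06": [0.01, 0.03],
    "1963-07": [-0.02, 0.02],
    "1963-08": [0.04, 0.00],
}
results = analyze_all_months(data_by_month)
```

Since no month depends on another month's output, the workers never need to communicate, which is why the job spreads so easily across many cores.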

The size of the data for the lottery-effect research was not enormous, and the analysis could have been run on a desktop computer or small cluster, albeit taking more time. However, other problems that Murray is working on, for instance research on options, have much higher computational requirements and need supercomputers like those at TACC.

“We're living in the big data world,” he said. “People are trying to grapple with this in financial economics as they are in every other field and we're just scratching the surface. This is something that's going to grow more and more as the data becomes more refined and technologies such as text processing become more prevalent.”

Though historically used for problems in physics, chemistry and engineering, advanced computing is starting to be widely used — and to have a big impact — in economics and the social sciences.

According to Chris Jordan, manager of the Data Management & Collections group at TACC, Murray's research is a great example of the kinds of challenges Wrangler was designed to address.

“It relies on database technology that isn't typically available in high-performance computing environments, and it requires extremely high-performance I/O capabilities. It is able to take advantage of both our specialized software environment and the half-petabyte flash storage tier to generate results that would be difficult or impossible on other systems,” Jordan said. “Dr. Murray's work also relies on a corpus of data which acts as a long-term resource in and of itself — a notion we have been trying to promote with Wrangler.”

Beyond its importance to investors and financial theorists, the research has a broad societal impact, Murray contends.

“For our society to be as prosperous as possible, we need to allocate our resources efficiently. How much oil do we use? How many houses do we build? A large part of that is understanding how and why money gets invested in certain things,” he explained. “The objective of this line of research is to understand the trade-offs that investors consider when making these sorts of decisions.”
