More power for brain simulations

Within the new institution, the “Bernstein Facility Simulation and Database Technology” will be established as part of the National Bernstein Network Computational Neuroscience, which is funded by the Federal Ministry of Education and Research (BMBF). Because it provides advisory support to the network’s scientists, the new facility will be closely integrated into the field of computational neuroscience in Germany.

Computer simulations and theoretical models are increasingly important tools for understanding the complex processes of our brain. The Simulation Laboratory helps neuroscientists from all over Europe make optimal use of the Jülich supercomputers. It will also spur the development of theoretical models and standardisation in brain research.

The most powerful computer in the world sits in our head. About 100 billion nerve cells interact in the brain. Simulations are increasingly used to investigate the rules by which these cells and brain areas communicate with each other, and how neurological diseases alter that communication. But the more realistic the simulations, the more computationally demanding they become. In addition, neuroscientific methods that produce very large amounts of data in a very short time are gaining in importance. Such high-throughput methods require new approaches to data processing. The researchers at Europe’s largest computing center in Jülich will therefore also develop methods for analysing ever larger neuroscientific data sets.

To fully exploit the performance of Jülich supercomputers such as JUGENE, simulations of brain processes must be adapted to the specific requirements and capabilities of these machines. “Today's supercomputers consist of hundreds of thousands of cores. To distribute a simulation efficiently across these processors, we need completely new data structures and communication algorithms compared with those we used for smaller systems,” explains Markus Diesmann, Professor for Computational Neuroscience at the Research Center Jülich.

With the support of experts in computational neuroscience, data analysis, anatomy, virtual reality and supercomputing, neuroscientists can adapt and optimise their programs. By improving the standardisation of model descriptions, the researchers hope to achieve both better comparability between models and simpler combination of different sub-models.

By integrating the “Bernstein Facility Simulation and Database Technology” into the National Bernstein Network Computational Neuroscience, the facility is well connected to the German neuroscience research landscape from the outset. The Bernstein Network connects more than 200 research groups that collect large amounts of relevant neurobiological data and use complex models and simulations. These simulations rely on the long-term availability and development of simulation software, and some of them can only be run on the Jülich supercomputers. The cooperation with the Bernstein Network is an excellent example of how long-term institutional funding from the Helmholtz Association and BMBF project funding can complement each other towards a common goal: understanding the brain.

For further information, please contact:

Prof. Dr. Markus Diesmann

diesmann@fz-juelich.de

Institute of Neuroscience and Medicine (INM-6)

Computational and Systems Neuroscience

Research Center Jülich

52425 Jülich

Tel: +49 2461 61-9301

Media Contact

All latest news from the category: Health and Medicine

This subject area encompasses research and studies in the field of human medicine.

Among the wide-ranging list of topics covered here are anesthesiology, anatomy, surgery, human genetics, hygiene and environmental medicine, internal medicine, neurology, pharmacology, physiology, urology and dental medicine.

Newest articles

A universal framework for spatial biology

SpatialData is a freely accessible tool to unify and integrate data from different omics technologies accounting for spatial information, which can provide holistic insights into health and disease. Biological processes…

How complex biological processes arise

A $20 million grant from the U.S. National Science Foundation (NSF) will support the establishment and operation of the National Synthesis Center for Emergence in the Molecular and Cellular Sciences (NCEMS) at…

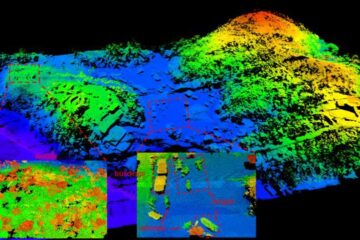

Airborne single-photon lidar system achieves high-resolution 3D imaging

Compact, low-power system opens doors for photon-efficient drone and satellite-based environmental monitoring and mapping. Researchers have developed a compact and lightweight single-photon airborne lidar system that can acquire high-resolution 3D…