Learning by osmosis

When you listen to someone speaking, it may seem like the words are segmented by pauses, much like the words on this page are separated by spaces. But in reality, you hear a continuous stream of sounds that your brain must organize into meaningful chunks.

One process that mediates this ability is called statistical learning, by which the brain automatically keeps track of how often events, such as sounds, occur together. Now a team of RIKEN scientists has found a signature pattern of brain activity that can predict a person’s degree of achievement in this type of task [1].

The team, led by Kazuo Okanoya, presented volunteers with a 20-minute recording of an artificial language, which they heard passively in three 6.6-minute sessions. While the recording played, the participants’ brain activity was recorded using electroencephalography (EEG). The researchers then analyzed how the EEG patterns related to events in the recorded language.

This language, instead of being composed of pronounceable syllables, contained only tones, similar to keyboard notes. “We used nonsense tone words to detect basic perceptual processes that are independent of linguistic faculty,” explains team member Dilshat Abla. This way, the researchers were able to focus on the brain-activity signature of general statistical learning, rather than on the specific example of language. The recording heard by the participants consisted of six ‘words’ containing three tones each, but because the words were played one after another without gaps, the word boundaries would not have been immediately obvious. The participants were told to relax and listen to the streaming sound, and at the end of the experiment, they were tested on which tone triplets came from their recording and which were randomly generated.
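To make the idea of tracking co-occurring sounds concrete, here is a minimal Python sketch of a gapless tone stream and the transition statistics a listener could in principle pick up. It is an illustration only, not the researchers' stimulus or analysis: the tone labels (integers 0 to 17), the rule that each tone belongs to a single word, the no-immediate-repeat ordering, and the helper names `make_stream` and `transition_probs` are all assumptions made for this example.

```python
import random
from collections import Counter

# Six hypothetical tone "words" of three tones each. Tones are plain integers
# here, and each tone is assumed to belong to one word only (an assumption of
# this sketch, not a detail taken from the study).
WORDS = [tuple(range(i, i + 3)) for i in range(0, 18, 3)]

def make_stream(words, n_words, seed=0):
    """Concatenate randomly chosen words without gaps, never repeating a word twice in a row."""
    rng = random.Random(seed)
    stream, prev = [], None
    for _ in range(n_words):
        word = rng.choice([w for w in words if w != prev])
        stream.extend(word)
        prev = word
    return stream

def transition_probs(stream):
    """Estimate P(next tone | current tone) from adjacent pairs in the stream."""
    pair_counts = Counter(zip(stream, stream[1:]))
    first_counts = Counter(stream[:-1])
    return {pair: n / first_counts[pair[0]] for pair, n in pair_counts.items()}

stream = make_stream(WORDS, n_words=600)
probs = transition_probs(stream)

# With the integer labels above, two tones share a word when tone // 3 matches.
within = [p for (a, b), p in probs.items() if a // 3 == b // 3]
across = [p for (a, b), p in probs.items() if a // 3 != b // 3]

print("lowest within-word transition probability:", min(within))
print("highest across-boundary transition probability:", round(max(across), 2))
```

Under these assumptions, every tone inside a word is always followed by the same tone, while the last tone of a word can be followed by the first tone of any of the other five words. That drop in predictability is the kind of cue that could mark a word boundary even though the stream contains no pauses.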

The participants succeeded in this discrimination, which revealed to the researchers that they had performed statistical learning without exerting conscious effort. Those who earned average scores in this test showed a distinctive pattern of brain activity in the third recording session. These electric signatures, known as event-related potentials or ERPs, tended to occur 400 milliseconds after the start of a new tone word. Those who scored the lowest did not exhibit these ERPs in any session, suggesting they were not segmenting the start of each word as effectively.

The highest-scoring volunteers did show these ERPs, but only in their first session. Abla explains that the effect is “largest during the discovery phase of the statistical structure,” and represents the process rather than the result of statistical learning.
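Event-related potentials such as those described above are typically obtained by cutting the EEG into segments time-locked to an event and averaging them, so that activity unrelated to the event cancels out. The Python sketch below shows that averaging step on synthetic data. The sampling rate, the one-second spacing of word onsets, the size and polarity of the simulated deflection, and the function names are all assumptions chosen for illustration; none of them come from the study.

```python
import math
import random

FS = 100          # sampling rate in Hz (assumed for this sketch)
WORD_LEN = 100    # samples between word onsets, i.e. 1 s (assumed)
PEAK_DELAY = 40   # 400 ms after word onset at 100 Hz

def synthetic_eeg(n_words, seed=0):
    """Gaussian noise plus a small bump 400 ms after every word onset."""
    rng = random.Random(seed)
    signal = [rng.gauss(0.0, 1.0) for _ in range(n_words * WORD_LEN)]
    for w in range(n_words):
        for k in range(-4, 5):
            # Bump polarity and amplitude are arbitrary choices for this example.
            signal[w * WORD_LEN + PEAK_DELAY + k] += 2.0 * math.exp(-(k / 1.5) ** 2)
    return signal

def average_epochs(signal, onsets, length):
    """Average fixed-length segments that start at each onset."""
    epochs = [signal[o:o + length] for o in onsets if o + length <= len(signal)]
    return [sum(samples) / len(epochs) for samples in zip(*epochs)]

n_words = 300
eeg = synthetic_eeg(n_words)
onsets = [w * WORD_LEN for w in range(n_words)]
erp = average_epochs(eeg, onsets, WORD_LEN)

# Locate the largest deflection in the averaged waveform.
peak_index = max(range(len(erp)), key=lambda i: abs(erp[i]))
peak_ms = 1000 * peak_index / FS
print(f"largest deflection at about {peak_ms:.0f} ms after word onset")
```

In a single simulated segment the bump is hard to tell apart from the noise; it stands out only after many segments aligned to word onsets are averaged. This also shows why a listener who is not registering word onsets would be expected to produce no consistent response at that latency.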

1. Abla, D., Katahira, K., & Okanoya, K. On-line assessment of statistical learning by event-related potentials. Journal of Cognitive Neuroscience 20, 952–964 (2008).

The corresponding author for this highlight is based at the RIKEN Laboratory for Biolinguistics

Media Contact

All latest news from the category: Health and Medicine

This subject area encompasses research and studies in the field of human medicine.

Among the wide-ranging list of topics covered here are anesthesiology, anatomy, surgery, human genetics, hygiene and environmental medicine, internal medicine, neurology, pharmacology, physiology, urology and dental medicine.

Newest articles

A universal framework for spatial biology

SpatialData is a freely accessible tool to unify and integrate data from different omics technologies accounting for spatial information, which can provide holistic insights into health and disease. Biological processes…

How complex biological processes arise

A $20 million grant from the U.S. National Science Foundation (NSF) will support the establishment and operation of the National Synthesis Center for Emergence in the Molecular and Cellular Sciences (NCEMS) at…

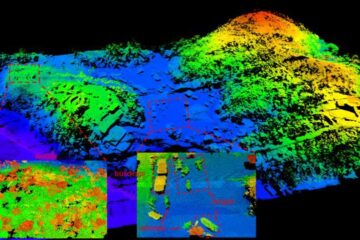

Airborne single-photon lidar system achieves high-resolution 3D imaging

Compact, low-power system opens doors for photon-efficient drone and satellite-based environmental monitoring and mapping. Researchers have developed a compact and lightweight single-photon airborne lidar system that can acquire high-resolution 3D…