New smartphone app automatically tags photos

The system works by taking advantage of the multiple sensors on a mobile phone, as well as those of other mobile phones in the vicinity.

Dubbed TagSense, the new app was developed by students from Duke University and the University of South Carolina (USC) and unveiled at the ninth Association for Computing Machinery's International Conference on Mobile Systems, Applications and Services (MobiSys), being held in Washington, D.C.

“In our system, when you take a picture with a phone, at the same time it senses the people and the context by gathering information from all the other phones in the area,” said Xuan Bao, a Ph.D. student in computer science at Duke who received his master's degree at Duke in electrical and computer engineering.

Bao and Chuan Qin, a visiting graduate student from USC, developed the app working with Romit Roy Choudhury, assistant professor of electrical and computer engineering at Duke's Pratt School of Engineering. Qin and Bao are currently involved in summer internships at Microsoft Research.

“Phones have many different kinds of sensors that you can take advantage of,” Qin said. “They collect diverse information like sound, movement, location and light. By putting all that information together, you can sense the setting of a photograph and describe its attributes.”

By using information about the environment in which a photograph is taken, the students believe they can tag it more accurately than facial recognition alone could. Such information about the photograph as a whole also provides additional details that can be searched later.

For example, the phone's built-in accelerometer can tell whether a person is standing still for a posed photograph, bowling or even dancing. Light sensors in the phone's camera can tell whether the shot is being taken indoors or outdoors, and whether the day is sunny or cloudy. The system can also approximate environmental conditions, such as snow or rain, by looking up the weather conditions at that time and location. The microphone can detect whether a person in the photograph is laughing or quiet. All of these attributes are then assigned to each photograph, the students said.
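The mapping from sensor readings to tags described above can be sketched roughly as follows. This is only an illustration, not the TagSense implementation: the `SensorSnapshot` fields, the `tags_from_sensors` function and every threshold are hypothetical, and a real system would presumably rely on trained classifiers rather than fixed cut-offs.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class SensorSnapshot:
    """Hypothetical readings captured at the moment a photo is taken."""
    accel_variance: float    # variance of accelerometer magnitude (made-up units)
    light_lux: float         # ambient light level
    sound_level_db: float    # microphone loudness
    laughter_detected: bool  # output of some assumed audio classifier


def tags_from_sensors(s: SensorSnapshot) -> set[str]:
    """Turn raw readings into descriptive tags using illustrative thresholds."""
    tags: set[str] = set()

    # Motion: very little movement suggests a posed shot, a lot suggests dancing.
    if s.accel_variance < 0.05:
        tags.add("posing")
    elif s.accel_variance < 1.0:
        tags.add("moving")
    else:
        tags.add("dancing")

    # Light: bright scenes are assumed to be outdoors (assumed cut-off).
    tags.add("outdoors" if s.light_lux > 1000 else "indoors")

    # Sound: laughter takes priority, otherwise a low level is tagged as quiet.
    if s.laughter_detected:
        tags.add("laughing")
    elif s.sound_level_db < 40:
        tags.add("quiet")

    return tags


snapshot = SensorSnapshot(accel_variance=2.5, light_lux=200.0,
                          sound_level_db=70.0, laughter_detected=True)
print(sorted(tags_from_sensors(snapshot)))  # ['dancing', 'indoors', 'laughing']
```

Keeping each sensor's rule separate means a tag from one source, such as the microphone, can be refined without touching the others.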

Bao pointed out that with multiple tags describing more than just a particular person's name, it would be easier not only to organize an album of photographs for future reference, but also to find particular photographs years later. With the exploding number of digital pictures in the cloud and on our personal computers, the ability to easily search and retrieve desired pictures will be valuable in the future, he said.

“So, for example, if you've taken a bunch of photographs at a party, it would be easy at a later date to search for just photographs of happy people dancing,” Qin said. “Or more specifically, what if you just wanted to find photographs only of Mary dancing at the party and didn't want to look through all the photographs of Mary?”
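The kind of search Qin describes can be pictured as a simple filter over tag sets. The sketch below is a hypothetical illustration rather than anything taken from TagSense: the `search` function, the file names and the tags in `album` are all invented for the example.

```python
from __future__ import annotations


def search(photos: dict[str, set[str]], *required: str) -> list[str]:
    """Return the names of photos that carry every required tag."""
    wanted = set(required)
    # A photo matches when the wanted tags are a subset of its own tags.
    return sorted(name for name, tags in photos.items() if wanted <= tags)


# Invented album: each photo maps to the tags assigned when it was taken.
album = {
    "img_001.jpg": {"Mary", "dancing", "indoors"},
    "img_002.jpg": {"Mary", "posing", "indoors"},
    "img_003.jpg": {"John", "dancing", "laughing"},
}

print(search(album, "Mary", "dancing"))  # ['img_001.jpg']
```

Because each photo carries several tags, combining a name with an activity narrows the results without scanning every picture of that person.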

These added details of automatic tagging could help complement existing tagging applications, according to senior researcher Roy Choudhury.

“While facial recognition programs continue to improve, we believe that the ability to identify photographs based on the setting of the photograph can lead to a richer, more detailed way to tag photographs,” Roy Choudhury said. “TagSense was compared to Apple's iPhoto and Google's Picasa, and showed that it can provide greater sophistication in tagging photographs.”

The students envision that TagSense would most likely be adopted by groups of people, such as friends, who would “opt in,” allowing their mobile phone capabilities to be harnessed when members of the group were together. Importantly, Roy Choudhury added, TagSense would not request sensed data from nearby phones that do not belong to this group, thereby protecting users' privacy.

The experiments were conducted using eight Google Nexus One mobile phones on more than 200 photos taken at various locations across the Duke campus, including classroom buildings, gyms and the art museum.

The current application is a prototype, and the researchers believe that a commercial product could be available in a few years.

Srihari Nelakuditi, associate professor of computer science and engineering at USC, was also a member of the research team. The research is supported by the National Science Foundation. Roy Choudhury's Systems Networking Research Group is also supported by Microsoft, Nokia, Verizon, and Cisco.

More information: http://www.duke.edu

Here you can find a summary of innovations in the fields of information and data processing and up-to-date developments on IT equipment and hardware.

This area covers topics such as IT services, IT architectures, IT management and telecommunications.

Newest articles

A universal framework for spatial biology

SpatialData is a freely accessible tool to unify and integrate data from different omics technologies accounting for spatial information, which can provide holistic insights into health and disease. Biological processes…

How complex biological processes arise

A $20 million grant from the U.S. National Science Foundation (NSF) will support the establishment and operation of the National Synthesis Center for Emergence in the Molecular and Cellular Sciences (NCEMS) at…

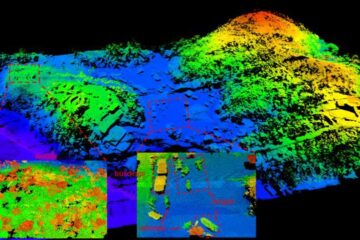

Airborne single-photon lidar system achieves high-resolution 3D imaging

Compact, low-power system opens doors for photon-efficient drone and satellite-based environmental monitoring and mapping. Researchers have developed a compact and lightweight single-photon airborne lidar system that can acquire high-resolution 3D…