Shift in simulation superiority

Science and engineering are advancing rapidly in part due to ever more powerful computer simulations, yet the most advanced supercomputers require programming skills that all too few U.S. researchers possess. At the same time, affordable computers and committed national programs outside the U.S. are eroding American competitiveness in a number of simulation-driven fields.

These are some of the key findings in the International Assessment of Research and Development in Simulation-Based Engineering and Science, released on Apr. 22, 2009, by the World Technology Evaluation Center (WTEC).

“The startling news was how quickly our assumptions have to change,” said Phillip Westmoreland, program director for combustion, fire and plasma systems at the National Science Foundation (NSF) and one of the sponsors of the report. “Because computer chip speeds aren't increasing, hundreds and thousands of chips are being ganged together, each one with many processors. New ways of programming are necessary.”

Like other WTEC studies, this one was led by a team of leading researchers from a range of simulation science and engineering disciplines and involved site visits to research facilities around the world.

The nearly 400-page, multi-agency report highlights several areas in which the U.S. still maintains a competitive edge, including the development of novel algorithms, but also highlights endeavors that are increasingly driven by efforts in Europe or Asia, such as the creation and simulation of new materials from first principles.

“Some of the new high-powered computers are as common as gaming computers, so key breakthroughs and leadership could come from anywhere in the world,” added Westmoreland. “Last week's research-directions workshop brought together engineers and scientists from around the country, developing ideas that would keep the U.S. at the vanguard as we face these changes.”

Sharon Glotzer of the University of Michigan chaired the panel of experts that executed the studies of the Asian, European and U.S. simulation research activities. Peter Cummings of both Vanderbilt University and Oak Ridge National Laboratory co-authored the report with Glotzer and seven other panelists, and the two co-chaired the Apr. 22-23, 2009, workshop that provided agencies with initial guidance on strategic directions.

“Progress in simulation-based engineering and science holds great promise for the pervasive advancement of knowledge and understanding through discovery,” said Clark Cooper, program director for materials and surface engineering at NSF and also a sponsor of the report. “We expect future developments to continue to enhance prediction and decision making in the presence of uncertainty.”

The WTEC study was funded by the National Science Foundation, Department of Defense, National Aeronautics and Space Administration, National Institutes of Health, National Institute of Standards and Technology and the Department of Energy.

More Information:

http://www.nsf.gov

Here you can find a summary of innovations in the fields of information and data processing and up-to-date developments on IT equipment and hardware.

This area covers topics such as IT services, IT architectures, IT management and telecommunications.

Newest articles

Simplified diagnosis of rare eye diseases

Uveitis experts provide an overview of an underestimated imaging technique. Uveitis is a rare inflammatory eye disease. Posterior and panuveitis in particular are associated with a poor prognosis and a…

Targeted use of enfortumab vedotin for the treatment of advanced urothelial carcinoma

New study identifies NECTIN4 amplification as a promising biomarker – Under the leadership of PD Dr. Niklas Klümper, Assistant Physician at the Department of Urology at the University Hospital Bonn…

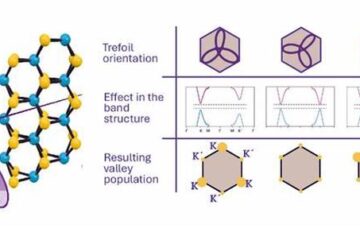

A novel universal light-based technique

…to control valley polarization in bulk materials. An international team of researchers reports in Nature a new method that achieves valley polarization in centrosymmetric bulk materials in a non-material-specific way…