SWAN system to help blind and firefighters navigate environment

Imagine being blind and trying to find your way around a city you've never visited before. That can be challenging even for a sighted person. Georgia Tech researchers are developing a wearable computing system called the System for Wearable Audio Navigation (SWAN), designed to help the visually impaired, firefighters, soldiers and others navigate unknown territory, particularly when vision is obstructed or impaired. The SWAN system, consisting of a small laptop, a proprietary tracking chip, and bone-conduction headphones, provides audio cues to guide the person from place to place, with or without vision.

“We are excited by the possibilities for people who are blind and visually impaired to use the SWAN auditory wayfinding system,” said Susan B. Green, executive director, Center for the Visually Impaired in Atlanta. “Consumer involvement is crucial in the design and evaluation of successful assistive technology, so CVI is happy to collaborate with Georgia Tech to provide volunteers who are blind and visually impaired for focus groups, interviews and evaluation of the system.”

Collaboration

In an unusual collaboration, Frank Dellaert, assistant professor in the Georgia Tech College of Computing, and Bruce Walker, assistant professor in Georgia Tech's School of Psychology and College of Computing, met five years ago at new faculty orientation and discussed how their respective areas of expertise (determining the location of robots, and audio interfaces) were complementary and could be married in a project to assist the blind. The project progressed slowly as the researchers worked on it as time allowed and sought funding. Early support came through a seed grant from the Graphics, Visualization and Usability (GVU) Center at Georgia Tech, and recently Walker and Dellaert received a $600,000 grant from the National Science Foundation to further develop SWAN.

Dellaert's artificial intelligence research focuses on tracking and determining the location of robots and developing applications to help robots determine where they are and where they need to go. There are similar challenges when it comes to tracking and guiding robots and people. Dellaert's robotics research usually focuses on military applications since that is where most of the funding is available.

“SWAN is a satisfying project because we are looking at how to use technology originally developed for military use for peaceful purposes,” says Dellaert. “Currently, we can effectively localize the person outdoors with GPS data, and we have a working prototype using computer vision to see street level details not included in GPS, such as light posts and benches. The challenge is integrating all the information from all the various sensors in real time so you can accurately guide the user as they move toward their destination.”

Walker's expertise in human-computer interaction and interface design includes developing auditory displays that convey data through sonification, or sound.

“By using a modular approach in building a system useful for the visually impaired, we can easily add new sensing technologies, while also making it flexible enough for firefighters and soldiers to use in low visibility situations,” says Walker. “One of our challenges has been designing sound beacons easily understood by the user but that are not annoying or in competition with other sounds they need to hear such as traffic noise.”

SWAN System Overview

The current SWAN prototype consists of a small laptop computer worn in a backpack, a tracking chip, additional sensors including GPS (global positioning system), a digital compass, a head tracker, four cameras and a light sensor, and special headphones called bone phones. The researchers selected bone phones because they send auditory signals via vibrations through the skull without plugging the user's ears, an especially important feature for the blind, who rely heavily on their hearing. The sensors and tracking chip worn on the head send data to the SWAN applications on the laptop, which compute the user's location and the direction the user is looking, map the travel route, then send 3-D audio cues to the bone phones to guide the traveler along a path to the destination.
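As a rough illustration of the last step in that pipeline, the sketch below turns a position fix, a compass heading and the next waypoint into the direction in which an audio cue would be placed. It is not SWAN's code: the function names are hypothetical, and it assumes only that the position and waypoint are given as latitude and longitude in degrees and that the head heading is in degrees clockwise from north. The bearing calculation is the standard great-circle initial-bearing formula.

```python
import math

def initial_bearing_deg(lat1, lon1, lat2, lon2):
    """Compass bearing (degrees clockwise from north) from point 1 to point 2."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlon = math.radians(lon2 - lon1)
    y = math.sin(dlon) * math.cos(phi2)
    x = (math.cos(phi1) * math.sin(phi2)
         - math.sin(phi1) * math.cos(phi2) * math.cos(dlon))
    return math.degrees(math.atan2(y, x)) % 360.0

def relative_bearing_deg(target_bearing, head_heading):
    """Signed angle from the facing direction to the target.

    Result lies in [-180, 180); positive means the target is to the right.
    """
    return (target_bearing - head_heading + 180.0) % 360.0 - 180.0

def cue_direction_deg(user_lat, user_lon, head_heading, wp_lat, wp_lon):
    """Direction, relative to the head, in which to place the audio cue."""
    bearing = initial_bearing_deg(user_lat, user_lon, wp_lat, wp_lon)
    return relative_bearing_deg(bearing, head_heading)
```

For example, a user facing north with a waypoint due east would get a cue direction of about 90 degrees, that is, directly to the right.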

The 3-D cues sound like they are coming from about 1 meter away from the user's body, in whichever direction the user needs to travel. The 3-D audio, a well-established sound effect, is created by taking advantage of humans' natural ability to detect inter-aural time differences. The 3-D sound application schedules sounds to reach one ear slightly earlier than the other, and the human brain uses that timing difference to figure out where the sound originated.
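To give a sense of the size of that timing difference, here is a minimal sketch that estimates it with Woodworth's spherical-head approximation, ITD = (a / c)(θ + sin θ), where a is the head radius, c is the speed of sound and θ is the source azimuth. This is a textbook model for sound arriving through the air, not a description of how SWAN generates its cues. The head radius, sample rate and function names are assumptions chosen for illustration.

```python
import math

HEAD_RADIUS_M = 0.0875    # assumed average head radius
SPEED_OF_SOUND = 343.0    # m/s in air at about 20 degrees C

def itd_seconds(azimuth_deg):
    """Woodworth estimate of the inter-aural time difference.

    azimuth_deg is clamped to [-90, 90]; positive means the source is
    to the right. A positive result means the right ear hears it first.
    """
    theta = math.radians(max(-90.0, min(90.0, azimuth_deg)))
    return (HEAD_RADIUS_M / SPEED_OF_SOUND) * (theta + math.sin(theta))

def ear_delays_samples(azimuth_deg, sample_rate=44100):
    """Return (left_delay, right_delay) in whole samples.

    The ear farther from the source is delayed; the nearer ear is not.
    """
    lag = round(abs(itd_seconds(azimuth_deg)) * sample_rate)
    return (lag, 0) if azimuth_deg > 0 else (0, lag)
```

Under these assumptions, a source directly to one side produces a difference of roughly 0.66 milliseconds, or about 29 samples at 44.1 kHz, which shows how small the offsets are.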

The 3-D audio beacons for navigation are unique to SWAN. Other navigation systems use speech cues such as “walk 100 yards and turn left,” which Walker feels are not user friendly.

“SWAN consists of two types of auditory displays – navigational beacons where the SWAN user walks directly toward the sound, and secondary sounds indicating nearby items of possible interest such as doors, benches and so forth,” says Walker. “We have learned that sound design matters. We have spent a lot of time researching which sounds are more effective, such as a beep or a sound burst, and which sounds provide information but do not interrupt users when they talk on their cell phone or listen to music.”

The researchers have also learned that SWAN would supplement other techniques that a blind person might already use for getting around, such as a guide dog or a cane to identify obstructions in the path.

Next Steps

The researchers' next step is to transition SWAN from outdoors-only to indoor-outdoor use. Since GPS does not work indoors, the computer vision system is being refined to bridge that gap. Also, the research team is currently revamping the SWAN applications to run on PDAs and cell phones, which will be more convenient and comfortable for users. The team plans to add an annotation feature so that a user can mark useful information to share with other users, such as nearby coffee shops, the location of a puddle after recent rains, and perhaps even the location of a park in the distance. There are plans to commercialize the SWAN technology after further refinement, testing and miniaturization of components for the consumer market.

Media Contact

More Information:

http://www.icpa.gatech.eduAll latest news from the category: Information Technology

Here you can find a summary of innovations in the fields of information and data processing and up-to-date developments on IT equipment and hardware.

This area covers topics such as IT services, IT architectures, IT management and telecommunications.

Newest articles

A universal framework for spatial biology

SpatialData is a freely accessible tool to unify and integrate data from different omics technologies accounting for spatial information, which can provide holistic insights into health and disease. Biological processes…

How complex biological processes arise

A $20 million grant from the U.S. National Science Foundation (NSF) will support the establishment and operation of the National Synthesis Center for Emergence in the Molecular and Cellular Sciences (NCEMS) at…

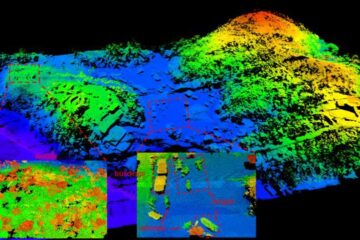

Airborne single-photon lidar system achieves high-resolution 3D imaging

Compact, low-power system opens doors for photon-efficient drone and satellite-based environmental monitoring and mapping. Researchers have developed a compact and lightweight single-photon airborne lidar system that can acquire high-resolution 3D…