Massive California fires consistent with climate change

“This is exactly what we’ve been projecting to happen, both in short-term fire forecasts for this year and the longer term patterns that can be linked to global climate change,” said Ronald Neilson, a professor at Oregon State University and bioclimatologist with the USDA Forest Service.

“You can’t look at one event such as this and say with certainty that it was caused by a changing climate,” said Neilson, who was also a contributor to publications of the Intergovernmental Panel on Climate Change, a co-recipient earlier this month of the 2007 Nobel Peace Prize.

“But things just like this are consistent with what the latest modeling shows,” Neilson said, “and may be another piece of evidence that climate change is a reality, one with serious effects.”

The latest models, Neilson said, suggest that parts of the United States may be experiencing longer-term precipitation patterns, with less year-to-year variability and instead several wet years in a row followed by several that are drier than normal.

“As the planet warms, more water is getting evaporated from the oceans and all that water has to come down somewhere as precipitation,” said Neilson. “That can lead, at times, to heavier vegetation loads popping up and creation of a tremendous fuel load. But the warmth and other climatic forces are also going to create periodic droughts. If you get an ignition source during these periods, the fires can just become explosive.”

The problems can be compounded, Neilson said, by El Niño or La Niña events. A La Niña episode that’s currently under way is probably amplifying the Southern California drought, he said. But when rains return for a period of years, the burned vegetation may inevitably re-grow to very dense levels.

“In the future, catastrophic fires such as those going on now in California may simply be a normal part of the landscape,” said Neilson.

Fire forecast models developed by Neilson’s research group at OSU and the Forest Service rely on several global climate models. When combined, they accurately predicted both the Southern California fires that are happening and the drought that has recently hit parts of the Southeast, including Georgia and Florida, causing crippling water shortages.

In studies released five years ago, Neilson and other OSU researchers predicted that the American West could become both warmer and wetter in the coming century, conditions that would lead to repeated, catastrophic fires larger than any in recent history.

At that time, the scientists suggested that periodic increases in precipitation, in combination with higher temperatures and rising carbon dioxide levels, would spur vegetation growth and add even further to existing fuel loads caused by decades of fire suppression.

Droughts or heat waves, the researchers said in 2002, would then lead to levels of wildfire larger than most observed since European settlement. The projections were based on various “general circulation” models that showed both global warming and precipitation increases during the 21st century.

More Information:

http://www.oregonstate.edu

This complex theme deals primarily with interactions between organisms and the environmental factors that impact them, but to a greater extent between individual inanimate environmental factors.

innovations-report offers informative reports and articles on topics such as climate protection, landscape conservation, ecological systems, wildlife and nature parks and ecosystem efficiency and balance.

Newest articles

A universal framework for spatial biology

SpatialData is a freely accessible tool to unify and integrate data from different omics technologies accounting for spatial information, which can provide holistic insights into health and disease. Biological processes…

How complex biological processes arise

A $20 million grant from the U.S. National Science Foundation (NSF) will support the establishment and operation of the National Synthesis Center for Emergence in the Molecular and Cellular Sciences (NCEMS) at…

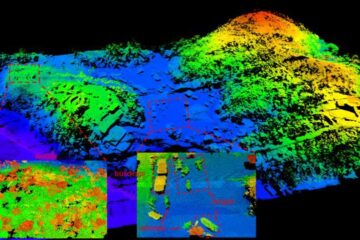

Airborne single-photon lidar system achieves high-resolution 3D imaging

Compact, low-power system opens doors for photon-efficient drone and satellite-based environmental monitoring and mapping. Researchers have developed a compact and lightweight single-photon airborne lidar system that can acquire high-resolution 3D…