Power plants are a major influence on regional mercury emissions

Xuhui Lee, professor of meteorology, and Jeffrey Sigler, a recent Yale Ph.D. and now a postdoctoral researcher at the University of New Hampshire, co-authored the Yale study “Recent Trends in Anthropogenic Mercury Emission in the Northeast United States.” They found that between 2000 and 2002 the emission rate of mercury decreased by 50 percent, but between 2002 and 2004 the rate increased between 50 and 75 percent. During that five-year period, overall emissions declined by 20 percent.
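The reported figures can look contradictory at first, because a 50 percent drop followed by a 50 to 75 percent rise does not return emissions to their starting level. The short sketch below compounds the two changes to show why a net decline is still expected. It assumes the percentages apply successively to the same emission rate, which the article does not state explicitly, and the function name `net_change` is illustrative only.

```python
def net_change(first_change, second_change):
    """Compound two successive fractional changes into one net fractional change."""
    return (1 + first_change) * (1 + second_change) - 1


# Assumption: a 50% drop (2000-2002) followed by a 50% to 75% rise (2002-2004),
# both applied to the same emission rate.
low = net_change(-0.50, 0.50)   # rise at the low end of the reported range
high = net_change(-0.50, 0.75)  # rise at the high end of the reported range

print(f"Net change: {low:.1%} to {high:.1%}")
```

Under that assumption the net change falls between a 25 percent and a 12.5 percent decline, a range that brackets the 20 percent overall decline the study reports.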

The dramatic annual changes in mercury emissions, the study's authors say, cannot be explained climatologically by air flow patterns that would bring either clean or polluted air into the region.

Mild winters and a corresponding decrease in the need for regional power plants to burn coal could partially explain the decline in mercury emissions, according to the authors. The study, published this summer in the Journal of Geophysical Research-Atmospheres, estimates that power plants account for up to 40 percent of total emissions in New Jersey, New York, Pennsylvania and New England.

“The study highlights just how important power plants are in influencing regional mercury emission,” said Sigler. “We should not forget other source categories when formulating abatement policies, since they also contribute significant amounts to the total emissions,” Lee added.

Mercury, which converts to highly toxic methyl mercury in ground water, is found in fish. It can cause neurological problems in developing fetuses, and dementia and organ failure in adults who eat large amounts of fish over long periods.

The Yale study was conducted at Great Mountain Forest in northwestern Connecticut. The measurements were restricted to wintertime so that data on carbon dioxide, which comes from the same combustion sources as mercury, would not be distorted by photosynthesis. The researchers used carbon dioxide to trace mercury back to its sources with a method called “tracer analysis.”

“To our knowledge, using the carbon dioxide to trace mercury over a long time period hasn't been done before,” said the authors. “We started with actual mercury that's in the atmosphere, worked back to sources that emit it, then calculated the emission rate.”

The U.S. Environmental Protection Agency, which does not regulate mercury emissions, determines the mercury emission rate by taking an inventory of existing sources. “Although the EPA's approach is highly useful, it requires accurate measurements of mercury emitted from the smokestack per ton of fuel burned,” said Sigler. “These data are hard to come by. Our top-down technique circumvents those rather cumbersome problems and allows for much more timely estimates of mercury emission. It's difficult to get annual changes in the emission rate with the inventory approach.”

More Information:

http://www.yale.edu

Earth Sciences (also referred to as Geosciences), which deals with basic issues surrounding our planet, plays a vital role in the area of energy and raw materials supply.

Earth Sciences comprises subjects such as geology, geography, geological informatics, paleontology, mineralogy, petrography, crystallography, geophysics, geodesy, glaciology, cartography, photogrammetry, meteorology and seismology, early-warning systems, earthquake research and polar research.

Newest articles

A universal framework for spatial biology

SpatialData is a freely accessible tool to unify and integrate data from different omics technologies accounting for spatial information, which can provide holistic insights into health and disease. Biological processes…

How complex biological processes arise

A $20 million grant from the U.S. National Science Foundation (NSF) will support the establishment and operation of the National Synthesis Center for Emergence in the Molecular and Cellular Sciences (NCEMS) at…

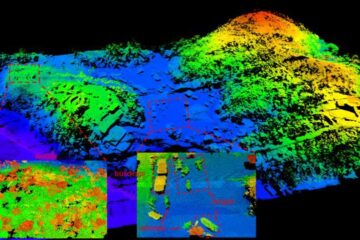

Airborne single-photon lidar system achieves high-resolution 3D imaging

Compact, low-power system opens doors for photon-efficient drone and satellite-based environmental monitoring and mapping. Researchers have developed a compact and lightweight single-photon airborne lidar system that can acquire high-resolution 3D…