Human Influence on the 21st Century Climate: One Possible Future for the Atmosphere

New computer modeling work shows that by 2100, if society wants to limit carbon dioxide in the atmosphere to less than 40 percent higher than it is today, the lowest cost option is to use every available means of reducing emissions. This includes more nuclear and renewable energy, choosing electricity over fossil fuels, reducing emissions through technologies that capture and store carbon dioxide, and even using forests to store carbon.

Researchers from the Joint Global Change Research Institute introduced the work, called the RCP 4.5 scenario, in a special July 29 online issue of the journal Climatic Change. The scenario is one of four that scientists will use worldwide to independently study how the climate might respond to different increases of greenhouse gases and how much of the sun's energy they trap in the atmosphere. It can also be used to study possible ways to slow climate change and adapt to it.

The team used the PNNL Global Change Assessment Model, or GCAM, to generate the scenario. GCAM uses market forces to reach a specified target by allowing global economics to put a price on carbon. And unlike similar models, it includes carbon stored in forests, causing forest acreage to increase — even as energy systems change to include fuels generated from bioenergy crops and crop waste.

“The RCP 4.5 scenario assumes that action will be taken to limit emissions. Without any action, the emissions, and the heat trapped in the atmosphere, would be much higher, leading to more severe climate change,” said lead author Allison Thomson, a scientist at JGCRI, a collaboration between the Department of Energy's Pacific Northwest National Laboratory in Richland, Wash., and the University of Maryland.

“This scenario and the other three produced in this project will provide a common thread for climate change research across many different science communities,” Thomson said.

The Forested Future

Five years ago, the Intergovernmental Panel on Climate Change asked the climate science community to provide scenarios of greenhouse gas emissions and land use change to guide computer models that simulate potential changes to the Earth's climate.

Researchers decided on four possible targets that span a wide range of possible levels of man-made greenhouse gas emissions over the next century. These future scenarios are currently being used by climate modeling groups worldwide in a coordinated effort to compare models and advance the science of climate projections.

The researchers assigned each of the scenarios a specific target amount of the sun's energy that gets trapped in the atmosphere, a property called radiative forcing. Because of differences between the scenarios, each one will produce slightly different degrees of warming.

The RCP 4.5 scenario aims for a radiative forcing of 4.5 watts per square meter in 2100 and lets economics reveal the cheapest way to achieve that goal. The scenario's 4.5 W/m2 corresponds to roughly 525 parts per million carbon dioxide in the atmosphere (currently, it hovers around 390 parts per million). It also corresponds to approximately 650 parts per million carbon dioxide-equivalents, a measure that includes greenhouse gases besides carbon dioxide.
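The link between these concentrations and the 4.5 W/m2 target can be checked with a rough calculation. The sketch below is not taken from the RCP 4.5 paper or from GCAM. It assumes the widely used simplified logarithmic expression for carbon dioxide forcing, with a coefficient of 5.35 W/m2 and a pre-industrial baseline of about 278 parts per million. The function names are illustrative only.

```python
import math

# Assumptions (not stated in the article): the common simplified expression
# dF = 5.35 * ln(C / C0) and a pre-industrial baseline of about 278 ppm.
FORCING_COEFF_W_M2 = 5.35
PREINDUSTRIAL_PPM = 278.0


def radiative_forcing(ppm, baseline_ppm=PREINDUSTRIAL_PPM):
    """Approximate forcing in W/m2 for a CO2 or CO2-equivalent concentration."""
    return FORCING_COEFF_W_M2 * math.log(ppm / baseline_ppm)


def percent_above(target_ppm, reference_ppm):
    """Percentage by which target_ppm exceeds reference_ppm."""
    return 100.0 * (target_ppm / reference_ppm - 1.0)


# Figures quoted in the article: 525 ppm CO2, 650 ppm CO2-equivalent, 390 ppm today.
co2_only = radiative_forcing(525.0)
co2_equiv = radiative_forcing(650.0)
rise = percent_above(525.0, 390.0)

print(f"CO2 alone:      {co2_only:.1f} W/m2")
print(f"CO2-equivalent: {co2_equiv:.1f} W/m2")
print(f"525 ppm is {rise:.0f} percent above 390 ppm")
```

Under these assumptions, carbon dioxide alone accounts for somewhat less than the full target, and the carbon dioxide-equivalent figure lands close to 4.5 W/m2. That is consistent with the other greenhouse gases making up the difference.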

Unlike the other three scenarios, RCP 4.5 includes carbon in forests in the carbon market. This affects how people use land, as cutting down forests emits carbon dioxide but expanding forests stores it. An earlier modeling study showed that without placing such a value on forest carbon, forests could get cut down for use as biofuels and the land on which they stood used for crops.

The Greenhouse Race

Starting with the world as it looked in 2005 and setting the endpoint at 2100, the team let the model simulate the greenhouse gas emissions and land use change over the next century. They also ran the model without any explicit greenhouse gas control policy or carbon price to compare how such a future might turn out.

Without any emission controls, carbon dioxide concentrations in the atmosphere doubled by 2100. By design, RCP 4.5 limits them to about 35 percent higher than 2005 levels.

The conditions to limit emissions did not specify how to go about doing that, only that carbon from all sources had economic value. Under limiting conditions, carbon dioxide prices rose steadily until they reached $85 per ton of carbon dioxide by 2100, in 2005 dollars.

In the scenario, the price of carbon stimulated a rise in nuclear power and renewable energy use. Also, it became cheaper to implement technologies that capture and store emissions from fossil- and bio-fuel based electricity than to emit carbon dioxide. Buildings and industry became more energy efficient and used cleaner electricity for their energy needs.

Additionally, carbon dioxide emissions from man-made sources peaked around 2040 at 42 gigatons per year (currently, emissions are at 30 gigatons per year), decreased at about the same rate as they rose, then leveled out after 2080 at around 15 gigatons per year.

Resolving Power

The team also converted the results of the scenario to match the resolution of the climate models that use them, so scientists can more easily integrate RCP 4.5 with those models. Economies, for example, operate on national scales, but chemical reactions of gases in the air occur in much smaller spaces.

This change in scale to accommodate climate models reveals important regional details. For example, although globally methane emissions change little over the century, their geographic origins shift around. As the century wears on, South America and Africa put out more methane and the industrialized nations less.

In addition, the percentage of people's income that they're spending on food goes down even though food prices rise. The researchers attribute this result to a shift from agricultural practices with high carbon footprints to lower ones, as shown in previous work.

While introducing this scenario to climate researchers, the PNNL researchers provide comparisons to other scenarios with similar emissions limits, as well as to scenarios of the other three radiative forcing targets covered by this community activity. The special issue of Climatic Change features papers documenting those other three scenarios as well as several papers reviewing specific parts of the entire scenario exercise.

“It's very important that the climate community has this resource so that they all work from the same data. This common thread will help researchers and policymakers address the problems that climate change will bring us,” said Thomson.

Data and results from RCP 4.5 studies are Open Access and are available from JGCRI's website here: http://www.globalchange.umd.edu/gcamrcp/

Reference: Allison M. Thomson, Katherine V. Calvin, Steven J. Smith, G. Page Kyle, April Volke, Pralit Patel, Sabrina Delgado-Arias, Ben Bond-Lamberty, Marshall A. Wise, Leon E. Clarke and James A. Edmonds, RCP 4.5: A Pathway for Stabilization of Radiative Forcing by 2100, Climatic Change, July 29, 2011, DOI 10.1007/s10584-011-0151-4 (http://www.springerlink.com/content/70114wmj1j12j4h2/).

This work was supported by the U.S. Department of Energy Office of Science.

Pacific Northwest National Laboratory is a Department of Energy Office of Science national laboratory where interdisciplinary teams advance science and technology and deliver solutions to America's most intractable problems in energy, the environment and national security. PNNL employs 4,900 staff, has an annual budget of nearly $1.1 billion, and has been managed by Ohio-based Battelle since the lab's inception in 1965. Follow PNNL on Facebook, LinkedIn and Twitter.

The Joint Global Change Research Institute is a unique partnership formed in 2001 between the Department of Energy's Pacific Northwest National Laboratory and the University of Maryland. The PNNL staff associated with the center are world renowned for expertise in energy conservation and understanding of the interactions between climate, energy production and use, economic activity and the environment.

More Information: http://www.pnnl.gov

Earth Sciences (also referred to as Geosciences), which deals with basic issues surrounding our planet, plays a vital role in the area of energy and raw materials supply.

Earth Sciences comprises subjects such as geology, geography, geological informatics, paleontology, mineralogy, petrography, crystallography, geophysics, geodesy, glaciology, cartography, photogrammetry, meteorology and seismology, early-warning systems, earthquake research and polar research.

Newest articles

A universal framework for spatial biology

SpatialData is a freely accessible tool to unify and integrate data from different omics technologies accounting for spatial information, which can provide holistic insights into health and disease. Biological processes…

How complex biological processes arise

A $20 million grant from the U.S. National Science Foundation (NSF) will support the establishment and operation of the National Synthesis Center for Emergence in the Molecular and Cellular Sciences (NCEMS) at…

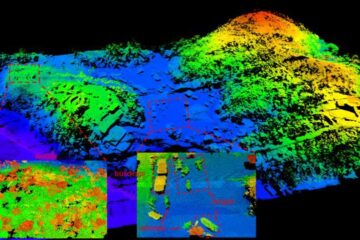

Airborne single-photon lidar system achieves high-resolution 3D imaging

Compact, low-power system opens doors for photon-efficient drone and satellite-based environmental monitoring and mapping. Researchers have developed a compact and lightweight single-photon airborne lidar system that can acquire high-resolution 3D…