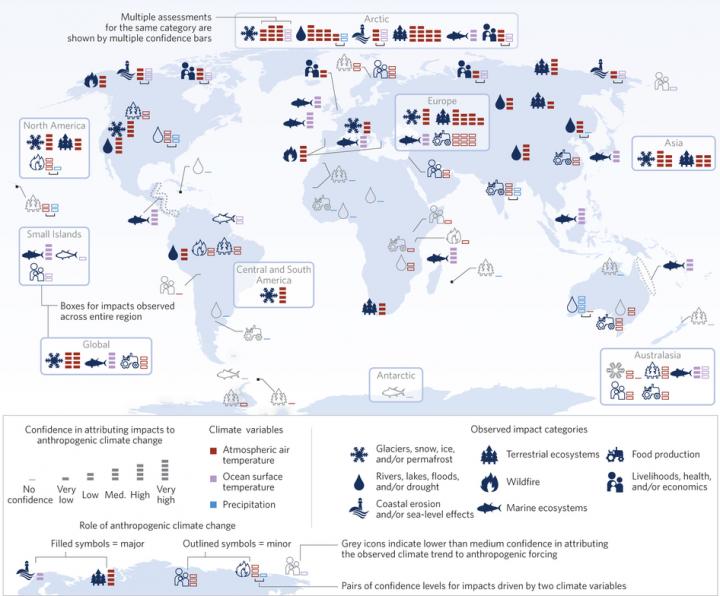

Assessing the impact of human-induced climate change

This image shows confidence in attributing observed impacts to regional climate trends, irrespective of the cause for those climate trends. Blue symbols indicate impacts where the observed climate trend has been attributed to anthropogenic forcing with at least medium confidence in a major or minor role. The confidence bars indicate the combined confidence of the impact and climate attribution step, so confidence can be lower than medium for icons in color as a result of low confidence in impact attribution. The respective climate driver is indicated by the color of the confidence bars (red, atmospheric air temperature; violet, ocean surface temperature; blue, precipitation). Impacts corresponding to regional climate trends with no, very low or low confidence in attribution to anthropogenic forcing are shown in grey. A low confidence in climate attribution results mainly from lack of monitoring, lack of a clear precipitation response, and inconsistency between the direction of reported trends and trends documented in global observational products over the default period. Credit: Gerrit Hansen, Potsdam Institute for Climate Impact Research

The past century has seen a 0.8°C (1.4°F) increase in average global temperature, and according to the Intergovernmental Panel on Climate Change (IPCC), the overwhelming source of this increase has been emissions of greenhouse gases and other pollutants from human activities.

Scientists have also observed that many of Earth's glaciers, ecosystems and other systems are already being impacted by rising regional temperatures and altered rainfall amounts and patterns.

What remains unclear is precisely what fraction of the observed changes in these climate-sensitive systems can confidently be attributed to human-related influences, rather than mere natural regional fluctuations in climate.

So Gerrit Hansen of the Potsdam Institute for Climate Impact Research in Germany and Dáithí Stone of Lawrence Berkeley National Laboratory (Berkeley Lab) developed and applied a novel methodology for answering this challenging question. Their work was published in Nature Climate Change on December 21, 2015.

Their computer modeling-based study focused on regional impacts around the world identified in the last IPCC report, such as melting glaciers, snow and ice in Europe, changes in terrestrial ecosystems in Asia, and wildfires in Alaska.

The IPCC report listed more than 100 such impacts of various kinds across the globe. The Hansen-Stone study focused on the regional climate trends relevant to these impacts over the 40-year period from 1971 to 2010.

The study's algorithm applied three distinct types of tests. First, it assessed the adequacy of the available climate data (the so-called observational record) for the particular regional impact over the 40-year period: were the data sufficient to provide a basis for understanding what had actually been taking place?

Next, the algorithm determined whether the climate models the researchers used resolved regional climate in enough detail to be considered an appropriate source of information. Finally, the researchers compared collections of model simulations, with and without human emissions factored in, against observed trends to understand to what degree human emissions were responsible for a given impact.
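The third test can be illustrated with a minimal sketch. It is not the researchers' algorithm: the helper names (`linear_trend`, `within_range`), the simple range check and all the numbers below are assumptions chosen for illustration, and the series are synthetic rather than real observations or model output. The sketch fits a linear trend over 1971 to 2010 and asks whether the observed trend falls inside the spread of simulations run with and without human emissions.

```python
from statistics import mean


def linear_trend(years, values):
    """Ordinary least-squares slope of values against years (units per year)."""
    y_bar, v_bar = mean(years), mean(values)
    num = sum((y - y_bar) * (v - v_bar) for y, v in zip(years, values))
    den = sum((y - y_bar) ** 2 for y in years)
    return num / den


def within_range(obs_trend, ensemble_trends):
    """True if the observed trend lies inside the spread of simulated trends.

    A deliberately simple, hypothetical consistency check.
    """
    return min(ensemble_trends) <= obs_trend <= max(ensemble_trends)


years = list(range(1971, 2011))  # the 40-year period named in the article

# Synthetic, illustrative series only (NOT real observations or model output).
observed = [0.02 * (y - 1971) for y in years]
forced_runs = [[s * (y - 1971) for y in years] for s in (0.015, 0.020, 0.025)]
natural_runs = [[s * (y - 1971) for y in years] for s in (-0.005, 0.000, 0.005)]

obs = linear_trend(years, observed)
forced = [linear_trend(years, run) for run in forced_runs]
natural = [linear_trend(years, run) for run in natural_runs]

# Compare the observed trend with each collection of simulations.
consistent_with_forced = within_range(obs, forced)
consistent_with_natural = within_range(obs, natural)
```

With these made-up numbers the observed trend sits inside the spread of the runs that include human emissions and outside the spread of the runs that do not, which is the kind of pattern that would support attribution to human influence.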

The result of each test of data set quality or of observation-simulation agreement was expressed as a numerical score, and then these scores were merged into an overall measure of confidence in the hypothesis that human-generated emissions have affected the regional climate, ranging from “none” to “very high”.
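As a rough sketch of how such scores might be merged, the example below combines three test scores in two different ways and maps each result to a confidence label. Everything specific here is an assumption made for illustration: the 0-to-1 score scale, the two combination rules (`combine_min`, `combine_geometric`), the equal-width bins in `to_level` and the example scores. The article does not say which rules or thresholds the researchers used.

```python
import math

# Labels run from "none" to "very high", as in the article; the intermediate
# levels and the bin edges used below are assumptions.
LEVELS = ["none", "very low", "low", "medium", "high", "very high"]


def combine_min(scores):
    """Weakest-link rule: overall score is the lowest individual score."""
    return min(scores)


def combine_geometric(scores):
    """Geometric mean of the individual scores."""
    return math.prod(scores) ** (1 / len(scores))


def to_level(score):
    """Map a score in [0, 1] to a label using equal-width bins (hypothetical)."""
    if score <= 0:
        return LEVELS[0]
    index = min(int(score * 5) + 1, 5)
    return LEVELS[index]


# Hypothetical scores for: data adequacy, model resolution, trend agreement.
scores = [0.9, 0.7, 0.75]

level_min = to_level(combine_min(scores))
level_geo = to_level(combine_geometric(scores))
```

For these example scores both rules land on the same label, a small-scale version of checking that the conclusion does not depend on the combination method.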

“There are many ways we could combine the scores,” says Stone, “but we found that it didn't matter which plausible method we used: the results all pointed to the same conclusions.”

Their analysis revealed that almost two-thirds of the listed impacts related specifically to the warming over land and near the surface of the ocean could confidently be attributed to human-generated emissions. However, the researchers could not find the same kind of link for trends in precipitation.

According to Stone, cases where the link between human-generated greenhouse gas emissions and local warming trends was weak often arose because the climate observational record in those regions was insufficient to build a clear picture of what has been happening over the past several decades.

“Previous analyses linking observed impacts to climate change have been generic in nature, addressing whether there is an influence of human-related warming on impacts globally, without an inference to individual impacts,” says Hansen. “Our analysis is the first to bridge these gaps for a large range of impacts, by assessing the role of human-related emissions in each impact individually, including impacts related to trends in precipitation and sea ice.”

“Studies linking emissions to climate change impacts provide the most stringent test available for evaluating the accuracy and confidence of our projections of impacts in a future warmer world,” says Wolfgang Cramer, Director of the Mediterranean Institute for Marine and Terrestrial Biodiversity and Ecology in Aix-en-Provence, France.

“With these tests, we can be much more confident in our calculations of how a 4°C world will differ from a 1.5°C world. It is crucial that we continue to develop and maintain observational efforts around the world in order to continue documenting how the world is responding to our greenhouse emissions, as well as to agreed reductions in those emissions.”

###

Stone and Hansen's work was partially supported by the Department of Energy's Office of Science and the German Ministry of Education and Research.

Lawrence Berkeley National Laboratory addresses the world's most urgent scientific challenges by advancing sustainable energy, protecting human health, creating new materials, and revealing the origin and fate of the universe. Founded in 1931, Berkeley Lab's scientific expertise has been recognized with 13 Nobel prizes. The University of California manages Berkeley Lab for the U.S. Department of Energy's Office of Science. For more, visit http://www.

DOE's Office of Science is the single largest supporter of basic research in the physical sciences in the United States, and is working to address some of the most pressing challenges of our time. For more information, please visit the Office of Science website at science.energy.gov/.
