Carnegie Mellon University-led team conducts most detailed cosmological simulation to date

By incorporating the physics of black holes into a highly sophisticated model running on a powerful supercomputing system, an international team of scientists has produced an unprecedented simulation of cosmic evolution that verifies and deepens our understanding of relationships between black holes and the galaxies in which they reside.

Called BHCosmo, the simulation shows that black holes are integral to the structure of the cosmos and may help guide users of future telescopes, showing them what to look for as they aim to locate the earliest cosmic events and untangle the history of the universe. The research team is led by Carnegie Mellon University and includes scientists from the Harvard-Smithsonian Center for Astrophysics and the Max Planck Institute for Astrophysics in Germany. The research is in press with The Astrophysical Journal (http://lanl.arxiv.org/abs/0705.2269).

“Ours is the first simulation to incorporate black hole physics,” said Tiziana Di Matteo, a theoretical cosmologist and associate professor of physics at the Mellon College of Science at Carnegie Mellon. “It is very computationally challenging, involving more calculations than any prior similar modeling of the cosmos, and the result offers us the best picture to date of how the cosmos formed.”

Di Matteo performed her simulation using the Cray XT3 system at the Pittsburgh Supercomputing Center (PSC), the most powerful “tightly coupled” system available via the National Science Foundation’s TeraGrid. Di Matteo’s collaborators included Jörg Colberg at Carnegie Mellon, Volker Springel and Debora Sijacki at the Max Planck Institute for Astrophysics, and Lars Hernquist at Harvard.

Observations reveal that black holes are important regulators of galaxy formation and, ultimately, of the structure of today’s universe, according to Di Matteo. Nevertheless, previous simulations did not include black holes because the computing demands were prohibitive.

“Including black holes in computer simulations is critical. The galaxies we see today look the way they do because of black hole physics,” added Springel, a junior research group leader at the Max Planck Institute for Astrophysics. “We must do simulations to understand the role black holes have played in forming structures of both the early universe and today.”

The largest black holes, called supermassive black holes, lie at the centers of most large galaxies. They may begin as the remnants of the first stars, which collapsed under their own gravity. Surrounded by dense gas at the galactic center, they consume nearby material, both gas and stars, and grow rapidly to enormous sizes, some reaching masses a billion times that of our sun. But evidence suggests that supermassive black holes are self-regulating: they don’t feast forever and they never swallow a whole galaxy, Di Matteo said.

In her cosmic simulation, as in reality, galaxies routinely collide. The supermassive black holes embedded at the centers of these galaxies help shape how each collision unfolds. The result is a tremendous belch of energy as the merging black holes feed on infalling gas and flare into a luminous phase called a quasar. “Quasar formation really captures when the fun happens in a galaxy,” Di Matteo said. “You can only use a simulation to follow a complex, nonlinear history like this to understand how quasars and other cosmic structures come about.”

Di Matteo’s simulation spanned a wide range of time and spatial scales, covering a region up to 100 million light-years across. It would have been impossible without a powerful supercomputer. “The XT3 is ideal for this simulation because it has incredibly fast built-in communication,” she noted.

Di Matteo set up the simulation’s initial conditions to reflect the observed cosmic microwave background radiation, the relic glow left over from the early universe. She then seeded the simulation with a quarter of a billion particles representing ordinary, measurable matter. Di Matteo represented chunks of matter such as cooling gas as fluid spheres, which allowed the investigators to calculate all the physical forces acting on each chunk. She also factored in the gravity exerted by dark matter, the unseen material that makes up most of the matter in the universe. Additionally, her calculations accounted for the physical processes associated with cooling gas, growing black holes and exploding stars.
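The “fluid spheres” above describe a particle-based treatment of gas. The article does not name the method; a common choice in codes of this kind is smoothed particle hydrodynamics (SPH), in which each gas particle’s mass is smeared over a small sphere whose size is set by a smoothing length h. The minimal Python sketch below assumes SPH with the standard cubic spline kernel and a single fixed h (both are assumptions for illustration, not details reported for this study) and estimates the gas density at each particle by summing its neighbors’ kernel-weighted masses. It uses a brute-force O(N²) loop for clarity; production codes find neighbors with a tree or grid.

```python
import math


def cubic_spline_kernel(r, h):
    """Standard 3D cubic spline SPH kernel (assumed choice); zero beyond r = 2h."""
    q = r / h
    sigma = 1.0 / (math.pi * h ** 3)  # normalization so the kernel integrates to 1
    if q < 1.0:
        return sigma * (1.0 - 1.5 * q ** 2 + 0.75 * q ** 3)
    if q < 2.0:
        return sigma * 0.25 * (2.0 - q) ** 3
    return 0.0


def sph_density(positions, masses, h):
    """Density at each particle: kernel-weighted sum of all particle masses (O(N^2))."""
    densities = []
    for xi in positions:
        rho = 0.0
        for xj, mj in zip(positions, masses):
            rho += mj * cubic_spline_kernel(math.dist(xi, xj), h)
        densities.append(rho)
    return densities
```

For a lone particle of mass 1 with h = 1, the estimate reduces to the kernel’s central value, 1/π ≈ 0.318. The kernel is normalized so that it integrates to 1 over its sphere of support (radius 2h), which keeps the density estimate consistent with the particle masses.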

To make the computation possible, the scientists used 2,000 processors (the whole system) of the parallel Cray XT3 for four weeks of computing time. Even with this vast computing power, special techniques were needed just to compute all the gravitational forces involved. For example, the team built a “tree” in which nearby cosmic particles sit in the same “branch” and nearby branches are linked. By computing the pull of each distant branch as a whole, rather than particle by particle, the tree structure reduced the number of calculations required by a factor of a few million, to something manageable.
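The “tree” described above is the idea behind hierarchical tree codes for gravity. The article does not specify the team’s algorithm, so the following is only a minimal Barnes-Hut-style sketch under stated assumptions: a 3D octree over distinct particles in a unit cube, monopole (center-of-mass) approximations only, a gravitational constant G = 1 in code units, a hypothetical softening length `SOFTENING`, and an opening parameter `theta`. Each cubic cell plays the role of a “branch”; when a cell looks small from a particle’s point of view (cell size below `theta` times its distance), its whole contents are treated as a single point mass. A direct O(N²) sum is included as a reference.

```python
import math
import random

G = 1.0           # gravitational constant in code units (assumption)
SOFTENING = 1e-3  # hypothetical softening length that avoids infinite forces


class Node:
    """One cubic cell ("branch") of an octree holding nearby particles."""

    def __init__(self, center, half_size):
        self.center = center        # geometric center of the cell
        self.half_size = half_size  # half of the cell's edge length
        self.mass = 0.0             # total mass of particles inside
        self.com = [0.0, 0.0, 0.0]  # their center of mass
        self.children = None        # eight sub-cells once the cell is split
        self.particle = None        # index of the lone particle in a leaf

    def octant(self, pos):
        # bit k is set when the particle lies on the + side along axis k
        return sum(1 << k for k in range(3) if pos[k] > self.center[k])

    def subdivide(self):
        h = self.half_size / 2
        self.children = [
            Node(tuple(self.center[k] + (h if (i >> k) & 1 else -h) for k in range(3)), h)
            for i in range(8)
        ]

    def insert(self, idx, positions, masses):
        pos, m = positions[idx], masses[idx]
        if self.mass == 0.0 and self.children is None:
            self.particle = idx  # empty leaf: store the particle here
        else:
            if self.children is None:
                # occupied leaf: split it and push the resident particle down
                self.subdivide()
                old, self.particle = self.particle, None
                self.children[self.octant(positions[old])].insert(old, positions, masses)
            self.children[self.octant(pos)].insert(idx, positions, masses)
        # keep the cell's total mass and center of mass up to date
        total = self.mass + m
        self.com = [(self.com[k] * self.mass + pos[k] * m) / total for k in range(3)]
        self.mass = total


def build_tree(positions, masses):
    root = Node((0.5, 0.5, 0.5), 0.5)  # assumes distinct particles inside the unit cube
    for i in range(len(positions)):
        root.insert(i, positions, masses)
    return root


def point_accel(target, source, m):
    """Softened acceleration at `target` due to a point mass m at `source`."""
    d = [source[k] - target[k] for k in range(3)]
    r2 = sum(c * c for c in d) + SOFTENING ** 2
    f = G * m / (r2 * math.sqrt(r2))
    return [f * c for c in d]


def accel_tree(node, idx, positions, theta=0.5):
    """Acceleration on particle idx, treating far-away branches as single point masses."""
    if node.mass == 0.0 or node.particle == idx:
        return [0.0, 0.0, 0.0]  # empty cell, or the particle itself
    pos = positions[idx]
    dist = math.dist(pos, node.com)
    if node.children is None or 2 * node.half_size < theta * dist:
        return point_accel(pos, node.com, node.mass)  # leaf, or branch far enough away
    acc = [0.0, 0.0, 0.0]
    for child in node.children:
        a = accel_tree(child, idx, positions, theta)
        acc = [acc[k] + a[k] for k in range(3)]
    return acc


def accel_direct(idx, positions, masses):
    """Exact O(N^2) reference: add up the pull of every other particle."""
    acc = [0.0, 0.0, 0.0]
    for j, (p, m) in enumerate(zip(positions, masses)):
        if j != idx:
            a = point_accel(positions[idx], p, m)
            acc = [acc[k] + a[k] for k in range(3)]
    return acc
```

Setting `theta = 0` opens every cell and reproduces the direct sum up to rounding; larger values trade accuracy for speed. As a rough scaling estimate for the quarter of a billion particles mentioned earlier, a direct sum needs about N²/2 ≈ 3.1 × 10¹⁶ pair interactions per force evaluation, while a tree needs on the order of N log₂ N ≈ 7 × 10⁹, a ratio of roughly 4.5 million. This ignores constant factors, but it is consistent with the “factor of a few million” quoted above.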

The results were impressive. Di Matteo’s simulation allows scientists to follow the collapse of galaxies in detail. It also resolves spatial scales ranging from structures inside a single galaxy to the filaments that galaxies inhabit, which are tens of millions of light-years long. “We believe that our work has profound implications for cosmology,” Di Matteo said. “We have found that the most massive black holes early on are not the most massive black holes we see today, so simulating the dynamic evolution of these structures is critical to understanding cosmic history. I want us to be bold enough to model the whole universe to scales observed with the Sloan Digital Sky Survey (SDSS).” The SDSS is the largest optical survey of the sky and has catalogued nearly 100 million galaxies to date.

“With our simulations, we can predict what next-generation telescopes should see as they peer back 13 billion years to the time just after the Big Bang. We should be able to answer whether we are getting the universe right with our simulations and how it evolves as we go back in time,” Di Matteo said. Computing constraints currently limit how far this work can go, but Di Matteo expects to run her next simulations on more powerful computers. She is also working with faculty from Carnegie Mellon’s School of Computer Science to develop faster ways of combining the physics of the very large with the very small in the same calculations, using a set of tools called dynamic meshing.

More Information:

http://www.psc.eduAll latest news from the category: Physics and Astronomy

This area deals with the fundamental laws and building blocks of nature and how they interact, the properties and the behavior of matter, and research into space and time and their structures.

innovations-report provides in-depth reports and articles on subjects such as astrophysics, laser technologies, nuclear, quantum, particle and solid-state physics, nanotechnologies, planetary research and findings (Mars, Venus) and developments related to the Hubble Telescope.

Newest articles

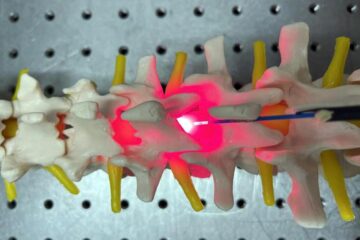

Red light therapy for repairing spinal cord injury passes milestone

Patients with spinal cord injury (SCI) could benefit from a future treatment to repair nerve connections using red and near-infrared light. The method, invented by scientists at the University of…

Insect research is revolutionized by technology

New technologies can revolutionise insect research and environmental monitoring. By using DNA, images, sounds and flight patterns analysed by AI, it’s possible to gain new insights into the world of…

X-ray satellite XMM-newton sees ‘space clover’ in a new light

Astronomers have discovered enormous circular radio features of unknown origin around some galaxies. Now, new observations of one dubbed the Cloverleaf suggest it was created by clashing groups of galaxies….