Gene Analysis & Biostatistics – 3 Is Better Than 2 But Not Than 4

This finding from a current project by the Austrian Science Fund FWF was presented at the 5th International Conference on Multiple Comparison Procedures (MCP2007) in Vienna, which recently drew to a close. The conference, held at the Medical University, focused on the increasingly important issue of boosting the efficiency of medical studies in statistical terms.

30,000 – this is the number of genes that can be analysed simultaneously with state-of-the-art instruments. Such analyses provide a means of identifying whether individual genes have a decisive impact in the course of a disease or therapy. However, the more genes that are examined in a study, the greater the probability of incorrectly identifying a gene as a factor when, in reality, it has no influence.
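The scale of this problem can be illustrated with a little arithmetic. The sketch below is not taken from the project itself: it assumes that every gene is tested independently at the same significance level, and the value 0.05 is only a conventional example. The function names are hypothetical.

```python
def expected_false_positives(num_genes: int, alpha: float) -> float:
    """Expected number of genes flagged by chance alone when no gene
    has a real effect and each is tested at significance level alpha."""
    return num_genes * alpha


def prob_at_least_one_false_positive(num_genes: int, alpha: float) -> float:
    """Probability of at least one chance finding, assuming the tests
    are independent (an illustrative simplification)."""
    return 1.0 - (1.0 - alpha) ** num_genes


if __name__ == "__main__":
    # 30,000 genes is the figure from the text; alpha = 0.05 is assumed.
    for genes in (1, 10, 100, 30_000):
        print(
            genes,
            expected_false_positives(genes, 0.05),
            prob_at_least_one_false_positive(genes, 0.05),
        )
```

Under these assumptions, the chance of at least one false finding rises quickly with the number of genes, which is why a single uncorrected analysis of tens of thousands of genes is unreliable.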

MORE CONCENTRATION – FEWER ERRORS

Dr. Sonja Zehetmayer from the Department of Medical Statistics, Medical University of Vienna, says: “The problem of identifying factors incorrectly could be countered by a very high number of repetitions. However, repetitions normally need to be kept to a minimum, owing to high costs. A more innovative approach to solving this problem is offered by multi-stage methods. These involve preselecting genes following the first examination stage. In subsequent stages, only these selected genes are subjected to further analysis. Concentrating on fewer genes thereby cuts the error probability.”
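The preselection idea can be sketched as a small simulation. This is a simplified illustration under stated assumptions, not the method used by Dr. Zehetmayer's group: it assumes that no gene has a real effect (so p-values are uniformly distributed), that the second stage uses fresh, independent data, and that the final decision rests on the second stage alone. The thresholds and function names are hypothetical.

```python
import random


def simulate_single_stage(num_genes: int, alpha: float, seed: int = 0) -> int:
    """Count chance findings when every gene is tested once at level alpha.
    With no real effects, each p-value is uniform on [0, 1]."""
    rng = random.Random(seed)
    return sum(rng.random() < alpha for _ in range(num_genes))


def simulate_two_stage(
    num_genes: int, alpha_select: float, alpha_final: float, seed: int = 0
) -> tuple[int, int]:
    """Return (genes carried into stage 2, false positives after stage 2)."""
    rng = random.Random(seed)
    # Stage 1: screen all genes and keep those below the selection threshold.
    selected = [g for g in range(num_genes) if rng.random() < alpha_select]
    # Stage 2: retest only the selected genes on new, independent data.
    false_positives = [g for g in selected if rng.random() < alpha_final]
    return len(selected), len(false_positives)


if __name__ == "__main__":
    single = simulate_single_stage(30_000, alpha=0.05, seed=1)
    carried, final = simulate_two_stage(30_000, 0.10, 0.05, seed=1)
    print("single stage false positives:", single)
    print("two stage: carried forward", carried, "false positives", final)
```

In this toy setting the second stage examines only a fraction of the genes, so far fewer chance findings survive than in a single analysis of all genes at the same final threshold.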

The question of exactly how many stages are needed to deliver an optimal cost-benefit ratio has until now remained unresolved. The answer has now been calculated and published by Dr. Zehetmayer and her colleagues, and was discussed at the MCP2007 held in Vienna from 8 to 11 July. The solution turned out to be unexpectedly straightforward – three stages deliver the optimal ratio between the accuracy of the results and the costs required to obtain them. Although a fourth stage would offer greater accuracy, the resources it would require are out of all proportion to the additional accuracy gained.

TEST DESIGN

Dr. Zehetmayer also found surprising results when she compared two different test designs with each other: “Multi-stage series of tests can be analysed either by integrating the results of all stages or by analysing the results of only the last stage. While the choice of test design for four-stage methods has a marked effect on their statistical characteristics, this effect is mitigated in the case of a three-stage method.”

Dr. Zehetmayer’s colleague Alexandra Goll presented a further aspect contributing to the optimum configuration of test methods at the MCP2007. She showed that the individual stages of multi-stage test methods can be configured very differently without having a major detrimental effect on the accuracy of the end result. This means that the initial stages can use more cost-effective methods, provided that more accurate and more expensive methods are used for the following stages, which involve fewer genes.

There is good reason why the latest trends in the statistical analysis of clinical data are being initiated and analysed at the Department of Medical Statistics at the Medical University of Vienna. Prof. Peter Bauer published a paper there in 1989 refuting a basic principle of biostatistics, namely that the test design in an ongoing study must not be changed until the end. This evidence remains the basis for multi-stage adaptive analytical methods, which have recently attracted worldwide research interest due to cost pressures in healthcare and are supported by the FWF in Austria.

Image and text will be available online from Monday, 16th July 2007, 09.00 a.m. CET onwards:

http://www.fwf.ac.at/en/public_relations/press/pv200707-en.html

Media Contact

More Information:

http://www.fwf.ac.at/en/public_relations/press/pv200707-en.htmlAll latest news from the category: Life Sciences and Chemistry

Articles and reports from the Life Sciences and chemistry area deal with applied and basic research into modern biology, chemistry and human medicine.

Valuable information can be found on a range of life sciences fields including bacteriology, biochemistry, bionics, bioinformatics, biophysics, biotechnology, genetics, geobotany, human biology, marine biology, microbiology, molecular biology, cellular biology, zoology, bioinorganic chemistry, microchemistry and environmental chemistry.

Newest articles

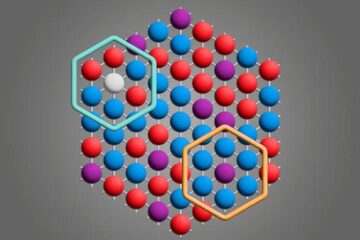

Microscopic basis of a new form of quantum magnetism

Not all magnets are the same. When we think of magnetism, we often think of magnets that stick to a refrigerator’s door. For these types of magnets, the electronic interactions…

An epigenome editing toolkit to dissect the mechanisms of gene regulation

A study from the Hackett group at EMBL Rome led to the development of a powerful epigenetic editing technology, which unlocks the ability to precisely program chromatin modifications. Understanding how…

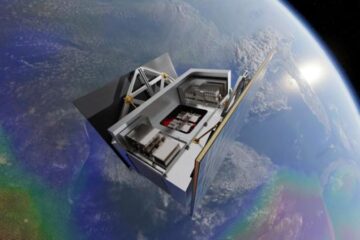

NASA selects UF mission to better track the Earth’s water and ice

NASA has selected a team of University of Florida aerospace engineers to pursue a groundbreaking $12 million mission aimed at improving the way we track changes in Earth’s structures, such…