Carnegie Mellon student uses skin as input for mobile devices

The technology, called Skinput, was developed by Chris Harrison, a third-year Ph.D. student in Carnegie Mellon University's Human-Computer Interaction Institute (HCII), along with Desney Tan and Dan Morris of Microsoft Research. Harrison will describe the technology in a paper to be presented on Monday, April 12, at CHI 2010, the Association for Computing Machinery's annual Conference on Human Factors in Computing Systems in Atlanta, Ga.

Skinput, www.chrisharrison.net/projects/skinput/, could help people take better advantage of the tremendous computing power now available in compact devices that can be easily worn or carried. The diminutive size that makes smart phones, MP3 players and other devices so portable, however, also severely limits the size and utility of the keypads, touchscreens and jog wheels typically used to control them.

“With Skinput, we can use our own skin, the body's largest organ, as an input device,” Harrison said. “It's kind of crazy to think we could summon interfaces onto our bodies, but it turns out to make a lot of sense. Our skin is always with us, and makes the ultimate interactive touch surface.”

In a prototype developed while Harrison was an intern at Microsoft Research last summer, acoustic sensors are attached to the upper arm. These sensors capture sound generated by such actions as flicking or tapping fingers together, or tapping the forearm. This sound is not transmitted through the air, but by transverse waves through the skin and by longitudinal, or compressive, waves through the bones.

Harrison and his colleagues found that the tap of each fingertip, a tap to one of five locations on the arm, or a tap to one of 10 locations on the forearm produces a unique acoustic signature that machine learning programs could learn to identify. These computer programs, which improve with experience, were able to determine the signature of each type of tap by analyzing 186 different features of the acoustic signals, including frequencies and amplitude.
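The general idea of turning an acoustic signal into features and learning a signature per tap location can be illustrated with a small sketch. Everything in it is an assumption made for illustration: the synthetic signals, the location names, the six-feature vector (far fewer than the 186 features mentioned above) and the nearest-centroid classifier are stand-ins, not the researchers' actual method.

```python
import cmath
import math
import random


def extract_features(signal, n_bins=4):
    """Return [peak amplitude, mean absolute amplitude, coarse band energies...]."""
    n = len(signal)
    peak = max(abs(x) for x in signal)
    mean_abs = sum(abs(x) for x in signal) / n
    # Naive DFT magnitudes for the positive frequencies (fine for short windows).
    mags = []
    for k in range(1, n // 2):
        s = sum(signal[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        mags.append(abs(s) / n)
    # Sum the magnitudes into n_bins coarse frequency bands.
    band = max(1, len(mags) // n_bins)
    bands = [sum(mags[i * band:(i + 1) * band]) for i in range(n_bins)]
    return [peak, mean_abs] + bands


def train_centroids(examples):
    """examples: list of (features, label). Returns label -> mean feature vector."""
    sums, counts = {}, {}
    for feats, label in examples:
        acc = sums.setdefault(label, [0.0] * len(feats))
        for i, v in enumerate(feats):
            acc[i] += v
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def classify(centroids, feats):
    """Pick the label whose learned centroid is closest to the feature vector."""
    return min(centroids, key=lambda label: math.dist(centroids[label], feats))


def synthetic_tap(freq_bin, rng, n=64):
    """Hypothetical stand-in for a sensor window: a decaying sinusoid plus noise."""
    return [
        math.exp(-t / 20) * math.sin(2 * math.pi * freq_bin * t / n) + rng.gauss(0, 0.05)
        for t in range(n)
    ]


if __name__ == "__main__":
    rng = random.Random(0)
    # Assumed mapping: each tap location rings at a different dominant frequency.
    locations = {"wrist": 3, "elbow": 12}
    training = [
        (extract_features(synthetic_tap(freq, rng)), name)
        for name, freq in locations.items()
        for _ in range(10)
    ]
    centroids = train_centroids(training)
    print(classify(centroids, extract_features(synthetic_tap(3, rng))))
```

A real system would work from recorded sensor data and a much richer feature set, but the flow is the same: extract features from each tap, learn what each location looks like, then assign new taps to the closest learned signature.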

In a trial involving 20 subjects, the system was able to classify the inputs with 88 percent accuracy overall. Accuracy depended in part on the proximity of the sensors to the input: forearm taps could be identified with 96 percent accuracy when the sensors were attached below the elbow, and with 88 percent accuracy when the sensors were above the elbow. Finger flicks could be identified with 97 percent accuracy.

“There's nothing super sophisticated about the sensor itself,” Harrison said, “but it does require some unusual processing. It's sort of like the computer mouse — the device mechanics themselves aren't revolutionary, but are used in a revolutionary way.” The sensor is an array of highly tuned vibration sensors — cantilevered piezo films.

The prototype armband includes both the sensor array and a small projector that can superimpose colored buttons onto the wearer's forearm, which can be used to navigate through menus of commands. Additionally, a keypad can be projected on the palm of the hand. Simple devices, such as MP3 players, might be controlled simply by tapping fingertips, without need of superimposed buttons; in fact, Skinput can take advantage of proprioception — a person's sense of body configuration — for eyes-free interaction.

Though the prototype is of substantial size and designed to fit the upper arm, the sensor array could easily be miniaturized so that it could be worn much like a wristwatch, Harrison said.

Testing indicates the accuracy of Skinput is reduced in heavier, fleshier people and that age and sex might also affect accuracy. Running or jogging also can generate noise and degrade the signals, the researchers report, but the amount of testing was limited and accuracy likely would improve as the machine learning programs receive more training under such conditions.

Harrison, who delights in “blurring the lines between technology and magic,” is a prodigious inventor. Last year, he launched a company, Invynt LLC, to market a technology he calls “Lean and Zoom,” which automatically magnifies the image on a computer monitor as the user leans toward the screen. He also has developed a technique to create a pseudo-3D experience for video conferencing using a single webcam at each conference site. Another project explored how touchscreens can be enhanced with tactile buttons that can change shape as virtual interfaces on the touchscreen change.

Skinput is an extension of an earlier invention by Harrison called Scratch Input, which used acoustic microphones to enable users to control cell phones and other devices by tapping or scratching on tables, walls or other surfaces.

“Chris is a rising star,” said Scott Hudson, HCII professor and Harrison's faculty adviser. “Even though he's a comparatively new Ph.D. student, the very innovative nature of his work has garnered a lot of attention both in the HCI research community and beyond.”

The HCII is a unit of Carnegie Mellon's School of Computer Science, one of the world's leading centers for computer science research and education. Follow the School of Computer Science on Twitter @SCSatCMU.

About Carnegie Mellon: Carnegie Mellon (www.cmu.edu) is a private, internationally ranked research university with programs in areas ranging from science, technology and business, to public policy, the humanities and the fine arts. More than 11,000 students in the university's seven schools and colleges benefit from a small student-to-faculty ratio and an education characterized by its focus on creating and implementing solutions for real problems, interdisciplinary collaboration and innovation. A global university, Carnegie Mellon has its main campus in the United States in Pittsburgh, Pa. It has campuses in California's Silicon Valley and Qatar, and programs in Asia, Australia and Europe. The university is in the midst of a $1 billion fundraising campaign, titled “Inspire Innovation: The Campaign for Carnegie Mellon University,” which aims to build its endowment, support faculty, students and innovative research, and enhance the physical campus with equipment and facility improvements.

More Information: http://www.cs.cmu.edu

Here you can find a summary of innovations in the fields of information and data processing and up-to-date developments on IT equipment and hardware.

This area covers topics such as IT services, IT architectures, IT management and telecommunications.

Newest articles

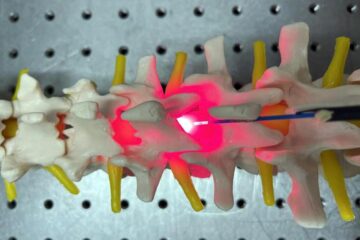

Red light therapy for repairing spinal cord injury passes milestone

Patients with spinal cord injury (SCI) could benefit from a future treatment to repair nerve connections using red and near-infrared light. The method, invented by scientists at the University of…

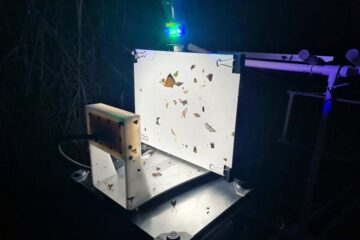

Insect research is revolutionized by technology

New technologies can revolutionise insect research and environmental monitoring. By using DNA, images, sounds and flight patterns analysed by AI, it’s possible to gain new insights into the world of…

X-ray satellite XMM-newton sees ‘space clover’ in a new light

Astronomers have discovered enormous circular radio features of unknown origin around some galaxies. Now, new observations of one dubbed the Cloverleaf suggest it was created by clashing groups of galaxies….