Scientists discover how brain draws and re-draws picture of world

Children often spill when they try to drink from a full cup, but adults rarely do. Learning to perform complex movements almost automatically, such as lifting a cup and tipping it so that the liquid is right at the edge when we’re ready to drink, is one of the brain’s more mysterious abilities.

Now, by conducting experiments with robots and humans, scientists at Johns Hopkins have solved part of this mystery and created a new computer model that accurately reflects how the brain uses experience to improve motor control.

“Now we have a much better idea of how the brain uses information from a variety of sources to create a model of the world around us, and how errors modify that model and change subsequent movements,” says Reza Shadmehr, Ph.D., associate professor of biomedical engineering at The Johns Hopkins University School of Medicine. “We don’t just know how to control objects around us; we have to learn how.”

The researchers’ work is described in the November issue of PLoS Biology, a new peer-reviewed journal launched by the Public Library of Science.

In the researchers’ experiments, volunteers grasped the end of a robot arm and moved it to a target, a stopping point 10 centimeters (about four inches) away, while the robot precisely tracked their efforts to overcome resistance. In real life the resistance might come from a paperweight or a full mug; in these experiments, the researchers programmed the robot to generate forces that hindered the arm’s movement in predictable ways. To reach the target in the allotted time (half a second), volunteers had to learn to counteract those forces.

To give the brain the spatial information it needs to build a model, or map, of the forces to expect in the “world” of the experiment, subjects started from one of three positions: left, center or right. Depending on the group, the starting positions were separated by anywhere from half a centimeter (less than a quarter inch) to 12 centimeters (about four and three-quarter inches).

In initial trials without resistance, subjects moved the robot arm in a straight line toward the target from each of the starting positions. In the next set of trials, subjects had to overcome resistance when beginning from the left and right starting positions, but not from the center.

At first, the resistance pushed subjects’ movements off course. With practice, most groups of subjects again reached the target in a more or less straight line, indicating that they had learned to compensate for the forces applied to the robot arm.

However, when the starting positions were too close together, the brain failed to draw the right conclusions about where to expect the forces, even though visual cues showed whether the subject was starting from the left, center or right, the researchers report.

“When the starting positions were just half a centimeter apart, the brain couldn’t create an accurate picture of the forces — even with practice — and improve movement,” says Shadmehr. “When the starting positions were farther apart, however, subjects more easily adjusted to the resistance and generalized their experiences to anticipate forces likely outside of the tested space.”

Using data from these experiments, the scientists developed a new computer model of how the brain draws on experience to build an internal picture of the world that it can apply to new but similar situations. The model matches observations from this and all previous experiments, and Shadmehr says it is the first to show that the brain multiplies, rather than adds, electrical signals from nerve cells that convey the arm’s position and velocity.

“We know the brain transforms sensory cues — the arm’s position and velocity, among other things — into motor commands,” says Shadmehr. “Our model suggests that it does so by multiplying signals of position and velocity to create what we call a gain field — a system that allows the brain to predict appropriate movement for a wide range of new but similar movements.”
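The difference between multiplying and adding these signals can be illustrated with a toy population of tuned units. The sketch below is hypothetical and is not the researchers’ model: the Gaussian tuning curves, the nine preferred position/velocity pairs, the tuning widths and the readout weights are all assumptions chosen for illustration. Each unit responds to arm position x (in centimeters) and velocity v (in meters per second), and a predicted force is a weighted sum of the unit activities. With additive units, the position term drops out when the force is differentiated with respect to velocity, so the force’s dependence on velocity is the same at every starting position. With multiplicative (gain-field) units, position scales the velocity response, so the same readout can predict, for example, velocity-dependent forces that are stronger from some starting positions than from others.

```python
import math

# Hypothetical toy population; tuning shapes, widths and preferred values are
# illustrative assumptions, not parameters from the study.
POSITIONS_CM = (-6.0, 0.0, 6.0)        # preferred arm positions (left, center, right)
VELOCITIES_M_S = (0.0, 0.2, 0.4)       # preferred hand speeds
UNITS = [(xp, vp) for xp in POSITIONS_CM for vp in VELOCITIES_M_S]
X_WIDTH_CM, V_WIDTH_M_S = 4.0, 0.15    # assumed tuning widths


def tuning(z, pref, width):
    """Gaussian tuning curve that peaks when z equals the preferred value."""
    return math.exp(-((z - pref) ** 2) / (2.0 * width ** 2))


def gain_field(pos_signal, vel_signal):
    """Multiplicative combination: position scales the velocity response."""
    return pos_signal * vel_signal


def additive(pos_signal, vel_signal):
    """Additive combination: position only shifts the response by an offset."""
    return pos_signal + vel_signal


def activity(x, v, combine):
    """Response of every unit to arm position x (cm) and velocity v (m/s)."""
    return [combine(tuning(x, xp, X_WIDTH_CM), tuning(v, vp, V_WIDTH_M_S))
            for xp, vp in UNITS]


def predicted_force(x, v, weights, combine):
    """Linear readout: weighted sum of unit activities."""
    return sum(w * a for w, a in zip(weights, activity(x, v, combine)))


def velocity_slope(x, weights, combine, v=0.2, dv=1e-4):
    """Central-difference estimate of d(force)/d(velocity) at position x."""
    ahead = predicted_force(x, v + dv, weights, combine)
    behind = predicted_force(x, v - dv, weights, combine)
    return (ahead - behind) / (2.0 * dv)


if __name__ == "__main__":
    # Hypothetical readout: only left- and right-tuned units preferring 0.4 m/s
    # contribute, loosely mimicking forces applied from the left and right starts.
    weights = [1.0 if xp != 0.0 and vp == 0.4 else 0.0 for xp, vp in UNITS]
    for name, combine in (("gain field", gain_field), ("additive", additive)):
        left = velocity_slope(-6.0, weights, combine)
        center = velocity_slope(0.0, weights, combine)
        print(f"{name:10s}  slope at left start: {left:.3f}  at center: {center:.3f}")
```

With readout weights only on units tuned to the left and right positions, the gain-field population gives a steeper force-versus-velocity slope at the left start (x = -6) than at the center (x = 0), while the additive population gives the same slope at both. Because the assumed position tuning is broad, the center still inherits part of the slope in this toy model, one way to picture how learning can spread to nearby, untested positions.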

In follow-up experiments with volunteers, the researchers confirmed two predictions of the computer model: how people generalize what they learned in the tests to other starting positions, and under what circumstances people learn most effectively to counteract the resistance.

The paper’s authors are Shadmehr, graduate student Eun Jung Hwang and postdoctoral fellows Opher Donchin and Maurice Smith, all of Johns Hopkins. The study was funded by the National Institute of Neurological Disorders and Stroke and by postdoctoral fellowships from the National Institutes of Health and the Johns Hopkins Department of Biomedical Engineering.

Media Contact

All latest news from the category: Life Sciences and Chemistry

Articles and reports from the Life Sciences and chemistry area deal with applied and basic research into modern biology, chemistry and human medicine.

Valuable information can be found on a range of life sciences fields including bacteriology, biochemistry, bionics, bioinformatics, biophysics, biotechnology, genetics, geobotany, human biology, marine biology, microbiology, molecular biology, cellular biology, zoology, bioinorganic chemistry, microchemistry and environmental chemistry.

Newest articles

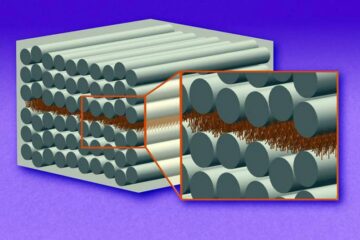

“Nanostitches” enable lighter and tougher composite materials

In research that may lead to next-generation airplanes and spacecraft, MIT engineers used carbon nanotubes to prevent cracking in multilayered composites. To save on fuel and reduce aircraft emissions, engineers…

Trash to treasure

Researchers turn metal waste into catalyst for hydrogen. Scientists have found a way to transform metal waste into a highly efficient catalyst to make hydrogen from water, a discovery that…

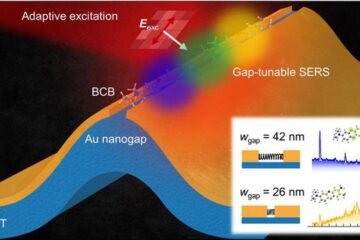

Real-time detection of infectious disease viruses

… by searching for molecular fingerprinting. A research team consisting of Professor Kyoung-Duck Park and Taeyoung Moon and Huitae Joo, PhD candidates, from the Department of Physics at Pohang University…