Researchers disentangle quantum machine learning

Artist's impression of a barren plateau in a quantum machine learning landscape.

Credit: Tony Melov

An international group of researchers has discovered an important barrier that prevents quantum machine learning models from being trained: too much quantum entanglement.

Quantum machine learning studies the advantages of quantum computers for artificial intelligence (AI). The hope is that future quantum neural networks will combine the strengths of quantum computation and traditional neural networks. However, recent theoretical research points to potential difficulties.

Machine learning requires algorithms to learn from data in a phase known as training. During training, the algorithm progressively improves at the given task. However, a large class of quantum algorithms has been mathematically proven to improve only negligibly because of a phenomenon known as a barren plateau, first reported by a team from Google in 2018. Experiencing a barren plateau can stop the quantum algorithm from learning.

The new theoretical research, published in PRX Quantum, further investigates the causes of barren plateaus, with a new focus on the impact of too much entanglement. Entanglement of qubits – or quantum bits – is a quantum effect that underlies the exponential speedups expected from quantum computing.

“While entanglement is necessary for quantum speedups, the research indicates the need for careful design of which qubits should be entangled and how much,” says research co-author Dr Maria Kieferova, Research Fellow at the ARC Centre for Quantum Computation and Communication Technology based at the University of Technology Sydney.

“This is in contradiction to the common understanding that more quantum entanglement provides faster speedups.”

“We have proven that excess entanglement between the output qubits, or visible units, and the rest of the quantum neural network hinders the learning process and that large amounts of entanglement can be catastrophic for the model,” says lead author Dr Carlos Ortiz Marrero, who is currently a Research Assistant Professor at North Carolina State University.

“This result teaches us which structures of quantum neural networks we need to avoid for successful algorithms.”
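
The effect described in the quote can be illustrated with a small numerical toy. The sketch below is not the method used in the paper; it assumes that a Haar-random pure state is a reasonable stand-in for a highly entangled network state, and the function names (`random_state`, `reduced_purity`, `mean_excess_purity`) are hypothetical. It traces out the hidden qubits and measures how far the state of a single visible qubit is from the featureless, maximally mixed state. As more hidden qubits are entangled with the visible one, that distance shrinks, which suggests why the output would carry little information about the model's parameters.

```python
import random


def random_state(num_qubits, rng):
    """Sample a Haar-random pure state as a list of 2**num_qubits amplitudes."""
    dim = 2 ** num_qubits
    amps = [complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(dim)]
    norm = sum(abs(a) ** 2 for a in amps) ** 0.5
    return [a / norm for a in amps]


def reduced_purity(state, n_visible, n_hidden):
    """Purity Tr(rho^2) of the visible qubits after tracing out the hidden ones."""
    dv, dh = 2 ** n_visible, 2 ** n_hidden
    # Amplitude index = visible_index * dh + hidden_index.
    # rho[i][j] = sum_k psi[i, k] * conj(psi[j, k])
    rho = [
        [
            sum(state[i * dh + k] * state[j * dh + k].conjugate() for k in range(dh))
            for j in range(dv)
        ]
        for i in range(dv)
    ]
    # rho is Hermitian, so Tr(rho^2) is the sum of |rho[i][j]|^2.
    return sum(abs(rho[i][j]) ** 2 for i in range(dv) for j in range(dv))


def mean_excess_purity(n_visible, n_hidden, samples=200, seed=0):
    """Average of Tr(rho^2) - 1/dv, the squared Hilbert-Schmidt distance
    between the visible qubits' state and the maximally mixed state."""
    rng = random.Random(seed)
    dv = 2 ** n_visible
    total = 0.0
    for _ in range(samples):
        state = random_state(n_visible + n_hidden, rng)
        total += reduced_purity(state, n_visible, n_hidden) - 1.0 / dv
    return total / samples


if __name__ == "__main__":
    # One visible (output) qubit, increasingly many hidden qubits.
    for n_hidden in (1, 2, 4, 6, 8):
        print(n_hidden, mean_excess_purity(1, n_hidden))
```

Running the script prints one line per hidden-qubit count. The second column should fall quickly as hidden qubits are added, in line with the warning that large amounts of entanglement between the visible units and the rest of the network can be catastrophic for the model.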

“Even though the research showed that a range of straightforward translations from classical machine learning models to the quantum realm isn’t beneficial, there is a way forward,” says Dr Ortiz Marrero.

“By limiting the depth and connectivity of the network, we might be able to avoid the regimes where quantum machine learning algorithms cannot be trained.”

This can be achieved by precisely and deliberately deploying entanglement in quantum machine learning models.

“While entanglement is a powerful tool to add to our models, it must be used like a scalpel and not a sledgehammer,” says co-author Dr Nathan Wiebe, University of Toronto.

Journal: PRX Quantum

DOI: 10.1103/PRXQuantum.2.040316

Method of Research: Computational simulation/modeling

Subject of Research: Not applicable

Article Title: Entanglement-Induced Barren Plateaus

Article Publication Date: 25-Oct-2021

Media Contact

All latest news from the category: Information Technology

Here you can find a summary of innovations in the fields of information and data processing and up-to-date developments on IT equipment and hardware.

This area covers topics such as IT services, IT architectures, IT management and telecommunications.

Newest articles

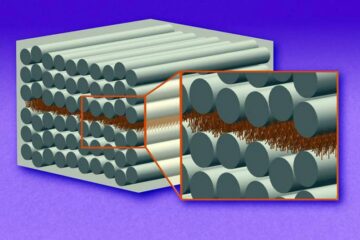

“Nanostitches” enable lighter and tougher composite materials

In research that may lead to next-generation airplanes and spacecraft, MIT engineers used carbon nanotubes to prevent cracking in multilayered composites. To save on fuel and reduce aircraft emissions, engineers…

Trash to treasure

Researchers turn metal waste into catalyst for hydrogen. Scientists have found a way to transform metal waste into a highly efficient catalyst to make hydrogen from water, a discovery that…

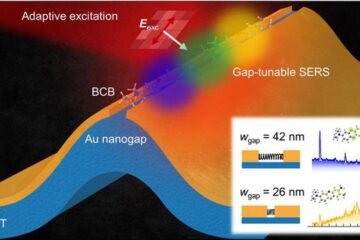

Real-time detection of infectious disease viruses

… by searching for molecular fingerprinting. A research team consisting of Professor Kyoung-Duck Park and Taeyoung Moon and Huitae Joo, PhD candidates, from the Department of Physics at Pohang University…