Mastermind

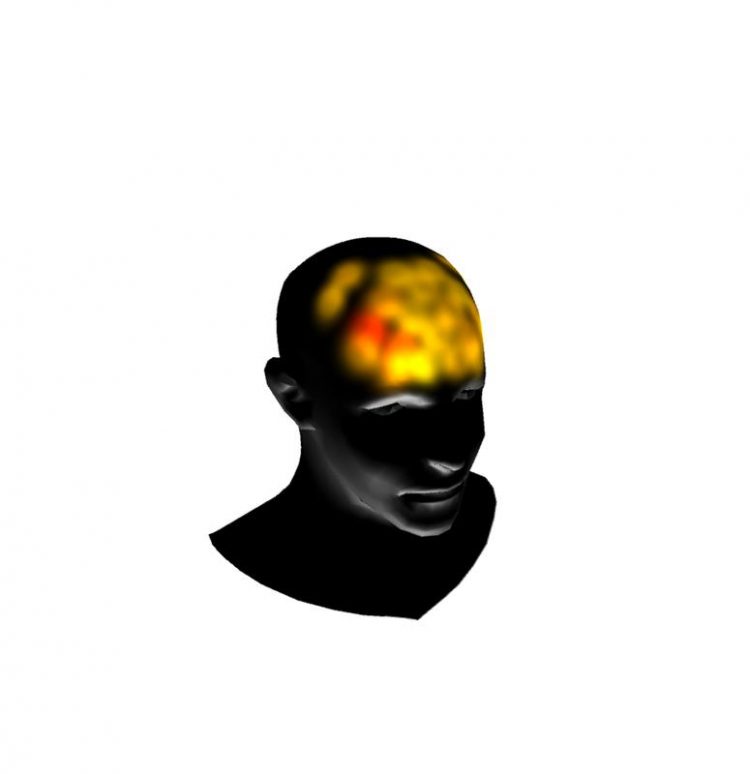

Peeping into the brain with fNIRS: the color of the blots indicates blood flow in that area of the cortex (image courtesy of the visualization tool QVis). Daniel Diers@MPI for Biological Cybernetics

Recent studies have indicated that the brain makes decisions on visual and auditory stimuli using accumulated sensory evidence, and that this process is orchestrated by a network of neurons from the front (the prefrontal area) and the back (the parietal area) of the brain.

Ksander de Winkel and his colleagues from the Department of Prof. Bülthoff at the Max Planck Institute for Biological Cybernetics investigated whether these findings also apply to decisions on self-motion stimuli (passive motion of one’s own body). The results showed that the scientists could predict how well a participant was able to tell the different motions apart, which is an indication that an accumulation of sensory evidence of self-motion was measured.

These findings support the idea that the network of prefrontal and parietal neurons is ‘modality-independent’, meaning that the neurons in this network are dedicated to collecting evidence and making decisions using any type of sensory information and are not dependent on visual and vestibular (relating to the sense of balance) cues.

The scientists placed participants in a motion simulator and rotated them around an earth-vertical axis that was aligned with the spine. More specifically, participants experienced a large number of pairs of such rotations, in which one rotation was always slightly more intense than the other.

The order of the smaller and larger rotations was randomized for each pair, and participants had to judge which rotation of each pair was more intense. While the participants performed the task, the blood flow in the prefrontal and parietal areas was measured using a novel technique: functional Near-Infrared Spectroscopy (fNIRS). The scientists then used these recordings to test whether it was possible to predict the participants’ judgments for every single pair of rotations.
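The pairing and randomization described above can be illustrated with a minimal sketch. It is not the study's actual protocol: the function names, the number of pairs, and the intensity values are hypothetical placeholders chosen only to show how each pair contains one slightly more intense rotation in a random order.

```python
import random


def make_trials(n_pairs, base_intensity=1.0, increment=0.1, seed=0):
    """Build pairs of rotations in which one is slightly more intense.

    All numeric values are hypothetical placeholders, not the study's.
    """
    rng = random.Random(seed)
    trials = []
    for _ in range(n_pairs):
        pair = [base_intensity, base_intensity + increment]
        rng.shuffle(pair)  # randomize which rotation is presented first
        # Position (0 or 1) of the more intense rotation: the correct answer.
        correct = pair.index(max(pair))
        trials.append({"rotations": tuple(pair), "correct": correct})
    return trials


def proportion_correct(trials, responses):
    """Fraction of pairs in which the response names the stronger rotation."""
    hits = sum(t["correct"] == r for t, r in zip(trials, responses))
    return hits / len(trials)


trials = make_trials(n_pairs=200)
```

A participant's judgments would be passed to `proportion_correct` as a list of 0s and 1s, one per pair, giving a simple measure of how well the two motions could be told apart.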

Research on the brain activity of participants or patients in motion is scarce, because the readings of common neuroimaging methods, such as electroencephalography (EEG) or functional magnetic resonance imaging (fMRI), are distorted by body motion and by electromagnetic interference, such as electrical noise in vehicles. This is not the case with fNIRS. Infrared light is emitted through the scalp into the brain tissue, and the reflected light is measured.

Since the intensity of the infrared light is very low, this method is non-invasive and harmless. Blood flow and oxygen level increase in active brain regions (the haemodynamic response), and the method captures this increase. This makes it possible to draw conclusions about activity in these brain areas.

“This method is very promising”, de Winkel gladly announces. “Up to now, we had to rely on what the participants could tell us about their perception. Now we get to glance directly into the brain.” The results showed that they could predict how well a participant could tell the motions apart using the fNIRS recordings, and therefore indicated that the areas under investigation were indeed involved in decision making on self-motion. The more sensory evidence participants collect, the better they will be able to tell two motions apart.

“If we know how the brain makes decisions and what areas are involved, we can relate specific behavioral problems and physical traumata to these areas”, de Winkel explains. Moreover, because conventional neuroimaging techniques are not suitable for use with moving participants, the results are encouraging for the use of fNIRS to perform neuroimaging on participants in moving vehicles and simulators. This might pave the way for a completely new line of research.

Original Publication:

https://doi.org/10.1016/j.neulet.2017.04.053

Authors: Dr. Ksander de Winkel, Alessandro Nesti, Hasan Ayaz, Heinrich H. Bülthoff

Contact:

Scientist Dr. Ksander de Winkel

Phone: +49 7071 601-643

E-mail: ksander.dewinkel[at]tuebingen.mpg.de

Media Liaison Officer Beate Fülle

Head of Communications and Public Relations

Phone: +49 (0)7071 601-777

E-mail: presse-kyb[at]tuebingen.mpg.de

Max Planck Institute for Biological Cybernetics

The Max Planck Institute for Biological Cybernetics deals with the processing of signals and information in the brain. We know that our brain must constantly process an immense wealth of sensory impressions to coordinate our behavior and enable us to interact with our environment. Surprisingly little is known, however, about how our brain actually manages to perceive, recognize and learn. The scientists at the Max Planck Institute for Biological Cybernetics are therefore looking into the question of which signals and processes are necessary to generate a consistent picture of our environment, and the corresponding behavior, from the various kinds of sensory information. Scientists from three departments and seven research groups work on fundamental questions of brain research using different approaches and methods.

Media Contact

All latest news from the category: Life Sciences and Chemistry

Articles and reports from the Life Sciences and chemistry area deal with applied and basic research into modern biology, chemistry and human medicine.

Valuable information can be found on a range of life sciences fields including bacteriology, biochemistry, bionics, bioinformatics, biophysics, biotechnology, genetics, geobotany, human biology, marine biology, microbiology, molecular biology, cellular biology, zoology, bioinorganic chemistry, microchemistry and environmental chemistry.

Newest articles

Properties of new materials for microchips

… can now be measured well. Reseachers of Delft University of Technology demonstrated measuring performance properties of ultrathin silicon membranes. Making ever smaller and more powerful chips requires new ultrathin…

Floating solar’s potential

… to support sustainable development by addressing climate, water, and energy goals holistically. A new study published this week in Nature Energy raises the potential for floating solar photovoltaics (FPV)…

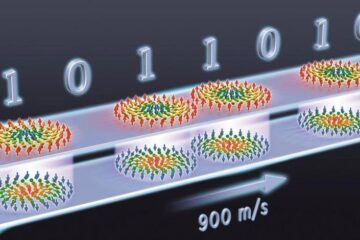

Skyrmions move at record speeds

… a step towards the computing of the future. An international research team led by scientists from the CNRS1 has discovered that the magnetic nanobubbles2 known as skyrmions can be…