Latest Supercomputers Enable High-Resolution Climate Models, Truer Simulation of Extreme Weather

Not long ago, it would have taken several years to run a high-resolution simulation on a global climate model. But using some of the most powerful supercomputers now available, Lawrence Berkeley National Laboratory (Berkeley Lab) climate scientist Michael Wehner was able to complete a run in just three months.

He found that the high-resolution simulations were not only much closer to actual observations but also far better at reproducing intense storms, such as hurricanes and cyclones. The study, “The effect of horizontal resolution on simulation quality in the Community Atmospheric Model, CAM5.1,” has been published online in the Journal of Advances in Modeling Earth Systems.

“I’ve been calling this a golden age for high-resolution climate modeling because these supercomputers are enabling us to do gee-whiz science in a way we haven’t been able to do before,” said Wehner, who was also a lead author for the recent Fifth Assessment Report of the Intergovernmental Panel on Climate Change (IPCC). “These kinds of calculations have gone from basically intractable to heroic to now doable.”

Using version 5.1 of the Community Atmospheric Model, developed by the Department of Energy (DOE) and the National Science Foundation (NSF) for use by the scientific community, Wehner and his co-authors conducted an analysis for the period 1979 to 2005 at three spatial resolutions: 25 km, 100 km, and 200 km. They then compared those results to each other and to observations.
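As a rough illustration of why the finest of these resolutions is so much more demanding, the short Python sketch below estimates how many grid columns each spacing implies. It assumes equal-area square cells covering the whole globe, which is a simplification and not the model's actual grid. The function name `approx_columns` is hypothetical and used only for this example.

```python
# Back-of-envelope sketch: grid columns needed to cover Earth at each spacing.
# Assumption: square, equal-area cells. The real model grid differs.
EARTH_SURFACE_KM2 = 5.1e8  # approximate surface area of Earth in square km


def approx_columns(spacing_km):
    """Rough column count if every cell were spacing_km by spacing_km."""
    return EARTH_SURFACE_KM2 / spacing_km ** 2


for spacing_km in (200, 100, 25):
    columns = approx_columns(spacing_km)
    ratio = columns / approx_columns(200)
    print(f"{spacing_km:>3} km: ~{columns:,.0f} columns ({ratio:.0f}x the 200 km grid)")
```

Under these assumptions, the column count grows with the inverse square of the grid spacing. Finer grids generally also need shorter time steps, so the actual computing cost likely grows faster than the column count alone suggests.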

One simulation generated 100 terabytes of data, or 100,000 gigabytes. The computing was performed at Berkeley Lab’s National Energy Research Scientific Computing Center (NERSC), a DOE Office of Science User Facility. “I’ve literally waited my entire career to be able to do these simulations,” Wehner said.

The higher resolution was particularly helpful in mountainous areas because the models average the altitude over each grid cell (roughly 25 km on a side at high resolution, 200 km on a side at low resolution). With a more accurate representation of mountainous terrain, the higher-resolution model is better able to simulate snow and rain in those regions.
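The effect of averaging altitude over a grid cell can be sketched with a toy example. The Python snippet below uses an invented one-dimensional elevation profile (a single 3,000 m ridge, not real terrain) and averages it over cells of 25, 100, and 200 km. The names `synthetic_ridge` and `grid_mean_elevations` are hypothetical, and this is not how the model itself processes topography.

```python
import math


def synthetic_ridge(x_km):
    """Hypothetical elevation in metres: one smooth ridge, 3000 m at its crest."""
    return 3000.0 * math.exp(-((x_km - 200.0) / 20.0) ** 2)


def grid_mean_elevations(cell_km, domain_km=400, sample_km=1):
    """Average the 1 km elevation samples that fall inside each grid cell."""
    means = []
    for start in range(0, domain_km, cell_km):
        samples = [
            synthetic_ridge(x + 0.5 * sample_km)  # sample at the midpoint of each km
            for x in range(start, start + cell_km, sample_km)
        ]
        means.append(sum(samples) / len(samples))
    return means


for cell_km in (25, 100, 200):
    peak = max(grid_mean_elevations(cell_km))
    print(f"{cell_km:>3} km cells: highest grid-mean elevation ~ {peak:.0f} m")
```

In this toy setup, the coarser the cell, the more the ridge is flattened into its surroundings, which is one way to see why a coarse model struggles with mountain snow and rain.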

“High resolution gives us the ability to look at intense weather, like hurricanes,” said Kevin Reed, a researcher at the National Center for Atmospheric Research (NCAR) and a co-author on the paper. “It also gives us the ability to look at things locally at a lot higher fidelity. Simulations are much more realistic at any given place, especially if that place has a lot of topography.”

The high-resolution model produced stronger storms and more of them, which was closer to the actual observations for most seasons. “In the low-resolution models, hurricanes were far too infrequent,” Wehner said.

The IPCC chapter on long-term climate change projections, for which Wehner was a lead author, concluded that a warming world will cause some areas to be drier and others to see more rainfall, snow, and storms. Extremely heavy precipitation was projected to become even more extreme in a warmer world. “I have no doubt that is true,” Wehner said. “However, knowing it will increase is one thing, but having a confident statement about how much and where as a function of location requires the models do a better job of replicating observations than they have.”

Wehner says the high-resolution models will help scientists to better understand how climate change will affect extreme storms. His next project is to run the model for a future-case scenario. Further down the line, Wehner says scientists will be running climate models with 1 km resolution. To do that, they will have to have a better understanding of how clouds behave.

“A cloud system-resolved model can reduce one of the greatest uncertainties in climate models, by improving the way we treat clouds,” Wehner said. “That will be a paradigm shift in climate modeling. We’re at a shift now, but that is the next one coming.”

The paper’s other co-authors include Fuyu Li, Prabhat, and William Collins of Berkeley Lab; and Julio Bacmeister, Cheng-Ta Chen, Christopher Paciorek, Peter Gleckler, Kenneth Sperber, Andrew Gettelman, and Christiane Jablonowski from other institutions. The research was supported by the Biological and Environmental Division of the Department of Energy’s Office of Science.

Lawrence Berkeley National Laboratory addresses the world’s most urgent scientific challenges by advancing sustainable energy, protecting human health, creating new materials, and revealing the origin and fate of the universe. Founded in 1931, Berkeley Lab’s scientific expertise has been recognized with 13 Nobel prizes. The University of California manages Berkeley Lab for the U.S. Department of Energy’s Office of Science. For more, visit www.lbl.gov.

DOE’s Office of Science is the single largest supporter of basic research in the physical sciences in the United States, and is working to address some of the most pressing challenges of our time. For more information, please visit science.energy.gov.

Media Contact

All latest news from the category: Information Technology

Here you can find a summary of innovations in the fields of information and data processing and up-to-date developments on IT equipment and hardware.

This area covers topics such as IT services, IT architectures, IT management and telecommunications.

Newest articles

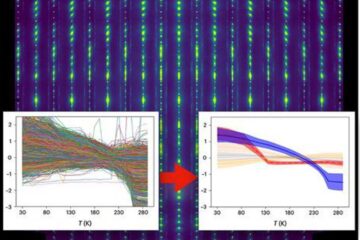

Machine learning algorithm reveals long-theorized glass phase in crystal

Scientists have found evidence of an elusive, glassy phase of matter that emerges when a crystal’s perfect internal pattern is disrupted. X-ray technology and machine learning converge to shed light…

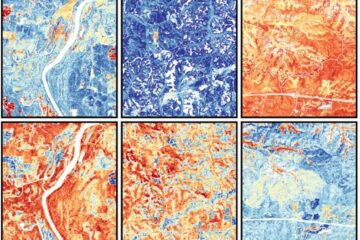

Mapping plant functional diversity from space

HKU ecologists revolutionize ecosystem monitoring with novel field-satellite integration. An international team of researchers, led by Professor Jin WU from the School of Biological Sciences at The University of Hong…

Inverters with constant full load capability

…enable an increase in the performance of electric drives. Overheating components significantly limit the performance of drivetrains in electric vehicles. Inverters in particular are subject to a high thermal load,…