Flying 3D eye-bots

The 3D camera in the flying robot can identify small objects measuring 20 by 15 centimeters from seven meters away. © Fraunhofer IMS

Like a well-rehearsed formation team, a flock of flying robots rises slowly into the air with a loud buzzing noise. A good two dozen in number, they perform an intricate dance in the sky above the seething hordes of soccer fans. Rowdy hooligans have stormed the field and set off flares.

Fights are breaking out all over, smoke is hindering visibility, and chaos is the order of the day. Only the swarm of flying drones can maintain an overview of the situation. These unmanned aerial vehicles (UAVs) are a kind of mini-helicopter, with a wingspan of around two meters. They have a propeller on each of their two variable-geometry side wings, which lends them rapid and precise maneuverability.

In operation over the playing field, their cameras and sensors capture urgently needed images and data, and transmit them to the control center. Where are the most seriously injured people? What’s the best way to separate the rival gangs? The information provided by the drones allows the head of operations to make important decisions more quickly, while the robots form up to go about their business above the arena autonomously – and without ever colliding with each other, or with any other obstacles.

A CMOS sensor developed by researchers at the Fraunhofer Institute for Microelectronic Circuits and Systems IMS in Duisburg lies at the heart of the anti-collision technology. “The sensor can measure three-dimensional distances very efficiently,” says Werner Brockherde, head of the development department. Just as in a black and white camera, every pixel on the sensor is given a gray value. “But on top of that,” he explains, “each pixel is also assigned a distance value.” This enables the drones to accurately determine their position in relation to other objects around them.

Sensor has a higher resolution than radar

The distance sensor developed by the IMS offers significant advantages over radar, which measures distances using reflected echoes. “The sensor has a much higher local resolution,” says Brockherde. “Given the near-field operating conditions, radar images would be far too coarse.” The flying robots are capable of identifying even small objects measuring 20 by 15 centimeters at ranges of up to 7.5 meters. Moreover, this distance information is then transmitted at the very impressive rate of 12 images per second.

Even when there is interfering light, for example when a drone is flying directly into the sun, the sensor will deliver accurate images. It operates according to the time-of-flight (TOF) process, whereby light sources emit short pulses that are reflected by objects and bounced back to the sensor. In order to prevent over-bright ambient light from masking the signal, the electronic shutter only opens for a few nanoseconds. In addition, the sensor also takes differential measurements, in which the first image is captured using ambient light only, a second is taken using the light pulse as well, and the difference between the two determines the required output signal. “All of this happens in real time,” adds Brockherde.
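The two ideas in this paragraph, differential measurement and distance from the travel time of a light pulse, can be illustrated with a short Python sketch. It is not the IMS sensor's actual processing, which the article does not describe in detail. The function names, the list-of-lists frame layout, and the sample values are assumptions chosen for illustration; only the relationship "distance equals the speed of light times the round-trip time, divided by two" is general physics.

```python
# Illustrative sketch only: names, frame layout and sample values are
# hypothetical and do not describe the real sensor's internals.

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def distance_from_round_trip(round_trip_seconds):
    """Convert a light pulse's round-trip time into a one-way distance in meters."""
    # The pulse travels to the object and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


def differential_signal(ambient_frame, pulsed_frame):
    """Subtract the ambient-only frame from the ambient-plus-pulse frame.

    Both frames are lists of rows of pixel intensities with the same shape.
    Negative differences (noise) are clamped to zero.
    """
    return [
        [max(pulsed - ambient, 0) for ambient, pulsed in zip(ambient_row, pulsed_row)]
        for ambient_row, pulsed_row in zip(ambient_frame, pulsed_frame)
    ]


if __name__ == "__main__":
    # A round trip of 50 nanoseconds corresponds to roughly 7.5 meters.
    print(round(distance_from_round_trip(50e-9), 2))

    ambient = [[10, 12], [9, 11]]   # first exposure: ambient light only
    pulsed = [[15, 11], [30, 11]]   # second exposure: ambient light plus pulse
    print(differential_signal(ambient, pulsed))
```

The round-trip times involved at these ranges are only tens of nanoseconds, which is consistent with the article's remark that the electronic shutter opens for just a few nanoseconds.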

The 3D distance sensors are built into cameras manufactured by TriDiCam, a spin-off company of Fraunhofer IMS. Jochen Noell, TriDiCam’s managing director, admits: “This research project has presented us with new challenges as regards ambient operating conditions and the safety of the sensor technology.” The work falls under the AVIGLE project, one of the winners of the ‘Hightech.NRW’ cutting-edge technology competition which receives funding from both the Land of North Rhine-Westphalia and the EU. The IMS engineers will be presenting their sensor technology at the Fraunhofer CMOS Imaging Workshop in Duisburg on June 12 and 13 this year.

Conducting intelligent aerial surveillance of major events is not the only intended use for flying robots. They could also be of benefit to disaster relief workers, and likewise to urban planners, who could utilize them to produce detailed 3D models of streets or to inspect roofs in order to establish their suitability for solar installations.

Whether deployed to create virtual maps of difficult-to-access areas, to monitor construction sites or to measure contamination at nuclear power plants, these mini UAVs could potentially be used in a wide range of applications, obviating the need for expensive aerial photography and/or satellite imaging.

Media Contact

All latest news from the category: Information Technology

Here you can find a summary of innovations in the fields of information and data processing and up-to-date developments on IT equipment and hardware.

This area covers topics such as IT services, IT architectures, IT management and telecommunications.

Newest articles

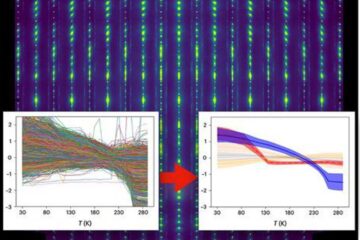

Machine learning algorithm reveals long-theorized glass phase in crystal

Scientists have found evidence of an elusive, glassy phase of matter that emerges when a crystal’s perfect internal pattern is disrupted. X-ray technology and machine learning converge to shed light…

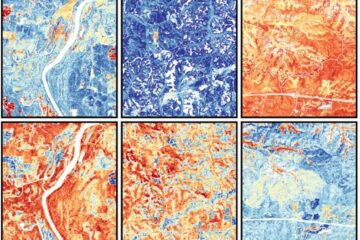

Mapping plant functional diversity from space

HKU ecologists revolutionize ecosystem monitoring with novel field-satellite integration. An international team of researchers, led by Professor Jin WU from the School of Biological Sciences at The University of Hong…

Inverters with constant full load capability

…enable an increase in the performance of electric drives. Overheating components significantly limit the performance of drivetrains in electric vehicles. Inverters in particular are subject to a high thermal load,…