NASA Finds 2011 Ninth-Warmest Year on Record

NASA's Goddard Institute for Space Studies (GISS) in New York, which monitors global surface temperatures on an ongoing basis, released an updated analysis that shows how temperatures around the globe in 2011 compared with the average global temperature from the mid-20th century. The comparison shows that Earth continues to experience warmer temperatures than it did several decades ago. The average temperature around the globe in 2011 was 0.92 degrees F (0.51 C) warmer than the mid-20th century baseline.

“We know the planet is absorbing more energy than it is emitting,” said GISS Director James E. Hansen. “So we are continuing to see a trend toward higher temperatures. Even with the cooling effects of a strong La Niña influence and low solar activity for the past several years, 2011 was one of the 10 warmest years on record.”

The difference between 2011 and the warmest year in the GISS record (2010) is 0.22 degrees F (0.12 C). This underscores the emphasis scientists put on the long-term trend of global temperature rise. Because of the large natural variability of climate, scientists do not expect temperatures to rise consistently year after year. However, they do expect a continuing temperature rise over decades.

The first 11 years of the 21st century experienced notably higher temperatures compared to the middle and late 20th century, Hansen said. The only year from the 20th century in the top 10 warmest years on record is 1998.

Higher temperatures today are largely sustained by increased atmospheric concentrations of greenhouse gases, especially carbon dioxide. These gases absorb infrared radiation emitted by Earth and release that energy into the atmosphere rather than allowing it to escape to space. As their atmospheric concentration has increased, the amount of energy “trapped” by these gases has led to higher temperatures.

The carbon dioxide level in the atmosphere was about 285 parts per million in 1880, when the GISS global temperature record begins. By 1960, the average concentration had risen to about 315 parts per million. Today it exceeds 390 parts per million and continues to rise at an accelerating pace.
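
The three concentrations quoted above are enough to illustrate the accelerating pace with simple arithmetic. The short Python sketch below is illustrative only: it assumes that "today" means 2011 and treats 390 parts per million as a lower bound, and the function name `average_rates` is hypothetical.

```python
# (year, CO2 in parts per million) as quoted in the article.
# Assumption: "today" is taken as 2011, and 390 ppm is a lower bound
# because the article says the level "exceeds" that value.
POINTS = [(1880, 285.0), (1960, 315.0), (2011, 390.0)]


def average_rates(points):
    """Return the average rise in ppm per year between consecutive points."""
    rates = []
    for (year0, ppm0), (year1, ppm1) in zip(points, points[1:]):
        rates.append((ppm1 - ppm0) / (year1 - year0))
    return rates


rates = average_rates(POINTS)
# 1880 to 1960: 30 ppm over 80 years, 0.375 ppm per year
# 1960 to 2011: at least 75 ppm over 51 years, roughly 1.47 ppm per year
print(rates)
```

Under these assumptions, the average rate of increase since 1960 is roughly four times the average rate over the preceding 80 years.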

The temperature analysis produced at GISS is compiled from weather data collected at more than 1,000 meteorological stations around the world, satellite observations of sea surface temperature, and Antarctic research station measurements. A publicly available computer program is used to calculate the difference between surface temperature in a given month and the average temperature for the same place during 1951 to 1980. This three-decade period functions as a baseline for the analysis.
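
The baseline comparison described above can be sketched in a few lines of Python. This is a simplified illustration for a single location, not the GISS program itself, which also has to combine and grid data from many stations. The function names and the input layout (a dictionary keyed by year and month) are assumptions made for this example.

```python
from statistics import mean

# Baseline period named in the article.
BASELINE_START, BASELINE_END = 1951, 1980


def monthly_anomalies(records, month):
    """Return {year: anomaly in deg C} for one calendar month at one place.

    `records` is a hypothetical layout: {(year, month): temperature in deg C}.
    The anomaly is the temperature minus the 1951-1980 mean for that month.
    """
    baseline = [
        temp
        for (year, m), temp in records.items()
        if m == month and BASELINE_START <= year <= BASELINE_END
    ]
    if not baseline:
        raise ValueError("no baseline data for this month")
    baseline_mean = mean(baseline)
    return {
        year: temp - baseline_mean
        for (year, m), temp in records.items()
        if m == month
    }


def celsius_delta_to_fahrenheit(delta_c):
    """Convert a temperature difference; no 32-degree offset applies."""
    return delta_c * 9 / 5


# Made-up sample values, for illustration only.
sample = {(1951, 1): 10.0, (1980, 1): 12.0, (2011, 1): 11.5, (2011, 7): 25.0}
print(monthly_anomalies(sample, 1)[2011])   # 0.5 (11.5 minus the baseline mean of 11.0)
print(celsius_delta_to_fahrenheit(0.51))    # about 0.92, matching the figure quoted for 2011
```

Because an anomaly is a difference between two temperatures, converting it from Celsius to Fahrenheit only requires multiplying by 9/5, which is why 0.51 C corresponds to about 0.92 degrees F.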

The resulting temperature record is very close to analyses by the Met Office Hadley Centre in the United Kingdom and the National Oceanic and Atmospheric Administration's National Climatic Data Center in Asheville, N.C.

Hansen said he expects a record-breaking global average temperature in the next two to three years because solar activity is on the upswing and the next El Niño will increase tropical Pacific temperatures. The warmest years on record were 2005 and 2010, in a virtual tie.

“It's always dangerous to make predictions about El Niño, but it's safe to say we'll see one in the next three years,” Hansen said. “It won't take a very strong El Niño to push temperatures above 2010.”

Media Contact

All latest news from the category: Earth Sciences

Earth Sciences (also referred to as Geosciences), which deals with basic issues surrounding our planet, plays a vital role in the area of energy and raw materials supply.

Earth Sciences comprises subjects such as geology, geography, geological informatics, paleontology, mineralogy, petrography, crystallography, geophysics, geodesy, glaciology, cartography, photogrammetry, meteorology and seismology, early-warning systems, earthquake research and polar research.

Newest articles

Superradiant atoms could push the boundaries of how precisely time can be measured

Superradiant atoms can help us measure time more precisely than ever. In a new study, researchers from the University of Copenhagen present a new method for measuring the time interval,…

Ion thermoelectric conversion devices for near room temperature

The electrode sheet of the thermoelectric device consists of ionic hydrogel, which is sandwiched between the electrodes to form, and the Prussian blue on the electrode undergoes a redox reaction…

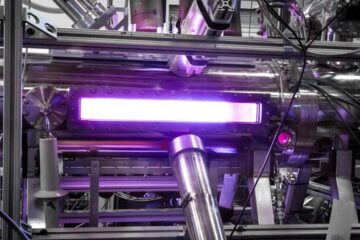

Zap Energy achieves 37-million-degree temperatures in a compact device

New publication reports record electron temperatures for a small-scale, sheared-flow-stabilized Z-pinch fusion device. In the nine decades since humans first produced fusion reactions, only a few fusion technologies have demonstrated…