New technique reveals details of forest fire recovery

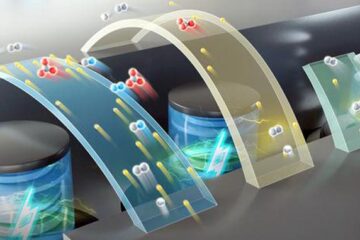

The high-resolution imaging techniques used in this study accurately distinguished live, healthy trees from dead ones, and a healthy canopy from ground-level resprouting and other understory greenery. Credit: Brookhaven National Laboratory

Do you know someone who's so caught up in the details of a problem that they “can't see the forest for the trees”?

Scientists seeking to understand how forests recover from wildfires sometimes have the opposite problem. Conventional satellite systems that survey vast tracts of land burned by forest fires provide useful, general information, but can gloss over important details and lead scientists to conclude that a forest has recovered when it's still in the early stages of recovery.

According to a team of ecologists at the U.S. Department of Energy's Brookhaven National Laboratory, a new technique using a combination of much higher-resolution remote sensing methods provides a more accurate and more detailed picture of what's happening on the ground.

In a paper that will appear in the June 2018 issue of the journal Remote Sensing of Environment, they describe how they used much higher-resolution satellite imagery and aerial measurements collected by NASA to characterize a forested area damaged by a 2012 wildfire that had spread onto the Laboratory's grounds.

“Being able to quantify the relationship between forest recovery and burn severity is critical information for us to understand both forest dynamics and carbon sequestration,” said Ran Meng, a postdoctoral research associate in Brookhaven Lab's Terrestrial Ecosystem Science & Technology (TEST) research group and lead author on the paper.

“This work shows that by using more advanced remote sensing measurements with very high-resolution spectral imaging and LiDAR–a technique that allows us to measure the forest's 3D physical structure–we can characterize fire effects and monitor post-fire recovery more accurately,” he said.

Alistair Rogers, leader of the TEST group, added, “This work is a nice example of the value of high resolution, multi-sensor, remote sensing. The novel combination of data from these sensors enabled deeper understanding of a challenging ecological question and provides a new tool for forest management.”

Ground level, satellite data mismatch

Meng noted the need for improved remote measurements as a graduate student, before coming to Brookhaven. While tracking vegetation recovery after wildfires in the Mountain West and California, he found that his observations on the ground didn't match what the conventional, moderate-resolution satellite measurements (such as those obtained by Landsat) were showing.

“Doing field studies, we measure tree parameters and features, and we can see if the canopy–the part of the ecosystem formed by the tops of the trees–is healthy, or if there is just regrowth at the ground level,” Meng said.

The scientists need to be able to distinguish this “understory” growth (for example, shrubs and grasses) from the canopy to determine if the forest has actually recovered to its pre-fire state.

“In terms of managing forests and understanding how much carbon is stored in these systems and how they support biodiversity and change over time, the canopy trees are what's important,” explained Shawn Serbin, Meng's supervisor at Brookhaven.

But traditional satellite imaging, which has been used to study big forest fires since the 1970s, can't distinguish the canopy from the understory, Serbin noted. It produces images with much larger pixel sizes–squares with sides measuring about 30 meters or more–and only measures in a few “channels,” or reflected colors/wavelengths of light, with no sense of depth.

“So, if a fire sweeps through and then a bunch of herbaceous plants that are very green spring up in the newly exposed understory, a traditional satellite system would just see all of that at once–a general pattern of greenness–and confuse that as showing that 'the vegetation has recovered,' even when there are still fully burned trees on the ground,” Serbin said.

“Clearly, we need a way to understand in more detail how the forest recovers in terms of the canopy trees without having to conduct massive ground studies, which would be way too time- and labor-intensive,” he added–or as Meng put it, “mission impossible.”

A fortuitous opportunity

Fortunately, remote sensing technologies have come a long way since the 1970s, particularly over the past 10 years. And thanks to an ongoing collaboration with scientists at NASA's Goddard Space Flight Center and the availability of fine-resolution commercial satellite imagery, Meng and Serbin got a chance to try out these updated technologies and compare the results with ground observations.

Their testbed was a swath of forest in their own backyard that had been damaged when a wildfire in the Long Island Pine Barrens spread onto an undeveloped portion of Brookhaven Lab's property in April 2012. Meng first used fine-resolution commercial imagery purchased by the National Geospatial-Intelligence Agency (NGA), collected before and after the fire, to create a high-resolution map of burn severity (published previously).

Then he overlaid on this map detailed measurements of forest characteristics that he extracted from remote sensing imagery collected by the NASA Goddard team in 2015. By comparing the high-resolution remote data with their own on-the-ground observations, Meng and Serbin could test whether the new technologies were conveying an accurate representation of how the trees were recovering in the different areas of burn severity.

“This was an opportunity to study forest dynamics in an unprecedented way,” Serbin said.

The airborne NASA instruments included cameras for very high-resolution digital photography (with pixels measuring one square meter instead of the 30 x 30-meter pixels used by conventional satellites); “hyperspectral” imaging (to pick up light in ~100 colors); thermal infrared imaging (for measuring heat); and LiDAR (which operates like a radar gun speed detector–shooting out beams of near-infrared light and measuring how long they take to bounce back to measure distance, or in this case, the depth into the forest).

Because these instruments make their measurements simultaneously, the scientists can track exactly what color (even subtle variations of green) is reflected back, and from what depth in the forest–all at one-meter resolution.
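The time-of-flight idea behind LiDAR, and the difference in pixel size described above, can be sketched in a few lines of Python. This is only an illustration of the principle, not the processing used for the NASA instruments: the function names and the pulse timings in the demo are hypothetical, and the speed of light in vacuum is used as an approximation for air.

```python
# Illustrative sketch only: names and sample timings are hypothetical,
# and the vacuum speed of light stands in for the speed of light in air.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def range_from_round_trip(round_trip_seconds: float) -> float:
    """Distance to a reflecting surface from a pulse's round-trip time.

    The pulse travels out and back, so the one-way distance is half
    of the total path length.
    """
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


def height_above_ground(canopy_return_s: float, ground_return_s: float) -> float:
    """Height of an early (canopy) return above the later ground return."""
    return range_from_round_trip(ground_return_s) - range_from_round_trip(canopy_return_s)


def fine_pixels_per_coarse_pixel(coarse_side_m: int = 30, fine_side_m: int = 1) -> int:
    """How many fine pixels fit inside one coarse, square pixel."""
    return (coarse_side_m // fine_side_m) ** 2


if __name__ == "__main__":
    # Hypothetical pulse: one return from a tree crown, a later one from the ground.
    print(height_above_ground(canopy_return_s=6.0e-6, ground_return_s=6.1e-6))
    print(fine_pixels_per_coarse_pixel())
```

A single 30 x 30-meter pixel covers the same ground as 900 one-square-meter pixels, which is why fine detail such as individual crowns is averaged away in the coarser imagery.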

“This can give us much more information and reduce our uncertainties for understanding the forest dynamics and consequences of fire,” Meng said.

The high-resolution and 3D structural data were able to differentiate the canopy from the understory and gave the scientists an accurate representation of forest recovery in relation to burn severity that matched what they were seeing on the ground.
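A toy example may help show why height information matters here. The sketch below is not the study's method: it assumes a hypothetical 0-to-1 "greenness" score and a LiDAR-derived height for each one-meter cell, and an arbitrary 5-meter cutoff for what counts as canopy. It compares what a single coarse pixel would report (the average over everything) with the greenness of the canopy cells alone.

```python
# Toy illustration with made-up numbers; the greenness scale, the height
# cutoff, and the plot composition are all assumptions for this sketch.
from statistics import mean
from typing import List, Optional, Tuple

Cell = Tuple[float, float]  # (greenness 0..1, height of top surface in meters)


def coarse_pixel_greenness(cells: List[Cell]) -> float:
    """What one large pixel reports: the average greenness of every cell."""
    return mean(greenness for greenness, _ in cells)


def canopy_greenness(cells: List[Cell], min_canopy_height_m: float = 5.0) -> Optional[float]:
    """Average greenness of only those cells tall enough to be canopy."""
    canopy = [g for g, height in cells if height >= min_canopy_height_m]
    return mean(canopy) if canopy else None


# Hypothetical burned plot of 900 one-meter cells:
# 200 cells of standing dead trees, 700 cells of fresh green understory.
burned_plot: List[Cell] = [(0.1, 12.0)] * 200 + [(0.8, 0.5)] * 700

if __name__ == "__main__":
    print("coarse pixel:", coarse_pixel_greenness(burned_plot))
    print("canopy only:", canopy_greenness(burned_plot))
```

In this made-up plot the coarse pixel looks fairly green because the understory dominates the average, while the canopy-only value stays low, which mirrors the mismatch the scientists describe between conventional satellite data and ground observations.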

The conventional satellite data, obscured by new understory growth, had suggested a recovery rate that kept increasing with burn severity. The high-resolution data instead showed that the recovery rate of canopy trees increased with burn severity only up to a certain threshold.

“Before they reach a certain threshold of damage, trees can recover–create new branches. But after they reach this critical point they get killed and can't recover. They have to start from scratch and it will take a long time,” said Meng. Meanwhile, new understory species, taking advantage of the sunlight able to reach the ground through the depleted canopy, rapidly take their place.

Seeing species differences remotely

The scientists were even able to pick out quantitative differences in recovery rates among different species in the canopy.

“Here at the Lab, we have a simple example of pine vs. oak trees. Pine has a conical shape with thin, closely packed, dark green needles. Oak has a rounder structure with broad lighter-colored leaves. They also have different chemistry and water content. All of that changes the way they reflect light, so they each have a unique 'spectral signature' that we can pick out with these new technologies,” Serbin said.

The scientists used machine learning techniques to train computers to recognize the unique spectral and structural features so they could differentiate among these and other species.
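The article does not say which machine learning techniques were used, so the following is only a minimal sketch of the general idea, using a simple nearest-centroid classifier as a stand-in. The three features (green reflectance, near-infrared reflectance, and a crown "roundness" score) and all of the numbers are hypothetical, chosen to echo the pine-versus-oak contrast Serbin describes.

```python
# Minimal nearest-centroid sketch; NOT the study's actual method.
# Feature choices and all training values below are hypothetical.
from math import dist
from typing import Dict, List, Tuple

Features = Tuple[float, ...]


def train_centroids(samples: Dict[str, List[Features]]) -> Dict[str, Features]:
    """Average the labeled feature vectors of each species into one centroid."""
    centroids = {}
    for label, rows in samples.items():
        count = len(rows)
        centroids[label] = tuple(sum(column) / count for column in zip(*rows))
    return centroids


def classify(features: Features, centroids: Dict[str, Features]) -> str:
    """Assign the species whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: dist(features, centroids[label]))


# Hypothetical training crowns: (green reflectance, near-infrared reflectance, roundness)
training = {
    "pine": [(0.04, 0.30, 0.4), (0.05, 0.32, 0.5)],
    "oak": [(0.09, 0.45, 0.8), (0.10, 0.48, 0.9)],
}

if __name__ == "__main__":
    centroids = train_centroids(training)
    print(classify((0.045, 0.31, 0.45), centroids))
    print(classify((0.095, 0.46, 0.85), centroids))
```

A real workflow would use many more spectral bands and structural measurements and would scale the features before comparing distances, but the principle is the same: each species occupies its own region of feature space, and a new crown is labeled by where it falls.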

“Using a traditional satellite imaging system, it would be impossible to tell these species apart. But now, for the first time, we can use our new technology to quantify these responses over large areas and over a longer time than ever before,” Meng said.

Applying the knowledge

Beyond providing insight into the health of the Long Island Pine Barrens, the method should work to improve remote assessments of fire damage and recovery in different types of forests, and particularly in remote areas where field studies are impractical.

“We think this method should apply across the world. We think it's adaptable, and the data is publicly available, so we could scale this up,” Serbin said.

Understanding the details of forest dynamics would help inform forest management strategies, such as when and where to stage a controlled burn to limit the buildup of fuel for wildfires, or to identify where new trees–and which types–should be planted to maintain biodiversity. It would also provide input for models designed to predict how forest ecosystems will respond to other types of challenge, such as drought or climate change.

“The people tasked with projecting how ecosystems are going to respond to change in the future need very detailed information on the dynamics of forests and vegetation for their models,” Serbin said. “We've learned that the structure of vegetation is highly related to how much carbon can be stored in those ecosystems, and that ecosystems with higher biodiversity store more carbon. So the ability to assess biodiversity and forest structure will be very important to building those models.”


Brookhaven Lab's contributions to this study were funded by the Lab's Laboratory Directed Research and Development program. NASA imagery was funded by the U.S. Forest Service.
