What to do with 15 million gigabytes of data

Andreas Hirstius, manager of CERN Openlab and the CERN School of Computing, explains in November’s Physics World how computer scientists have risen to the challenge of dealing with this unprecedented volume of data.

When CERN staff first considered, in the mid-1990s, how they might deal with the large volume of data that the Large Hadron Collider (LHC) would produce when its two beams of protons collide, a single gigabyte of disk space still cost a few hundred dollars and CERN’s total external connectivity was equivalent to just one of today’s broadband connections.

It quickly became clear that computing power at CERN, even taking Moore’s Law into account, would be significantly less than that required to analyse LHC data. The solution, it transpired during the 1990s, was to turn to “high-throughput computing”, where the focus is not on shifting data as quickly as possible from A to B but rather on shifting as much information as possible between those two points.

High-throughput computing is ideal for particle physics because the data produced in the millions of proton-proton collisions are all independent of one another – and can therefore be handled independently. So, rather than using a massive all-in-one mainframe supercomputer to analyse the results, the data can be sent to separate computers, all connected via a network.
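Because each collision can be analysed without reference to any other, the work divides naturally among many workers. The following is a minimal Python sketch of that idea, not CERN's actual software: the function names and the toy "events" (lists of energy readings) are hypothetical, and a local thread pool stands in for separate networked computers.

```python
from concurrent.futures import ThreadPoolExecutor


def analyse_event(event):
    """Hypothetical per-event analysis: total the readings of one collision."""
    return sum(event)


def analyse_all(events, workers=4):
    """Analyse every event independently and return results in input order."""
    # No event depends on another, so each one can go to any free worker.
    # The thread pool is a stand-in (an assumption) for networked machines.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(analyse_event, events))


if __name__ == "__main__":
    toy_events = [[1.0, 2.5], [0.5, 0.5, 3.0], [4.0]]
    print(analyse_all(toy_events))
```

The result is the same whether the events are processed one after another or spread across workers, which is what makes the problem suited to many modest computers rather than one large one.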

From here sprang the LHC Grid. The Grid, which was officially inaugurated last month, is a tiered structure centred on CERN (Tier-0), which is connected by superfast fibre links to 11 Tier-1 centres at places like the Rutherford Appleton Laboratory (RAL) in the UK and Fermilab in the US. More than one CD’s worth of data (about 700 MB) can be sent down these fibres to each of the Tier-1 centres every second.
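To put these figures side by side, the short Python sketch below works out the combined rate of the 11 Tier-1 links and the average rate needed to move 15 million gigabytes. It rests on two assumptions not stated in the article: that the 15 million gigabytes is an annual volume, and that decimal units apply (1 GB = 1000 MB). The function names are illustrative only.

```python
MB_PER_GB = 1000                    # assumption: decimal units
SECONDS_PER_YEAR = 365 * 24 * 3600  # 31,536,000 s

TIER1_CENTRES = 11                  # from the article
LINK_RATE_MB_S = 700                # about one CD per second, per centre
ANNUAL_VOLUME_GB = 15_000_000       # assumption: headline figure is per year


def aggregate_rate_gb_s(centres, rate_mb_s):
    """Combined outbound rate if every Tier-1 link runs at the quoted rate."""
    return centres * rate_mb_s / MB_PER_GB


def average_rate_mb_s(volume_gb, seconds):
    """Average rate needed to move volume_gb within the given time."""
    return volume_gb * MB_PER_GB / seconds


if __name__ == "__main__":
    total = aggregate_rate_gb_s(TIER1_CENTRES, LINK_RATE_MB_S)
    mean = average_rate_mb_s(ANNUAL_VOLUME_GB, SECONDS_PER_YEAR)
    print(f"Aggregate Tier-1 rate: {total:.1f} GB/s")
    print(f"Average rate for the annual volume: {mean:.0f} MB/s")
```

Under these assumptions the links together could carry about 7.7 GB every second, while spreading 15 million gigabytes evenly over a year corresponds to an average of roughly 476 MB per second.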

Tier-1 centres then feed down to another 250 regional Tier-2 centres, which are in turn accessed by individual researchers through university computer clusters, desktops and laptops (Tier-3).

As Andreas Hirstius writes, “The LHC challenge presented to CERN’s computer scientists was as big as the challenges to its engineers and physicists. The computer scientists managed to develop a computing infrastructure that can handle huge amounts of data, thereby fulfilling all of the physicists’ requirements and in some cases even going beyond them.”

Also in this issue:

• President George W Bush’s science adviser, the physicist John H Marburger, asks whether Bush’s eight years in office have been good for science in the US.

• Brian Cox may be the media-friendly face of particle physics, but how does the former D:Ream pop star, now a Manchester University physics professor, find the time for both research and his outreach work?

• Beauty and the beast: in his 100th column for Physics World, Robert P Crease asks whether CERN’s Large Hadron Collider, the biggest experiment of all time, can be dubbed “beautiful”.

More Information:

http://www.physicsworld.comAll latest news from the category: Physics and Astronomy

This area deals with the fundamental laws and building blocks of nature and how they interact, the properties and the behavior of matter, and research into space and time and their structures.

innovations-report provides in-depth reports and articles on subjects such as astrophysics, laser technologies, nuclear, quantum, particle and solid-state physics, nanotechnologies, planetary research and findings (Mars, Venus) and developments related to the Hubble Telescope.

Newest articles

Silicon Carbide Innovation Alliance to drive industrial-scale semiconductor work

Known for its ability to withstand extreme environments and high voltages, silicon carbide (SiC) is a semiconducting material made up of silicon and carbon atoms arranged into crystals that is…

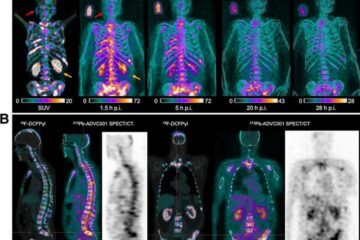

New SPECT/CT technique shows impressive biomarker identification

…offers increased access for prostate cancer patients. A novel SPECT/CT acquisition method can accurately detect radiopharmaceutical biodistribution in a convenient manner for prostate cancer patients, opening the door for more…

How 3D printers can give robots a soft touch

Soft skin coverings and touch sensors have emerged as a promising feature for robots that are both safer and more intuitive for human interaction, but they are expensive and difficult…