Brain controls robot arm in monkey

Research represents big step toward development of brain-controlled artificial limbs for people

Reaching for something you want seems a simple enough task, but not for someone with a prosthetic arm, whose brain has no direct control over such fluid, purposeful movements. Yet according to research presented at the 2005 American Association for the Advancement of Science (AAAS) Annual Meeting, scientists have made significant strides toward creating a permanent artificial device that can restore deliberate mobility to patients with paralyzing injuries. The concept is that, through thought alone, a person could direct a robotic arm – a neural prosthesis – to reach and manipulate a desired object.

As a step toward that goal, University of Pittsburgh researchers report that a monkey outfitted with a child-sized robotic arm controlled directly by its own brain signals is able to feed itself chunks of fruits and vegetables. The researchers trained the monkey to feed itself by using signals from its brain that are passed through tiny electrodes, thinner than a human hair, and fed into a specially designed algorithm that tells the arm how to move. “The beneficiaries of such technology will be patients with spinal cord injuries or nervous system disorders such as amyotrophic lateral sclerosis or ALS,” said Andrew Schwartz, Ph.D., professor of neurobiology at the University of Pittsburgh School of Medicine and senior researcher on the project.

The neural prosthesis moves much like a natural arm, with a fully mobile shoulder and elbow and a simple gripper that allows the monkey to grasp and hold food while its own arms are restrained. Computer software interprets signals picked up by tiny probes inserted into neuronal pathways in the motor cortex, a brain region where voluntary movement originates as electrical impulses. The neurons’ collective activity is then fed through the algorithm and sent to the arm, which carries out the actions the monkey intended to perform with its own limb.

The primary motor cortex, a part of the brain that controls movement, has thousands of nerve cells, called neurons, that fire like Geiger counters. These neurons are sensitive to movement in different directions. The direction in which a neuron fires fastest is called its “preferred direction.” For each arm movement, no matter how subtle, thousands of motor cortical cells change their firing rate, and collectively, that signal, along with signals from other brain structures, is routed through the spinal cord to the different muscle groups needed to generate the desired movement.

Because so many neurons fire in concert to produce even the simplest movement, it would be impossible to create probes that could eavesdrop on them all. The Pitt researchers overcame that obstacle by developing a special algorithm that uses the limited information from relatively few neurons to fill in the missing signals. The algorithm decodes the cortical signals like a voting machine by using each cell’s preferred direction as a label and taking a continuous tally of the population throughout the intended movement.

Monkeys were trained to reach for targets. Then, with electrodes placed in the brain, the algorithm was adjusted to assume the animal was intending to reach for those targets. For the task, food was placed at different locations in front of the monkey, and the animal, with its own arms restrained, used the robotic arm to bring the food to its mouth. “When the monkey wants to move its arm, cells are activated in the motor cortex,” said Dr. Schwartz. “Each of those cells activates at a different intensity depending on the direction the monkey intends to move its arm. The direction that produces the greatest intensity is that cell’s preferred direction. The average of the preferred directions of all of the activated cells is called the population vector. We can use the population vector to accurately predict the velocity and direction of normal arm movement, and in the case of this prosthetic, it serves as the control signal to convey the monkey’s intention to the prosthetic arm.”
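The population vector idea can be illustrated with a small simulation. The sketch below is not the researchers' algorithm. It assumes a simplified two-dimensional case in which each simulated cell follows a cosine tuning curve, and it weights each cell's preferred direction by how far its firing rate departs from a baseline. The function names, the baseline rate, the tuning depth and the number of cells are all hypothetical values chosen for illustration.

```python
import math

def cosine_tuned_rate(intended_angle, preferred_angle, baseline=10.0, depth=8.0):
    """Hypothetical firing rate (spikes/s) of one simulated cell.

    Assumes cosine tuning: the cell fires fastest when the intended
    direction matches its preferred direction.
    """
    return baseline + depth * math.cos(intended_angle - preferred_angle)

def population_vector(rates, preferred_angles, baseline=10.0):
    """Tally the 'votes': each cell pushes along its preferred direction,
    weighted by how far its rate is above or below baseline."""
    x = sum((r - baseline) * math.cos(p) for r, p in zip(rates, preferred_angles))
    y = sum((r - baseline) * math.sin(p) for r, p in zip(rates, preferred_angles))
    return x, y

def decode_direction(rates, preferred_angles, baseline=10.0):
    """Return the angle (radians) of the population vector."""
    x, y = population_vector(rates, preferred_angles, baseline)
    return math.atan2(y, x)

# Assumed setup: 16 simulated cells with evenly spaced preferred directions.
preferred = [2 * math.pi * i / 16 for i in range(16)]
intended = math.radians(60)  # direction the simulated animal "intends" to move
rates = [cosine_tuned_rate(intended, p) for p in preferred]

decoded = decode_direction(rates, preferred)
print(f"intended: 60.0 deg, decoded: {math.degrees(decoded):.1f} deg")
```

In this idealized setting the decoded angle matches the intended one because the preferred directions are evenly spread and the rates are noise-free. With real recordings from a limited, unevenly distributed set of neurons the estimate would be noisier, which is consistent with the article's point that the animal also has to learn to adjust its firing rates.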

Because the software had to rely on a small number of the thousands of neurons needed to move the arm, the monkey did the rest of the work, learning through biofeedback how to refine the arm’s movements by modifying the firing rates of the recorded neurons.

In recent weeks, Dr. Schwartz and his team were able to improve the algorithms to make it easier for the monkey to learn how to operate the arm. The improvements also will allow them to develop more sophisticated brain devices with smooth, responsive and highly precise movement. They are now working to develop a prosthesis with realistic hand and finger movements. Because of the complexity of a human hand and the movements it needs to make, the researchers expect it to be a major challenge.

Others involved in the research include Meel Velliste, Ph.D., a Pitt post-doctoral fellow in the Schwartz lab; Chance Spalding, a Pitt bioengineering graduate student; and Anthony Brockwell, Ph.D., Valerie Ventura, Ph.D., Robert Kass, Ph.D., and graduate student Cari Kaufman from the Statistics Department at Carnegie Mellon University.

More Information:

http://www.upmc.edu

This subject area encompasses research and studies in the field of human medicine.

Among the wide-ranging list of topics covered here are anesthesiology, anatomy, surgery, human genetics, hygiene and environmental medicine, internal medicine, neurology, pharmacology, physiology, urology and dental medicine.

Newest articles

High-energy-density aqueous battery based on halogen multi-electron transfer

Traditional non-aqueous lithium-ion batteries have a high energy density, but their safety is compromised due to the flammable organic electrolytes they utilize. Aqueous batteries use water as the solvent for…

First-ever combined heart pump and pig kidney transplant

…gives new hope to patient with terminal illness. Surgeons at NYU Langone Health performed the first-ever combined mechanical heart pump and gene-edited pig kidney transplant surgery in a 54-year-old woman…

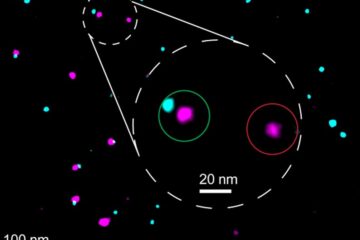

Biophysics: Testing how well biomarkers work

LMU researchers have developed a method to determine how reliably target proteins can be labeled using super-resolution fluorescence microscopy. Modern microscopy techniques make it possible to examine the inner workings…