'Robo Brain' will teach robots everything from the Internet

To serve as helpers in our homes, offices and factories, robots will need to understand how the world works and how the humans around them behave.

Robotics researchers have been teaching them these things one at a time: how to find your keys, pour a drink, put away dishes, and when not to interrupt two people having a conversation. Robo Brain aims to bring all of this together in one package.

“Our laptops and cell phones have access to all the information we want. If a robot encounters a situation it hasn't seen before, it can query Robo Brain in the cloud,” said Ashutosh Saxena, assistant professor of computer science at Cornell University.

Saxena and colleagues at Cornell, Stanford and Brown universities and the University of California, Berkeley, say Robo Brain will process images to pick out the objects in them. By connecting images and video with text, it will learn to recognize objects and how they are used, as well as human language and behavior.

If a robot sees a coffee mug, it can learn from Robo Brain not only that it's a coffee mug, but also that liquids can be poured into or out of it, that it can be grasped by the handle, and that it must be carried upright when it is full, as opposed to when it is empty and being carried from the dishwasher to the cupboard.
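To make the mug example concrete, here is a minimal Python sketch of the kind of record a knowledge base could return for one object: its label, what it affords, where to grasp it, and handling rules that depend on its state. The `ObjectKnowledge` class, the `KNOWLEDGE` table and the state names are hypothetical illustrations, not Robo Brain's actual schema or API.

```python
from dataclasses import dataclass, field


@dataclass
class ObjectKnowledge:
    """Hypothetical record of what a knowledge base might store about one object."""
    label: str
    affordances: set[str] = field(default_factory=set)
    grasp_point: str = ""
    # Hypothetical: maps an object state (e.g. "full") to a handling rule.
    constraints: dict[str, str] = field(default_factory=dict)

    def handling_rule(self, state: str) -> str:
        # Fall back to "no constraint" when nothing is stored for this state.
        return self.constraints.get(state, "no constraint")


# Hypothetical lookup table standing in for a remote knowledge base.
KNOWLEDGE = {
    "coffee mug": ObjectKnowledge(
        label="coffee mug",
        affordances={"pour into", "pour out of", "grasp"},
        grasp_point="handle",
        constraints={"full": "keep upright"},
    )
}

mug = KNOWLEDGE["coffee mug"]
print(mug.grasp_point)             # handle
print(mug.handling_rule("full"))   # keep upright
print(mug.handling_rule("empty"))  # no constraint
```

Keying constraints by state keeps the "upright only when full" rule next to the object it applies to, so a robot that knows the mug's state can look up how to carry it.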

Saxena described the project at the 2014 Robotics: Science and Systems Conference, July 12-16 in Berkeley, and has launched a website for the project at http://robobrain.me.

The system employs what computer scientists call “structured deep learning,” in which information is stored at many levels of abstraction. An easy chair is a member of the class of chairs, and going up another level, chairs are furniture. Robo Brain knows that chairs are something you can sit on, but that a human can also sit on a stool, a bench or the lawn.
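The levels of abstraction in the chair example can be illustrated with a simple "is-a" hierarchy in which a property attached to a general class is inherited by the classes below it. This Python sketch is an assumption for illustration only: it uses plain dictionaries and property inheritance rather than any deep learning, and the names `IS_A`, `SITTABLE`, `ancestors` and `can_sit_on` are hypothetical.

```python
# Hypothetical "is-a" edges: each concept points to its more general parent.
IS_A = {
    "easy chair": "chair",
    "chair": "furniture",
    "stool": "furniture",
    "bench": "furniture",
}

# Hypothetical "can be sat on" facts, attached at whichever level they hold.
SITTABLE = {"chair", "stool", "bench", "lawn"}


def ancestors(concept: str) -> list[str]:
    """Walk up the is-a chain from a concept to its most general class."""
    chain = []
    while concept in IS_A:
        concept = IS_A[concept]
        chain.append(concept)
    return chain


def can_sit_on(concept: str) -> bool:
    """A concept is sittable if it, or any class above it, is marked sittable."""
    return concept in SITTABLE or any(c in SITTABLE for c in ancestors(concept))


print(ancestors("easy chair"))   # ['chair', 'furniture']
print(can_sit_on("easy chair"))  # True (inherited from "chair")
print(can_sit_on("lawn"))        # True
```

Attaching "sittable" to chairs rather than to furniture as a whole means an easy chair inherits it while furniture in general does not, and things outside the furniture hierarchy, such as the lawn, can still carry the property directly.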

A robot's computer brain stores what it has learned in a form mathematicians call a Markov model, which can be represented graphically as a set of points connected by lines (formally called nodes and edges). The nodes could represent objects, actions or parts of an image, and each one is assigned a probability, which expresses how much it can vary and still be considered correct. When searching for knowledge, a robot's brain builds its own chain and looks for one in the knowledge base that matches within those limits.
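The matching step is described here only loosely. One common way to realize the idea, swapped in as an assumption rather than Robo Brain's documented method, is to store each task as a first-order Markov chain with transition probabilities on its edges, score an observed chain by its log-likelihood under each stored model, and accept the best model only if it clears a threshold that stands in for "within those limits." The task names, probabilities, threshold and function names below are all hypothetical.

```python
import math
from typing import Optional

# Hypothetical stored knowledge: each task is a first-order Markov chain whose
# edges carry transition probabilities between nodes (objects or actions).
MODELS = {
    "pour coffee": {
        ("grasp mug", "move to pot"): 0.9,
        ("move to pot", "pour"): 0.8,
        ("pour", "place mug"): 0.7,
    },
    "put away dishes": {
        ("grasp mug", "open cupboard"): 0.6,
        ("open cupboard", "place mug"): 0.9,
    },
}


def log_likelihood(chain: list[str], edges: dict[tuple[str, str], float]) -> float:
    """Sum of log transition probabilities; -inf if any step has no edge."""
    total = 0.0
    for step in zip(chain, chain[1:]):
        p = edges.get(step, 0.0)
        if p == 0.0:
            return -math.inf
        total += math.log(p)
    return total


def best_match(chain: list[str], min_log_likelihood: float = math.log(0.3)) -> Optional[str]:
    """Return the stored model that best explains the chain, if it clears the (hypothetical) threshold."""
    name, score = max(
        ((n, log_likelihood(chain, e)) for n, e in MODELS.items()),
        key=lambda item: item[1],
    )
    return name if score >= min_log_likelihood else None


observed = ["grasp mug", "move to pot", "pour"]
print(best_match(observed))  # pour coffee
```

Working in log space avoids numerical underflow when chains get long, and a missing edge scores negative infinity, so a chain containing a step the stored model has never seen cannot match it.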

“The Robo Brain will look like a gigantic, branching graph with abilities for multi-dimensional queries,” said Aditya Jami, a visiting researcher at Cornell, who designed the large-scale database for the brain. Picture something like a chart of relationships between Facebook friends, but on the scale of the Milky Way galaxy.

Like a human learner, Robo Brain will have teachers, thanks to crowdsourcing. The Robo Brain website will display things the brain has learned, and visitors will be able to make additions and corrections.

The project is supported by the National Science Foundation, the Office of Naval Research, the Army Research Office, Google, Microsoft, Qualcomm, the Alfred P. Sloan Foundation and the National Robotics Initiative, whose goal is to advance robotics to help make the United States competitive in the world economy.

Cornell University has television, ISDN and dedicated Skype/Google+ Hangout studios available for media interviews.

More Information:

http://www.cornell.eduAll latest news from the category: Interdisciplinary Research

News and developments from the field of interdisciplinary research.

Among other topics, you can find stimulating reports and articles related to microsystems, emotions research, futures research and stratospheric research.

Newest articles

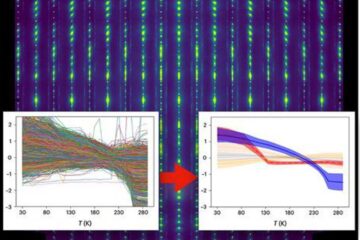

Machine learning algorithm reveals long-theorized glass phase in crystal

Scientists have found evidence of an elusive, glassy phase of matter that emerges when a crystal’s perfect internal pattern is disrupted. X-ray technology and machine learning converge to shed light…

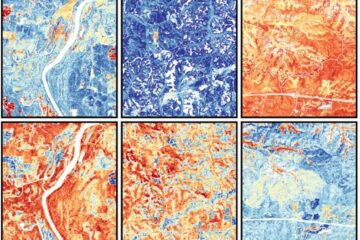

Mapping plant functional diversity from space

HKU ecologists revolutionize ecosystem monitoring with novel field-satellite integration. An international team of researchers, led by Professor Jin WU from the School of Biological Sciences at The University of Hong…

Inverters with constant full load capability

…enable an increase in the performance of electric drives. Overheating components significantly limit the performance of drivetrains in electric vehicles. Inverters in particular are subject to a high thermal load,…