Unlocking the dynamic web

Change is coming to the IT world, says Dave Robertson, coordinator of the EU-funded OpenKnowledge research project.

Just as individuals are now storing, editing and sharing photos and videos on the web, other users from small businesses to CERN’s Large Hadron Collider are moving their data, computation, and collaboration into “the cloud” – the internet’s worldwide network of servers and computers, but also the millions of handheld devices, monitors, sensors and other components linked to it.

“More and more companies are pushing much of what they do out into the cloud,” says Robertson. “If that’s the way things are going, and if it’s going to be very large, then society needs some way to be able to take control of how that gets coordinated.”

Creating a toolkit to access, coordinate and exploit the cloud’s dynamic resources is what OpenKnowledge set out to accomplish.

If the IT world embraces OpenKnowledge, says Robertson, users will no longer have to rely on a small number of big companies to access interactive internet services.

“You would see lots of people who weren’t so specialised writing and using and sharing lots of specific keys that would unlock what’s available on the internet for themselves and others,” says Robertson.

Roles, rules, results

Suppose, says Robertson, a number of potential partners want to create a new service or product. One might manage a database, another can analyse the data, a third can package and present the results, while the fourth has marketing and management skills.

These potential partners could be anywhere in the world and could be using a wide variety of software, natural and computer languages, internet interfaces and devices.

The OpenKnowledge researchers – who, as members of an EU-funded project, are themselves scattered from Scotland to Spain – set out to create a user-friendly system that would let these virtual partners find each other, define their respective roles, figure out the rules and sequences that will let them interact smoothly, and get their new enterprise up and running.

To accomplish that goal, the OpenKnowledge team created a new language for specifying the kind of processes that let different systems interact with each other. The language is called LCC, for Lightweight Coordination Calculus.

“We’ve gone for the simplest way to understand a process that we could possibly devise,” says Robertson.
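The idea of describing an interaction as roles plus an ordered exchange of messages can be illustrated with a short Python sketch. This is not LCC syntax, which the article does not show; the role names, message names, the `Step` class and the `run_interaction` function are all hypothetical and chosen only to mirror the four-partner example above.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Step:
    """One message in the interaction: who sends what to whom."""
    sender: str
    message: str
    receiver: str


# Hypothetical interaction model: data flows from role to role in a fixed order.
INTERACTION: List[Step] = [
    Step("database", "raw_data", "analyst"),
    Step("analyst", "findings", "presenter"),
    Step("presenter", "report", "manager"),
]


def run_interaction(
    steps: List[Step],
    handlers: Dict[str, Callable[[Any], Any]],
    initial: Any,
) -> Tuple[Any, List[str]]:
    """Pass a payload along the steps; each receiving role transforms it."""
    payload = initial
    trace = []
    for step in steps:
        if step.receiver not in handlers:
            raise KeyError(f"no peer has taken the role {step.receiver!r}")
        payload = handlers[step.receiver](payload)
        trace.append(f"{step.sender} -> {step.receiver}: {step.message}")
    return payload, trace


# Each partner supplies only the behaviour for the role it has agreed to play.
HANDLERS = {
    "analyst": lambda rows: sum(rows) / len(rows),
    "presenter": lambda mean: f"Average value: {mean:.1f}",
    "manager": lambda report: report.upper(),
}

result, trace = run_interaction(INTERACTION, HANDLERS, [2, 4, 6])
```

The point of the sketch is the separation it makes: the interaction itself is plain data that could be shared and reused, while each partner plugs in its own implementation of a role.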

The researchers also found a way to deal with the fact that the same step in a process is likely to be labelled in different ways by different components of the system.

For example, a handheld device might use an asterisk to signal that it is about to send a number while the database where that number is needed might expect to receive an input labelled “price.”

System engineers often approach this semantic problem by building what are called global ontologies – essentially dictionaries that specify the labels and properties of all the objects or events within a system.

In situations where such rules of interaction already exist, OpenKnowledge will find and use them.

Most of the time, however, that approach will not work because there is no way of knowing in advance what devices or systems will be interacting in a particular exchange.

In that case, OpenKnowledge uses statistical regularities to build a much smaller dictionary that defines only the steps that are needed for the purpose at hand.

“You know that you’re at some specific point,” says Robertson, “and you look to see what other people were doing at the same point. As the system gets used, you have a lot of interactions, possibly thousands or millions. That’s where your mapping comes from.”
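As a rough illustration of this usage-based mapping, the sketch below counts which receiver label each sender label was paired with at a given step in past interactions, then proposes the most frequent pairing. It is a simplified stand-in, not the project's matching algorithm; the `LabelMapper` class, the step name `send_quote` and the sample observations are assumptions made for the example.

```python
from collections import Counter, defaultdict
from typing import Optional, Tuple


class LabelMapper:
    """Learns label mappings from how labels were paired in past interactions."""

    def __init__(self) -> None:
        # (step, sent_label) -> Counter of receiver labels seen at that step
        self._counts = defaultdict(Counter)

    def observe(self, step: str, sent_label: str, received_label: str) -> None:
        """Record one past interaction in which the two labels were paired."""
        self._counts[(step, sent_label)][received_label] += 1

    def best_match(self, step: str, sent_label: str) -> Optional[Tuple[str, float]]:
        """Return the most frequent receiver label and its share of observations."""
        counts = self._counts.get((step, sent_label))
        if not counts:
            return None  # nothing observed yet for this step and label
        label, hits = counts.most_common(1)[0]
        return label, hits / sum(counts.values())


mapper = LabelMapper()
# Hypothetical history: a handheld's "*" was usually consumed as "price".
for received in ["price", "price", "quantity", "price"]:
    mapper.observe("send_quote", "*", received)

match = mapper.best_match("send_quote", "*")
```

Because the dictionary is keyed by the step in the interaction, it only ever covers labels that have actually been used at that point, which keeps it far smaller than a global ontology.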

But can I trust you?

Like anyone using the internet, OpenKnowledge clients are vulnerable. For example, a partner might provide poor-quality services, or not be who they claim to be.

The researchers believe they have solved that problem to some degree by building measures of reputation into their software package. One approach is to measure how often interactions with a potential partner have gone well. Another is to see how often that partner has interacted with other trustworthy partners.

“We do exactly the same things that are used to rate web pages, but with these more complicated forms of information,” says Robertson.
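The two measures described above can be sketched in a few lines of Python. The first function is a plain success ratio; the second is a generic link-analysis iteration in the style used to rank web pages, applied to a graph of which peers have interacted with which. Both functions, the damping value and the sample graph are illustrative assumptions, not the OpenKnowledge kernel's actual reputation code.

```python
from typing import Dict, List, Sequence


def success_rate(outcomes: Sequence[bool]) -> float:
    """Fraction of past interactions with a peer that went well."""
    return sum(outcomes) / len(outcomes) if outcomes else 0.0


def link_reputation(
    links: Dict[str, List[str]],
    damping: float = 0.85,
    iterations: int = 50,
) -> Dict[str, float]:
    """Score peers by who interacts with them, weighted by those peers' own scores.

    `links[p]` lists the peers that p has chosen to interact with.
    """
    peers = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(peers)
    score = {p: 1.0 / n for p in peers}
    for _ in range(iterations):
        new = {p: (1.0 - damping) / n for p in peers}
        for p in peers:
            # A peer with no outgoing links spreads its score evenly.
            targets = links.get(p) or peers
            share = damping * score[p] / len(targets)
            for t in targets:
                new[t] += share
        score = new
    return score


# Hypothetical history: peers "a" and "b" both rely on "c"; "c" relies on "a".
scores = link_reputation({"a": ["c"], "b": ["c"], "c": ["a"]})
rate = success_rate([True, True, False, True])
```

In this toy graph, "c" ends up with the highest score because two peers rely on it, and "a" outranks "b" because the well-regarded "c" relies on it in turn.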

All of the key OpenKnowledge functions – discovering and interpreting interactions, ontology matching, and reputation checking – reside in the OpenKnowledge kernel, an open-source software package that can be downloaded from the project’s website.

Robertson and his colleagues have tested OpenKnowledge in three real-world areas: healthcare coordination, proteomics research, and emergency response. These applications will be covered in a subsequent ICT Results feature on 29 December.

In the meantime, they are eager for others to use OpenKnowledge to unlock the cloud’s capabilities and choreograph their own ideas.

“It will only become revolutionary,” Robertson writes, “if clever people invent interactions that are really useful for lots of other people.”

The OpenKnowledge project received funding from the ICT strand of the EU’s Sixth Framework Programme for research.

This is the first of a two-part feature on OpenKnowledge.

Media Contact

All latest news from the category: Information Technology

Here you can find a summary of innovations in the fields of information and data processing and up-to-date developments on IT equipment and hardware.

This area covers topics such as IT services, IT architectures, IT management and telecommunications.

Newest articles

Combatting disruptive ‘noise’ in quantum communication

In a significant milestone for quantum communication technology, an experiment has demonstrated how networks can be leveraged to combat disruptive ‘noise’ in quantum communications. The international effort led by researchers…

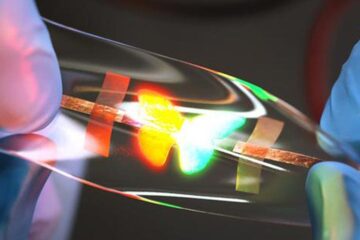

Stretchable quantum dot display

Intrinsically stretchable quantum dot-based light-emitting diodes achieved record-breaking performance. A team of South Korean scientists led by Professor KIM Dae-Hyeong of the Center for Nanoparticle Research within the Institute for…

Internet can achieve quantum speed with light saved as sound

Researchers at the University of Copenhagen’s Niels Bohr Institute have developed a new way to create quantum memory: A small drum can store data sent with light in its sonic…