Researchers give high marks to new technology for fingerprint identification

Prototype systems evaluated by NIST performed surprisingly well for a developing technology: half of the prototypes were accurate at least 80 percent of the time and one had a near-perfect score. Automating the manual portion of the work frees trained examiners to spend their time on very difficult images that the software has little hope of processing.

As any TV crime series fan knows, latent prints are left behind any time someone touches something. While ubiquitous, “latents” often include only part of the finger—maybe just a few ridges—and sometimes are left on textured materials, adding even more challenges.

To identify the owner, a fingerprint examiner must first carefully mark the distinguishing features of the full or partial print, beginning with the positions where ridges end or branch. Then the latent is entered into a counter-terrorist or law enforcement identification system such as the Federal Bureau of Investigation’s Integrated Automated Fingerprint Identification System (IAFIS). The FBI’s system compares latents against the 55 million sets of ten-print cards taken at arrest.

IAFIS was a significant advance. Now the manual mark-up portion of latent fingerprint identification is being automated with an emerging technology called Automatic Feature Extraction and Matching (AFEM). NIST biometric researchers assessed prototypes that eight vendors are developing.

In the evaluation, researchers used a data set of 835 latent prints and 100,000 fingerprints that have been used in real case examinations.

The AFEM software extracted the distinguishing features of the latent prints, then compared them against 100,000 fingerprints. For each print the software provided a list of 50 candidates that the fingerprint specialists compared by hand. Most identities were found within the top 10.
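One way to read "most identities were found within the top 10" is as a hit rate at a given rank: the share of latent prints whose true mate appears among the first k candidates returned. The sketch below illustrates that calculation only. The function name, the identifiers and the toy data are all hypothetical, and it is not taken from the NIST report or from any vendor's software.

```python
from typing import Dict, List


def hit_rate_at_k(candidates: Dict[str, List[str]],
                  truth: Dict[str, str],
                  k: int) -> float:
    """Fraction of latent prints whose true mate is in the top k candidates.

    candidates maps a latent ID to its ranked candidate list (best first).
    truth maps a latent ID to the ID of the print that really matches it.
    """
    if not truth:
        raise ValueError("no latent prints to score")
    hits = sum(
        1
        for latent_id, mate in truth.items()
        if mate in candidates.get(latent_id, [])[:k]
    )
    return hits / len(truth)


# Made-up example: four latent prints, three candidates each.
# The evaluation described above used lists of 50 candidates.
candidates = {
    "latent_a": ["p7", "p2", "p9"],
    "latent_b": ["p4", "p1", "p3"],
    "latent_c": ["p5", "p6", "p8"],
    "latent_d": ["p1", "p3", "p2"],
}
truth = {
    "latent_a": "p7",
    "latent_b": "p3",
    "latent_c": "p0",  # true mate never appears in the list
    "latent_d": "p3",
}

for k in (1, 2, 3):
    print(f"hit rate within top {k}: {hit_rate_at_k(candidates, truth, k):.2f}")
```

Scoring at several values of k shows how much of the human comparison work a shorter candidate list would still cover.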

In order of performance, the most accurate prototypes were furnished by NEC Corp., Cogent Inc., SPEX Forensics, Inc., Motorola, Inc. and L1 Identity Solutions. Results ranged from nearly 100 percent for the most accurate product to around 80 percent for the last three listed.

For all prototypes, the evaluations also showed a strong correlation between the number of distinguishing features in a latent print and the likelihood that it could be matched. They also showed that the quality of the image data strongly influences accuracy.

“While the testing has demonstrated accuracy beyond pre-test expectations, the potential of the technology remains undefined and further testing is required,” said computer scientist Patrick Grother. “In the future we will look at lower quality latent images to understand the technology’s limitations and we will support development of a standardized feature set that extends the one currently used by examiners for searches.”

The research was funded by the Department of Homeland Security’s Science and Technology Directorate and the FBI’s Criminal Justice Information Services Division. The report, An Evaluation of Automated Latent Fingerprint Identification Technologies, is available at http://fingerprint.nist.gov/latent/NISTIR_7577_ELFT_PhaseII.pdf

Media Contact

All latest news from the category: Information Technology

Here you can find a summary of innovations in the fields of information and data processing and up-to-date developments on IT equipment and hardware.

This area covers topics such as IT services, IT architectures, IT management and telecommunications.

Newest articles

Superradiant atoms could push the boundaries of how precisely time can be measured

Superradiant atoms can help us measure time more precisely than ever. In a new study, researchers from the University of Copenhagen present a new method for measuring the time interval,…

Ion thermoelectric conversion devices for near room temperature

The electrode sheet of the thermoelectric device consists of ionic hydrogel, which is sandwiched between the electrodes to form, and the Prussian blue on the electrode undergoes a redox reaction…

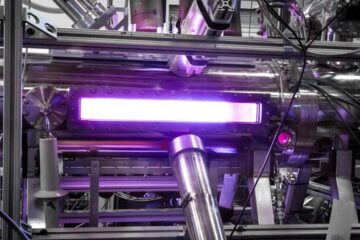

Zap Energy achieves 37-million-degree temperatures in a compact device

New publication reports record electron temperatures for a small-scale, sheared-flow-stabilized Z-pinch fusion device. In the nine decades since humans first produced fusion reactions, only a few fusion technologies have demonstrated…