Personal data protection vital to future civil liberties

Product miniaturisation is fast reaching the level where tiny, intelligent devices can be embedded into virtually any part of our environment. This is the era of ambient intelligence (AmI), in which micro-electro-mechanical system (MEMS) sensors no larger than a grain of sand will be capable of detecting everything from light to vibrations. In an environment where continuous communication surrounds everything we do and everywhere we go, how can our security, personal privacy and civil liberties be protected? These are the issues that the partners in the IST project SWAMI set out to examine.

Ambient intelligence is believed to hold considerable promise for Europe’s economy. Hundreds of millions of euros have already been spent on AmI research, and this high level of spending is expected to continue. While most AmI scenarios paint the future in sunny colours, there is a dark side to AmI.

“Most people would be shocked to find out just how much information they consider private is already in the public domain,” says project information coordinator David Wright of Trilateral Research & Consulting in London. “Thanks to data aggregators that gather and consolidate a wide range of information about groups – and individuals – in society, our government and commercial organisations already know a great deal about what we do and what motivates us.”

To examine the potential threat to our personal liberties, the SWAMI partners created a number of “dark scenarios” for analysis: everyday situations that show how personal data could be misused in a future of intelligent environments. These dark scenarios offer visions of a potential future in which safeguards for personal data are inadequate or have not been put in place.

In scenario 1, a man goes off to work at the security company that employs him, while at home his son exploits the technology on his father’s computer, with all its sophisticated investigative capabilities.

In scenario 2, senior citizens on a bus tour from Italy into Germany are involved in a collision caused by a malfunctioning AmI traffic control system. The malfunction was the work of a hacker who gained access to the control system and turned all the traffic lights to green.

Scenario 3 deals with a data-aggregation company that suffers a theft of the personal AmI-generated data that fuels its core business. Holding a dominant position in the market, the company tries to cover up the theft, but two years later it is taken to court by the individuals affected. The scenario focuses on the boardroom discussions that take place as management tries to decide what to do.

Scenario 4 portrays AmI society as high-risk, as seen from the studio of a morning news programme. The scenario covers an action group fighting against personal profiling, the global digital divide and related environmental concerns, and the potential vulnerabilities of crowd management and traffic control systems in an AmI environment.

According to Wright, the darkest scenario of all is the threat to our personal space. “The most disturbing aspects of this new technology are already around us today, in the steady erosion of personal privacy,” he says. “Because of threats to our society, most people are willing to compromise on their personal privacy in order to gain greater security. Yet – and this must be a serious concern – is our security actually better than before we gave up this privacy?”

He therefore believes that further safeguards for personal data are needed. The project partners drew up a lengthy list of proposed safeguards, measures they regard as fundamental in a future of ambient intelligence. They believe that legislation will be necessary at both national and European level if AmI technologies are to be implemented without endangering the fundamental liberties upon which our civilisation is built.

These safeguards need to address technical, socio-economic and legal issues. In the technical arena, for example, so-called privacy-enhancing technologies (PETs) could be built into fourth-generation mobile devices to alert users to any data-privacy risks present in their surroundings. Socio-economic safeguards will need to focus on raising user awareness of the risks, both through improved education and by encouraging journalists to report on such issues.

In the legal arena, the partners believe that present legal frameworks will not protect individual liberties in an ambient-intelligence future. Existing personal data laws and safeguards will need strengthening to meet the challenges posed by such all-encompassing technology.

More Information:

http://istresults.cordis.lu/All latest news from the category: Information Technology

Here you can find a summary of innovations in the fields of information and data processing and up-to-date developments on IT equipment and hardware.

This area covers topics such as IT services, IT architectures, IT management and telecommunications.

Newest articles

Silicon Carbide Innovation Alliance to drive industrial-scale semiconductor work

Known for its ability to withstand extreme environments and high voltages, silicon carbide (SiC) is a semiconducting material made up of silicon and carbon atoms arranged into crystals that is…

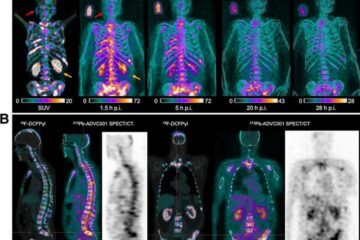

New SPECT/CT technique shows impressive biomarker identification

…offers increased access for prostate cancer patients. A novel SPECT/CT acquisition method can accurately detect radiopharmaceutical biodistribution in a convenient manner for prostate cancer patients, opening the door for more…

How 3D printers can give robots a soft touch

Soft skin coverings and touch sensors have emerged as a promising feature for robots that are both safer and more intuitive for human interaction, but they are expensive and difficult…