Gesture interface device developed by Ben-Gurion University of the Negev

Researchers at Ben-Gurion University of the Negev (BGU) in Israel have developed a new hand gesture recognition system, tested at a Washington, D.C. hospital, that enables doctors to manipulate digital images during medical procedures by motioning instead of touching a screen, keyboard or mouse, any of which can compromise sterility and spread infection, according to a newly published article.

The June article, “A Gesture-based Tool for Sterile Browsing of Radiology Images,” published in the Journal of the American Medical Informatics Association (2008;15:321-323, DOI 10.1197/jamia.M24), reports what the authors believe is the first successful implementation of a hand gesture recognition system during an actual “in vivo” neurosurgical brain biopsy. The system was tested at the Washington Hospital Center in Washington, D.C.

According to lead researcher Juan P. Wachs, a recent Ph.D. recipient from the Department of Industrial Engineering and Management at BGU, “A sterile human-machine interface is of supreme importance because it is the means by which the surgeon controls medical information while avoiding contamination of the patient, the operating room (OR) and the other surgeons. This could replace the touch screens now used in many hospital operating rooms, which must be sealed to prevent the accumulation or spread of contaminants and require smooth surfaces that must be thoroughly cleaned after each procedure, but sometimes aren't. With infection rates at U.S. hospitals now unacceptably high, our system offers a possible alternative.”

Helman Stern, a principal investigator on the project and a professor in the Department of Industrial Engineering and Management, explains that the system, called Gestix, works in two stages: “[There is] an initial calibration stage where the machine recognizes the surgeons' hand gestures, and a second stage where surgeons must learn and implement eight navigation gestures, rapidly moving the hand away from a ‘neutral area’ and back again. Gestix users even have the option of zooming in and out by moving the hand clockwise or counterclockwise.”

To avoid sending unintended signals, users can enter a “sleep” mode by dropping the hand. The gestures are captured by a Canon VC-C4 camera positioned above a large flat-screen monitor, using an Intel Pentium computer and a Matrox Standard II video-capture device.
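For readers curious how gestures like these might be decoded, here is a minimal, hypothetical Python sketch. It is not the Gestix implementation described in the JAMIA article. It assumes the hand has already been tracked as a sequence of (x, y) positions relative to the centre of the neutral area, with the y axis pointing up. All thresholds, the eight compass-direction labels, and the mapping of clockwise motion to zoom-in are assumptions made for illustration. Gesture speed is not checked.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]

# Hypothetical thresholds in normalised image units; not from the Gestix paper.
NEUTRAL_RADIUS = 0.1      # gesture must end within this distance of the neutral-area centre
SWIPE_MIN = 0.3           # minimum excursion for a navigation gesture
SLEEP_Y = -0.5            # hand dropped below this height -> sleep mode
ROTATION_MIN_AREA = 0.05  # minimum enclosed area for a circular (zoom) motion

# Eight assumed navigation directions, counterclockwise from "right" in 45-degree sectors.
DIRECTIONS = ["right", "up-right", "up", "up-left",
              "left", "down-left", "down", "down-right"]


def signed_area(path: List[Point]) -> float:
    """Shoelace formula: positive for counterclockwise paths (y axis up)."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(path, path[1:] + path[:1]):
        area += x0 * y1 - x1 * y0
    return area / 2.0


def classify(path: List[Point], centre: Point = (0.0, 0.0)) -> str:
    """Map one tracked hand trajectory to a hypothetical command label."""
    cx, cy = centre
    rel = [(x - cx, y - cy) for x, y in path]

    # Dropping the hand puts the interface to sleep.
    if rel[-1][1] < SLEEP_Y:
        return "sleep"

    # A loop enclosing enough area is read as a rotation (zoom).
    area = signed_area(rel)
    if abs(area) >= ROTATION_MIN_AREA:
        return "zoom-out" if area > 0 else "zoom-in"  # direction mapping assumed

    # Otherwise look for an excursion away from the neutral area and back again.
    far = max(rel, key=lambda p: math.hypot(p[0], p[1]))
    returned = math.hypot(rel[-1][0], rel[-1][1]) <= NEUTRAL_RADIUS
    if math.hypot(far[0], far[1]) >= SWIPE_MIN and returned:
        angle = math.atan2(far[1], far[0]) % (2 * math.pi)
        sector = int((angle + math.pi / 8) // (math.pi / 4)) % 8
        return DIRECTIONS[sector]
    return "none"


if __name__ == "__main__":
    swipe_right = [(0.0, 0.0), (0.2, 0.0), (0.4, 0.0), (0.2, 0.0), (0.0, 0.0)]
    print(classify(swipe_right))  # right
```

Checking for sleep first means a hand that simply drops out of view is never misread as a downward navigation gesture. A real system would also need the calibration stage Stern describes before any trajectory could be tracked; that step is not modelled here.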

The project lasted two years. In the first year, Juan Wachs worked at IMI (Washington, D.C.) as an informatics fellow on the development of the system. During the second year, under a contract between BGU and WHC (Washington Hospital Center) that has since ended, Wachs continued working at BGU with Professors Helman Stern and Yael Edan, the project's principal investigators.

At BGU, several M.Sc. theses supervised by Prof. Helman Stern and Prof. Yael Edan have used hand gesture recognition as part of an interface to evaluate how different aspects of interface design affect performance in a variety of tele-robotic and tele-operated systems. Ongoing research aims to extend this work with additional control modes (e.g., voice) to create a multimodal telerobotic control system.

In addition, Dr. Tal Oron and her students are currently using the gesture system to evaluate human performance measures. Further research, based on video motion capture, is being conducted by Prof. Helman Stern and Dr. Tal Oron of the Dept. of Industrial Engineering and Management and Dr. Amir Shapiro of the Dept. of Mechanical Engineering. This system, combined with a tactile body display, is intended to help visually impaired people sense their surroundings.

More Information:

http://web.bgu.ac.il/homeAll latest news from the category: Information Technology

Here you can find a summary of innovations in the fields of information and data processing and up-to-date developments on IT equipment and hardware.

This area covers topics such as IT services, IT architectures, IT management and telecommunications.

Newest articles

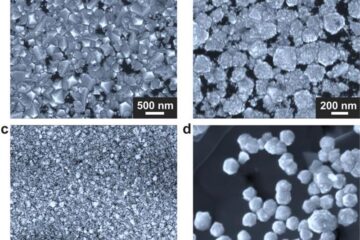

Making diamonds at ambient pressure

Scientists develop novel liquid metal alloy system to synthesize diamond under moderate conditions. Did you know that 99% of synthetic diamonds are currently produced using high-pressure and high-temperature (HPHT) methods?[2]…

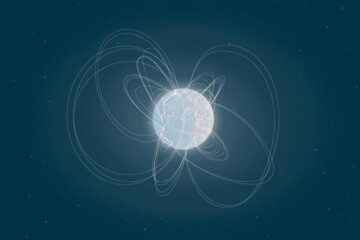

Eruption of mega-magnetic star lights up nearby galaxy

Thanks to ESA satellites, an international team including UNIGE researchers has detected a giant eruption coming from a magnetar, an extremely magnetic neutron star. While ESA’s satellite INTEGRAL was observing…

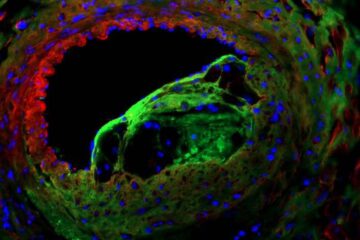

Solving the riddle of the sphingolipids in coronary artery disease

Weill Cornell Medicine investigators have uncovered a way to unleash in blood vessels the protective effects of a type of fat-related molecule known as a sphingolipid, suggesting a promising new…