The invisible network

At one time there was no choice. If you wanted to speak to someone you picked up the phone on the desk and called them. Today, you can also use a mobile phone, which could be either GSM or 3G.

Or you could use VoIP from your desktop PC to route the call over the internet. You could do the same with your laptop. And your internet connection could use ADSL, cable, Wi-Fi, 3G or even WiMAX. And then there’s your PDA…

We have never had more choice in how to communicate, but neither have we had so many head-spinning acronyms. Wouldn’t it be better if we had one mobile device that could find its own way to set up a call from A to B?

That is the vision of E2RII, an EU-funded project that pulled together 32 organisations in 14 countries to plan a future where such things are possible.

“Most users don’t care about the technology, what they care about is communicating,” says project coordinator Dr Didier Bourse of Motorola Labs near Paris. “You may be in different environments – at home, in the office, on a train, and so on – but what you want is to be connected and to enjoy a seamless experience. At the same time, network operators want to make the best of their networks and use them as efficiently as possible.”

Intelligent phones

The partners call it ‘end-to-end connectivity’. To achieve it, the exchanges, routers and other hardware between A and B must be able to adapt to several different technologies, hence the principle of ‘end-to-end reconfigurability’ (E2R) which gives the project its name.

E2RII was the second phase of a series of projects that began with E2R itself, which ran between 2004 and 2005. The partners exploited concepts of ‘software-defined radio’, where many functions that are normally hard-wired can be done in software, and ‘cognitive radio’ and ‘cognitive networks’, where communication nodes become more and more intelligent and reconfigurable.

“The idea is to guarantee end-to-end connectivity,” says Bourse. “We are looking both at terminals – such as a phone – and networks. Terminals will be more and more intelligent, so one of the key challenges was to define how in future we will split the intelligence and functionality between the network and the terminal. What do you need on the network side to make these different technologies work together and how far can you distribute the intelligence to the edges?”

Communications cube

At present, most of the intelligence lies in the network. As you travel across Europe with your mobile phone, the local network automatically locates you, routes your calls and then hands you over to the neighbouring network. This is known as ubiquitous access.

In the medium term, the watchword is ‘pervasive services’. “You buy your device and you can update the software, like a PC, but over the air,” Bourse says. “The device can evolve to cope with new technologies, so you can access new services. Developers or vendors will be able to modify the communications standards of equipment without having to invest in a new hardware design.”

Further ahead lies ‘dynamic and flexible resource management’. Bourse asks us to picture a cube – the ‘communications cube’ – where one side represents radio frequency, a second side represents the range of radio technologies available and a third side maps all the possible services.

Today’s devices operate at only a few points within the cube. At any one time, your mobile phone will use a given frequency (perhaps 900 or 1800 MHz), a technology (say, GSM) and a service (such as voice or text). In future, Bourse envisages systems that potentially could use the entire volume of the cube, selecting whatever frequency, technology and service is available to get your message across efficiently. And you won’t even know it’s happening.
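To make the cube a little more concrete, here is a minimal Python sketch of the idea, not anything taken from E2RII itself. Each point in the cube is a combination of frequency, technology and service, and a device picks the cheapest point currently available for the service it needs. The axis values, the `CubePoint` class, the `choose_point` function and the cost figures are all assumptions invented for illustration.

```python
from dataclasses import dataclass
from itertools import product
from typing import Callable, Iterable, Optional


@dataclass(frozen=True)
class CubePoint:
    """One point in the (hypothetical) communications cube."""
    frequency_mhz: int
    technology: str
    service: str


# Illustrative axis values only; a real cube would be far larger.
FREQUENCIES_MHZ = (900, 1800, 2100, 2400)
TECHNOLOGIES = ("GSM", "3G", "Wi-Fi")
SERVICES = ("voice", "text")


def cube_points() -> list[CubePoint]:
    """Enumerate every combination along the three axes."""
    return [
        CubePoint(f, t, s)
        for f, t, s in product(FREQUENCIES_MHZ, TECHNOLOGIES, SERVICES)
    ]


def choose_point(
    service: str,
    available: Iterable[CubePoint],
    cost: Callable[[CubePoint], float],
) -> Optional[CubePoint]:
    """Return the lowest-cost available point offering the service, or None."""
    candidates = [p for p in available if p.service == service]
    if not candidates:
        return None
    return min(candidates, key=cost)


# Assumed situation: only two points of the cube are reachable right now.
available_now = [
    CubePoint(900, "GSM", "voice"),
    CubePoint(2400, "Wi-Fi", "voice"),
]

# Assumed relative cost per technology (lower is better).
COST_BY_TECHNOLOGY = {"GSM": 3.0, "3G": 2.0, "Wi-Fi": 1.0}

best = choose_point(
    "voice", available_now, lambda p: COST_BY_TECHNOLOGY[p.technology]
)
print(best)
```

Today’s phone, in these terms, is a device whose `available_now` list is fixed in advance; the systems Bourse describes would rebuild that list continuously and choose again without the user noticing.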

Influence on standards

Of course, there are many obstacles to overcome first, not least the present rather rigid allocation of radio spectrum. E2RII included telecom regulators amongst its partners, alongside businesses and universities, to ensure that its innovative technical concepts and solutions made regulatory as well as business sense.

There were even partners in India, China and Singapore, to bring in needed skills and help build a wider consensus on the way ahead. The project also worked with similar initiatives in North America and Japan.

The partners have developed many proposals for equipment, network management and applications. They made more than 450 contributions to conferences, journals and workshops and the effects of the project are already being felt through its input into European and worldwide standards. Some reconfigurable products influenced by E2RII thinking are starting to appear.

Although E2RII finished at the end of 2007, its work is now being carried on by a project called E3 – End-to-End Efficiency – which seeks to build on the concept of cognitive radio systems to make the best use of the communications cube. As Bourse says, “The ultimate goal is really to make the system much more efficient.”

E2RII is one of five large integrated projects in the EU’s Wireless World Initiative and was supported by the Sixth Framework Programme for research.

Media Contact

All latest news from the category: Information Technology

Here you can find a summary of innovations in the fields of information and data processing and up-to-date developments on IT equipment and hardware.

This area covers topics such as IT services, IT architectures, IT management and telecommunications.

Newest articles

Superradiant atoms could push the boundaries of how precisely time can be measured

Superradiant atoms can help us measure time more precisely than ever. In a new study, researchers from the University of Copenhagen present a new method for measuring the time interval,…

Ion thermoelectric conversion devices for near room temperature

The electrode sheet of the thermoelectric device consists of ionic hydrogel, which is sandwiched between the electrodes to form, and the Prussian blue on the electrode undergoes a redox reaction…

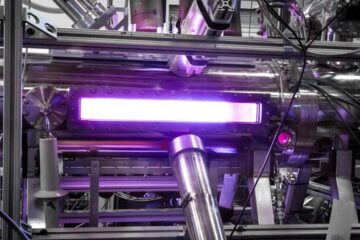

Zap Energy achieves 37-million-degree temperatures in a compact device

New publication reports record electron temperatures for a small-scale, sheared-flow-stabilized Z-pinch fusion device. In the nine decades since humans first produced fusion reactions, only a few fusion technologies have demonstrated…