New, Fast Computing Simulation Tool Nets Best Paper Award

Shukla, a 2004 recipient of a Presidential Early Career Award for Scientists and Engineers (PECASE) and a 2008 recipient of the Friedrich Wilhelm Bessel Award from the Humboldt Foundation of Germany, wrote the paper with his current Ph.D. students, Mahesh Nanjundappa and Bijoy A. Jose, also of Virginia Tech, and a past Ph.D. advisee, Hiren D. Patel, who is now an ECE assistant professor at the University of Waterloo in Canada. http://www.ece.vt.edu/faculty/shukla.php

Shukla and his collaborators said that they were able to demonstrate how to speed up the simulation performance of certain SystemC-based hardware models “by exploiting the high degree of parallelism afforded by today’s general purpose graphic processor units (GPGPU).” These units have multiple processor cores capable of very high computation and data throughput. Parallelism means that the processor units can run various parts of a simulation simultaneously, rather than as a single sequence of computations. Their experiments were carried out on an NVIDIA Tesla 870 with 256 processing cores. This equipment was donated to Shukla’s lab by NVIDIA during fall 2008.

Shukla said their preliminary experiments showed they were able to speed up SystemC-based simulation by factors of 30 to 100 over previous performance.

They named their simulation infrastructure SCGPSim. The Air Force Office of Scientific Research and the National Science Foundation helped support this research.

In the past, Shukla said, “significant effort was aimed at improving the performance of SystemC simulations, but little had been directed at making them operate in parallel. And none of the attempts were ever targeted at a massively parallel platform such as a general purpose graphic processor unit.”

Another aspect of their work was the use of a specific programming model called Compute Unified Device Architecture (CUDA). It is an extension to the C programming language that “exploits the processing power of graphic processor units to solve complex compute-intensive problems efficiently,” Shukla explained. “High performance is achieved by launching a number of threads and making each thread execute a part of the application in parallel.”

The CUDA execution model differs from the more commonly known central processing unit (CPU) based execution in terms of how the threads are scheduled. With CUDA, it is possible to have all of the threads execute simultaneously on separate processor cores and intermittently converge on the same path, thus increasing the efficiency.
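To make the thread model described above more concrete, here is a minimal sketch of the general pattern: a small function (the "kernel") is written for one logical thread, and a launcher runs it once for every thread in a grid of blocks, with each thread deriving the single element it is responsible for from its block and thread indices. This is a CPU-side analogy written in Python, not CUDA code and not the SCGPSim implementation. The names `saxpy_kernel`, `launch`, and `saxpy`, and the block size of 4, are assumptions chosen for illustration.

```python
from concurrent.futures import ThreadPoolExecutor


def saxpy_kernel(block_idx, thread_idx, block_dim, a, x, y, out):
    """Work done by one logical thread: compute a single output element."""
    # Global index built from block and thread indices, mirroring the
    # indexing convention commonly used in CUDA kernels.
    i = block_idx * block_dim + thread_idx
    # Guard: the last block may contain threads with no element to process.
    if i < len(x):
        out[i] = a * x[i] + y[i]


def launch(kernel, grid_dim, block_dim, *args):
    """Hypothetical stand-in for a kernel launch: run every (block, thread) pair."""
    with ThreadPoolExecutor() as pool:
        futures = [
            pool.submit(kernel, b, t, block_dim, *args)
            for b in range(grid_dim)
            for t in range(block_dim)
        ]
        for f in futures:
            f.result()  # surface any exception raised inside a worker


def saxpy(a, x, y, block_dim=4):
    """Compute a * x + y element by element, one logical thread per element."""
    out = [0.0] * len(x)
    grid_dim = (len(x) + block_dim - 1) // block_dim  # ceiling division
    launch(saxpy_kernel, grid_dim, block_dim, a, x, y, out)
    return out
```

Calling `saxpy(2.0, [1.0, 2.0, 3.0], [10.0, 10.0, 10.0])` returns `[12.0, 14.0, 16.0]`. On a graphics processor the logical threads would be mapped onto hardware cores and run at the same time. The thread pool here only imitates the structure of such a launch and is not expected to show a comparable speedup.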

The work at Virginia Tech was conducted in the Formal Engineering Research with Models, Abstractions and Transformations (FERMAT) Laboratory, founded by Shukla in 2002. Its focus is on designing, analyzing and predicting the performance of electronic systems, particularly systems embedded in automated systems. http://www.fermat.ece.vt.edu/

“Speeding up simulation of complex hardware models is extremely important for the semiconductor electronics industry to produce newer and newer products in shorter times, thus improving the quality of computing and consumer electronics products faster. If such models can be simulated 10 times faster, then if validating a model took 10 days in the past, now it would take one day. This is why faster simulation performance probably attracted the attention of the ASP-DAC ’10 awards committee,” Shukla said.

ASP-DAC is one of three conferences on electronic design automation sponsored by the IEEE Circuits and Systems Society and the ACM Special Interest Group on Design Automation. The three conferences are held every year in the U.S. (DAC), in Europe (DATE) and in the Asia-Pacific region (ASP-DAC).

Virginia Tech’s College of Engineering is internationally recognized for its excellence in 14 engineering disciplines and computer science. The college is the nation’s third largest producer of engineers with baccalaureate degrees, and its undergraduates benefit from an innovative curriculum that provides a hands-on, minds-on approach to engineering education. The curriculum complements classroom instruction with two unique design-and-build facilities and a strong Cooperative Education Program. With more than 50 research centers and numerous laboratories, the college offers its 2,000 graduate students opportunities in advanced fields of study, including biomedical engineering, state-of-the-art microelectronics, and nanotechnology. http://www.eng.vt.edu/main/index.php

Media Contact

All latest news from the category: Information Technology

Here you can find a summary of innovations in the fields of information and data processing and up-to-date developments on IT equipment and hardware.

This area covers topics such as IT services, IT architectures, IT management and telecommunications.

Newest articles

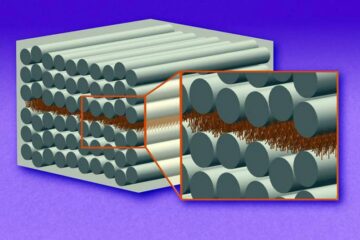

“Nanostitches” enable lighter and tougher composite materials

In research that may lead to next-generation airplanes and spacecraft, MIT engineers used carbon nanotubes to prevent cracking in multilayered composites. To save on fuel and reduce aircraft emissions, engineers…

Trash to treasure

Researchers turn metal waste into catalyst for hydrogen. Scientists have found a way to transform metal waste into a highly efficient catalyst to make hydrogen from water, a discovery that…

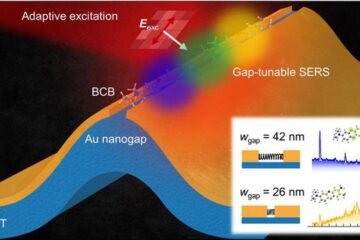

Real-time detection of infectious disease viruses

… by searching for molecular fingerprinting. A research team consisting of Professor Kyoung-Duck Park and Taeyoung Moon and Huitae Joo, PhD candidates, from the Department of Physics at Pohang University…