Conquering the chaos in modern, multiprocessor computers

Unfortunately, that is far from the case with many of today's machines. Beneath their smooth exteriors, modern computers behave in wildly unpredictable ways, said Luis Ceze, a UW assistant professor of computer science and engineering.

“With older, single-processor systems, computers behave exactly the same way as long as you give the same commands. Today's computers are non-deterministic. Even if you give the same set of commands, you might get a different result,” Ceze said.

He and UW associate professors of computer science and engineering Mark Oskin and Dan Grossman and UW graduate students Owen Anderson, Tom Bergan, Joseph Devietti, Brandon Lucia and Nick Hunt have developed a way to get modern, multiple-processor computers to behave in predictable ways, by automatically parceling sets of commands and assigning them to specific places. Sets of commands get calculated simultaneously, so the well-behaved program still runs faster than it would on a single processor.
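To make the idea of parceling commands concrete, here is a toy sketch in Python. It is not the UW group's implementation, and every name in it (`run_parcels`, `parcels`, `n_workers`) is hypothetical. It assumes one simple way of getting repeatable results: each parcel of work is assigned to a fixed worker, workers compute in parallel into private buffers, and the buffered results are committed to shared state in a fixed order, so timing differences between threads cannot change the outcome.

```python
import random
import threading
import time


def run_parcels(parcels, n_workers=2):
    """Run parcels in parallel rounds and commit results in a fixed order.

    Hypothetical illustration only: each parcel is a zero-argument function.
    Within a round, parcel i is always handled by worker i, and results are
    folded into the shared value in worker-id order.
    """
    shared = 0
    for start in range(0, len(parcels), n_workers):
        batch = parcels[start:start + n_workers]
        buffers = [None] * len(batch)  # one private slot per worker

        def work(worker_id, parcel):
            # Random delay stands in for unpredictable hardware timing.
            time.sleep(random.uniform(0, 0.005))
            buffers[worker_id] = parcel()

        threads = [
            threading.Thread(target=work, args=(i, p))
            for i, p in enumerate(batch)
        ]
        for t in threads:
            t.start()
        for t in threads:
            t.join()

        # Commit phase: the order depends on worker id, never on which
        # thread happened to finish first. The fold is order-sensitive
        # on purpose, so any reordering would change the result.
        for value in buffers:
            shared = (shared * 31 + value) % 1_000_003
    return shared


parcels = [lambda n=n: n * n for n in range(1, 9)]
results = {run_parcels(parcels) for _ in range(5)}
print(len(results))  # number of distinct outcomes across five runs
```

The parallel phase still overlaps the work of several threads, which is why a scheme along these lines can remain faster than running everything on one processor.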

Next week at the International Conference on Architectural Support for Programming Languages and Operating Systems (http://www.ece.cmu.edu/CALCM/asplos10/doku.php) in Pittsburgh, Bergan will present a software-based version of this system that could be used on existing machines. It builds on a more general approach the group published last year, which was recently chosen as a top paper for 2009 by the Institute of Electrical and Electronics Engineers' journal Micro.

In the old days one computer had one processor. But today's consumer standard is dual-core processors, and even quad-core machines are appearing on store shelves. Supercomputers and servers can house hundreds, even thousands, of processing units.

On the plus side, this design creates computers that run faster, cost less and use less power than a single processor delivering the same performance. On the other hand, multiple processors are responsible for elusive errors that freeze Web browsers and crash programs.

It is not so different from the classic chaos problem in which a butterfly flaps its wings in one place and can cause a hurricane across the globe. Modern shared-memory computers have to shuffle tasks from one place to another. The speed at which the information travels can be affected by tiny changes, such as the distance between parts in the computer or even the temperature of the wires. Information can thus arrive in a different order and lead to unexpected errors, even for tasks that ran smoothly hundreds of times before.
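A small Python simulation shows how arrival order alone can change a result. This is an illustrative sketch, not code from the research: the names `run` and `all_schedules` are made up for this example. It models two threads that each add one to a shared counter in two steps (read, then write back) and tries every possible ordering of those four steps.

```python
from itertools import combinations


def run(schedule):
    """Execute one interleaving of two threads incrementing a shared counter.

    Each thread takes two steps: read the counter into a private register,
    then write register + 1 back. `schedule` lists which thread (0 or 1)
    takes each of the four steps.
    """
    shared = 0
    register = {0: None, 1: None}
    steps_done = {0: 0, 1: 0}
    for tid in schedule:
        if steps_done[tid] == 0:
            register[tid] = shared       # step 1: read
        else:
            shared = register[tid] + 1   # step 2: write back
        steps_done[tid] += 1
    return shared


def all_schedules():
    """Yield every ordering in which each thread takes exactly two steps."""
    for slots in combinations(range(4), 2):
        yield tuple(0 if i in slots else 1 for i in range(4))


outcomes = {schedule: run(schedule) for schedule in all_schedules()}
distinct_results = sorted(set(outcomes.values()))
print(distinct_results)  # [1, 2]
```

The counter ends at 2 only when one thread finishes both steps before the other starts. In the four orderings where the reads overlap, one increment is lost and the counter ends at 1, even though both threads ran exactly the same commands.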

“With multi-core systems the trend is to have more bugs because it's harder to write code for them,” Ceze said. “And these concurrency bugs are much harder to get a handle on.”

One application of the UW system is to make errors reproducible, so that programs can be properly tested.

“We've developed a basic technique that could be used in a range of systems, from cell phones to data centers,” Ceze said. “Ultimately, I want to make it really easy for people to design high-performing, low-energy and secure systems.”

Last year Ceze, Oskin, and Peter Godman, a former director at Isilon Systems, founded a company to commercialize their technology. PetraVM (http://petravm.com/) is named after the Greek word for rock because it hopes to develop “rock-solid systems,” Ceze said. The Seattle-based startup will soon release its first product, Jinx, which makes any errors that are going to crop up in a program happen quickly.

“We can compress the effect of thousands of people using a program into a few minutes during the software's development,” Ceze said. “We want to allow people to write code for multi-core systems without going insane.”

The company already has some big-name clients trying its product, Ceze said, though it is not yet disclosing their identities.

“If this erratic behavior irritates us, as software users, imagine how it is for banks or other mission-critical applications.”

Part of this research was funded by the National Science Foundation and a Microsoft Research fellowship.

For more information, contact Ceze at 206-543-1896 or luisceze@cs.washington.edu.

More information on the research is at http://sampa.cs.washington.edu.

Media Contact

More Information:

http://www.uw.eduAll latest news from the category: Information Technology

Here you can find a summary of innovations in the fields of information and data processing and up-to-date developments on IT equipment and hardware.

This area covers topics such as IT services, IT architectures, IT management and telecommunications.

Newest articles

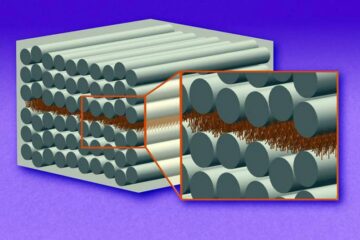

“Nanostitches” enable lighter and tougher composite materials

In research that may lead to next-generation airplanes and spacecraft, MIT engineers used carbon nanotubes to prevent cracking in multilayered composites. To save on fuel and reduce aircraft emissions, engineers…

Trash to treasure

Researchers turn metal waste into catalyst for hydrogen. Scientists have found a way to transform metal waste into a highly efficient catalyst to make hydrogen from water, a discovery that…

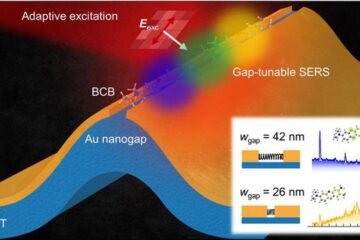

Real-time detection of infectious disease viruses

… by searching for molecular fingerprinting. A research team consisting of Professor Kyoung-Duck Park and Taeyoung Moon and Huitae Joo, PhD candidates, from the Department of Physics at Pohang University…