Booster for Next-Generation Supercomputers

Today supercomputers are an indispensable tool in almost all fields of research. However, present concepts cannot be extended indefinitely without causing an unreasonable increase in effort and costs. For this reason, scientists plan to develop a new platform for next-generation supercomputers as part of the EU DEEP project (Dynamical ExaScale Entry Platform), with applications for brain research, climatology and seismology, to name but a few.

The project will be launched this December and showcased at the world’s most important supercomputing conference, the SC’11 in Seattle, on 17 November 2011.

Even today, scientists need gigantic computing capacity to model biological organs and to develop ever more multifaceted models of the climate, the universe or the complex building blocks of matter.

To ensure that European research continues to have access to the necessary resources for high-performance computing (HPC) in future, Forschungszentrum Jülich is planning to enter the exaflop/s age by 2020 with the DEEP project, together with Intel, ParTec and 12 other European partners from 8 countries. An exaflop/s computer of this type, performing a quintillion (10^18) calculations per second, would be a thousand times faster than today’s supercomputers. The scientists expect a first prototype as early as 2014/2015 that will have a capacity of 100 petaflop/s, around one hundred times faster than today’s petaflop/s computers, such as Jülich’s Petaflop computer JUGENE.
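The performance ratios quoted above can be checked with a few lines of arithmetic. The sketch below assumes that "today's supercomputers" means machines at roughly 1 petaflop/s, as the reference to JUGENE suggests; the constant and function names are illustrative only.

```python
# Performance classes named in the text, in floating-point operations per second.
PETAFLOPS = 10**15            # assumed baseline: a 1 petaflop/s machine such as JUGENE
EXAFLOPS = 10**18             # one quintillion calculations per second
PROTOTYPE = 100 * PETAFLOPS   # capacity quoted for the 2014/2015 prototype


def speedup(target: int, baseline: int) -> float:
    """Return how many times faster `target` is than `baseline`."""
    return target / baseline


exa_vs_peta = speedup(EXAFLOPS, PETAFLOPS)
prototype_vs_peta = speedup(PROTOTYPE, PETAFLOPS)
exa_vs_prototype = speedup(EXAFLOPS, PROTOTYPE)

print(f"exaflop/s vs petaflop/s: {exa_vs_peta:.0f}x")
print(f"prototype vs petaflop/s: {prototype_vs_peta:.0f}x")
print(f"exaflop/s vs prototype:  {exa_vs_prototype:.0f}x")
```

Under that assumption, the prototype would still be a factor of ten short of the exaflop/s class.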

With the exaflop/s class, scientists will be able to tackle challenges which still seem unrealistic today, such as detailed simulation of the human brain. However, increases in performance on this scale can only be achieved by parallel computing employing millions of processors. Using today’s technology, this would mean that energy costs would become prohibitive. In order to pave the way for a viable exascale computer, researchers in the DEEP project, funded with € 8 million by the European Commission, will be optimizing the networking of different hardware components and integrating new energy-saving cooling systems.

Scientists at Jülich have designed a new type of “cluster booster architecture” for DEEP. One important element is a set of processors that are still under development and are specially designed for parallel computing: the Intel® Many Integrated Core (MIC) Architecture, with more than 50 cores on a single chip. In all, 512 of these MIC processors will be linked, via a high-speed network called Extoll developed by the University of Heidelberg, to a booster that accelerates the entire system. “Working closely with Intel helps us to accelerate the development of cluster architectures for the exascale and to address the hardware and software challenges of building, programming and operating such systems”, explains Prof. Thomas Lippert, head of the Jülich Supercomputing Centre.

The new approach takes into account the fact that large-scale, future simulations will consist of multiple and very diverse tasks with complicated communication patterns between the processors. The underlying idea: the complex components of a program are executed on the “core” of the parallel computer, a cluster with Intel Xeon server processors. In contrast, simple, highly parallel program components that do not rely on such CPUs will be offloaded to the booster modules which, thanks to their large number of more simply structured computer cores, are able to perform the calculations for tasks of this kind with far greater energy efficiency.
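The division of labour described above can be illustrated with a small scheduling sketch. It is not the DEEP software: the `Task` fields, the `assign` rule and the task names are hypothetical, chosen only to show how simple, highly parallel parts of a program might be routed to the booster while everything else stays on the cluster.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """A hypothetical program component with two coarse properties."""
    name: str
    highly_parallel: bool        # many independent, uniform operations
    complex_communication: bool  # irregular communication or control flow


def assign(task: Task) -> str:
    """Route simple, highly parallel work to the booster, the rest to the cluster."""
    if task.highly_parallel and not task.complex_communication:
        return "booster"
    return "cluster"


def partition(tasks: list[Task]) -> dict[str, list[str]]:
    """Group task names by the part of the machine they would run on."""
    plan: dict[str, list[str]] = {"cluster": [], "booster": []}
    for task in tasks:
        plan[assign(task)].append(task.name)
    return plan


# Illustrative task list (assumed, not taken from any DEEP application).
tasks = [
    Task("mesh_setup", highly_parallel=False, complex_communication=True),
    Task("stencil_update", highly_parallel=True, complex_communication=False),
    Task("global_coupling", highly_parallel=True, complex_communication=True),
]

plan = partition(tasks)
print(plan)
```

In this sketch a task that is highly parallel but also communication-heavy stays on the cluster, reflecting the idea that only the simple, highly parallel components are offloaded.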

“The close collaboration between Intel, Europe's largest scientific computer centre in Jülich and the leading cluster software vendor ParTec presents a unique opportunity to accelerate the evolution of cluster HPC platforms. Work on the novel DEEP architecture will be a key component in the understanding and development of future exascale systems, middleware and applications”, explains Stephen Pawlowski, Intel Senior Fellow and General Manager, Datacenter and Connected Systems Pathfinding.

Hugo R. Falter, Chief Operating Officer at ParTec, reports: “I am glad that the ParaStation Cluster Operating System can contribute to the success of this visionary project.” Based on an expanded version of this cluster operating system, an entire software environment for the new hardware architecture will be created with DEEP. As part of the project, in addition to tools for application developers, application software for brain research, climatology, seismology, high-temperature superconductivity and computational fluid engineering will also be transferred to the platform.

Forschungszentrum Jülich, Intel and ParTec have collaborated closely since 2010 in the ExaCluster Laboratory at Jülich on developing novel system architectures and software tools for cluster computers. The main focus is on the scalability of hardware and software up to the exascale class and on ensuring the reliability of these systems. The DEEP project was initiated under the auspices of the ExaCluster Laboratory.

Further information:

SC’11 – International Conference for High Performance Computing, Networking, Storage and Analysis:

http://www.sc11.supercomputing.org/

Research at Jülich Supercomputing Centre (JSC):

http://www.fz-juelich.de/ias/jsc/EN/Home/home_node.html

Project partners:

Forschungszentrum Jülich (DE): http://www.fz-juelich.de

Intel GmbH (DE): http://www.intel.de

ParTec Cluster Competence Center GmbH (DE): http://www.par-tec.com/

Leibniz-Rechenzentrum der Bayerischen Akademie der Wissenschaften (DE): http://www.lrz.de/

Universität Heidelberg (DE): http://www.uni-heidelberg.de

German Research School for Simulation Sciences (DE): http://www.grs-sim.de

Eurotech (IT): http://www.eurotech.com

Barcelona Supercomputing Center (ES): http://www.bsc.es

Mellanox (IL): http://www.mellanox.com/

École Polytechnique Fédérale de Lausanne (CH): http://www.epfl.ch

Katholieke Universiteit Leuven (BE): http://www.kuleuven.be

European Centre for Research and Advanced Training in Scientific Computation (FR): http://www.cerfacs.fr

Cyprus Institute (CY): http://www.cyi.ac.cy

Universität Regensburg (DE): http://www.uni-regensburg.de

CINECA (IT): http://www.cineca.it

CGGVeritas (FR): http://www.cggveritas.com

Contact:

Wolfgang Gürich

+49 2461 61-6540

w.guerich@fz-juelich.de

Press Contact:

Tobias Schlößer

+49 2461 61-4771

t.schloesser@fz-juelich.de

Forschungszentrum Jülich…

pursues cutting-edge interdisciplinary research addressing pressing issues facing society today while at the same time developing key technologies for tomorrow. Research focuses on the areas of health, energy and environment, and information technology. The cooperation of the researchers at Jülich is characterized by outstanding expertise and infrastructure in physics, materials science, nanotechnology, and supercomputing. With a staff of about 4,700, Jülich – a member of the Helmholtz Association – is one of the largest research centres in Europe.

Media Contact

More Information:

http://www.fz-juelich.deAll latest news from the category: Information Technology

Here you can find a summary of innovations in the fields of information and data processing and up-to-date developments on IT equipment and hardware.

This area covers topics such as IT services, IT architectures, IT management and telecommunications.

Newest articles

Silicon Carbide Innovation Alliance to drive industrial-scale semiconductor work

Known for its ability to withstand extreme environments and high voltages, silicon carbide (SiC) is a semiconducting material made up of silicon and carbon atoms arranged into crystals that is…

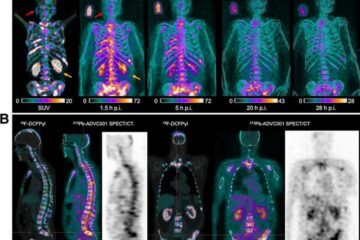

New SPECT/CT technique shows impressive biomarker identification

…offers increased access for prostate cancer patients. A novel SPECT/CT acquisition method can accurately detect radiopharmaceutical biodistribution in a convenient manner for prostate cancer patients, opening the door for more…

How 3D printers can give robots a soft touch

Soft skin coverings and touch sensors have emerged as a promising feature for robots that are both safer and more intuitive for human interaction, but they are expensive and difficult…