3-D Gesture-Based Interaction System Unveiled

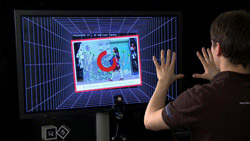

The system detects hands and fingers in real time. Source: Fraunhofer FIT

Scientists at Fraunhofer FIT have developed the next generation of non-contact gesture and finger recognition systems. The novel system detects hand and finger positions in real time and translates them into appropriate interaction commands. It does not require special gloves or markers and can support multiple users.

With touch screens becoming increasingly popular, classic input devices such as the mouse and keyboard are being used less frequently. One example of a breakthrough is the Apple iPhone, which was released in summer 2007. Since then, many other devices featuring touch screens and similar characteristics have been launched successfully. More advanced devices, such as the Microsoft Surface table, whose entire surface can be used for input, even support multiple users simultaneously. However, this form of interaction is specifically designed for two-dimensional surfaces.

Fraunhofer FIT has developed the next generation of multi-touch environments, one that requires no physical contact and is entirely gesture-based. The system detects multiple fingers and hands at the same time and allows users to interact with objects on a display. Users move their hands and fingers in the air, and the system automatically recognizes and interprets the gestures.

Cinemagoers will remember the 2002 science-fiction thriller Minority Report, which starred Tom Cruise. In the film, Cruise's character works in a 3-D software arena and interacts with numerous programs at unimaginable speed. However, that system required special gloves and used only three fingers of each hand.

The FIT prototype takes gesture-based interaction well beyond the Minority Report system. It tracks the user's hand in front of a 3-D camera that works on the time-of-flight principle: for each pixel, the camera measures how long light takes to travel to the tracked object and back. From this travel time, the distance between the camera and the tracked object can be calculated.
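The time-of-flight calculation can be illustrated with a minimal sketch. It assumes the camera reports a round-trip travel time in seconds for each pixel; the function names `tof_distance_m` and `depth_map_m` are hypothetical and are not taken from the FIT system.

```python
# Minimal sketch of the time-of-flight principle (not the FIT implementation).
# Assumption: the sensor delivers a round-trip light travel time per pixel.

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_time_s: float) -> float:
    """Return the camera-to-object distance for one pixel.

    Light travels to the object and back, so the one-way distance
    is half of the total path covered in the measured time.
    """
    if round_trip_time_s < 0:
        raise ValueError("round-trip time must be non-negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


def depth_map_m(round_trip_times_s):
    """Convert a 2-D grid (list of rows) of travel times into distances."""
    return [[tof_distance_m(t) for t in row] for row in round_trip_times_s]


if __name__ == "__main__":
    # A travel time of about 6.67 nanoseconds corresponds to roughly one metre.
    print(tof_distance_m(6.67e-9))
```

The division by two is the essential step: the measured time covers the path to the object and back, so only half of it corresponds to the distance.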

“A special image analysis algorithm was developed which filters out the positions of the hands and fingers. This is achieved in real time through the use of intelligent filtering of the incoming data. The raw data can be viewed as a kind of 3-D mountain landscape, with the peak regions representing the hands or fingers,” said Georg Hackenberg, who developed the system as part of his Master's thesis. In addition, plausibility criteria are applied, based on the size of a hand, finger length and the potential coordinates.
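The "mountain landscape" picture can be illustrated with a simple sketch that looks for local peaks in a depth map and discards implausible ones. This is not the algorithm from the thesis, whose details are not given here. It assumes a depth map stored as a list of rows of distances in metres, treats a pixel as a peak when it is closer to the camera than all of its neighbours, and uses a distance range as a stand-in for the plausibility criteria. The function name `find_peaks` and the thresholds `min_depth_m` and `max_depth_m` are hypothetical.

```python
# Illustrative sketch only: local-peak search in a depth map with a simple
# plausibility filter. The thresholds are assumed values, not FIT's.


def find_peaks(depth_m, min_depth_m=0.2, max_depth_m=1.5):
    """Return (row, col, depth) for pixels nearer than all their neighbours.

    A pixel counts as a peak of the "landscape" if its distance to the
    camera is strictly smaller than that of every adjacent pixel.
    Peaks outside the plausible interaction range are discarded.
    """
    rows, cols = len(depth_m), len(depth_m[0])
    peaks = []
    for r in range(rows):
        for c in range(cols):
            d = depth_m[r][c]
            # Plausibility check (assumed): ignore points too near or too far.
            if not (min_depth_m <= d <= max_depth_m):
                continue
            neighbours = [
                depth_m[rr][cc]
                for rr in range(max(0, r - 1), min(rows, r + 2))
                for cc in range(max(0, c - 1), min(cols, c + 2))
                if (rr, cc) != (r, c)
            ]
            if all(d < n for n in neighbours):
                peaks.append((r, c, d))
    return peaks


if __name__ == "__main__":
    depth = [
        [2.0, 2.0, 2.0, 2.0],
        [2.0, 0.6, 2.0, 2.0],
        [2.0, 2.0, 2.0, 0.1],  # 0.1 m is closer than the assumed minimum
        [2.0, 2.0, 2.0, 2.0],
    ]
    print(find_peaks(depth))
```

A real system would also need criteria such as hand size and finger length, as the article describes. The range check here only shows where such filters would plug in.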

A user study found the system both easy to use and fun. However, work remains to be done on removing elements that confuse the system, for example reflections caused by wristwatches, and palms positioned orthogonal to the camera.

“With Microsoft announcing Project Natal, it is likely that similar techniques will very soon become standard across the gaming industry. This technology also opens up the potential for new solutions in a range of other application domains, such as the exploration of complex simulation data and new forms of learning,” predicts Prof. Dr. Wolfgang Broll of the Fraunhofer Institute for Applied Information Technology FIT.

Media Contact

All latest news from the category: Information Technology

Here you can find a summary of innovations in the fields of information and data processing and up-to-date developments on IT equipment and hardware.

This area covers topics such as IT services, IT architectures, IT management and telecommunications.

Newest articles

Bringing bio-inspired robots to life

Nebraska researcher Eric Markvicka gets NSF CAREER Award to pursue manufacture of novel materials for soft robotics and stretchable electronics. Engineers are increasingly eager to develop robots that mimic the…

Bella moths use poison to attract mates

Scientists are closer to finding out how. Pyrrolizidine alkaloids are as bitter and toxic as they are hard to pronounce. They’re produced by several different types of plants and are…

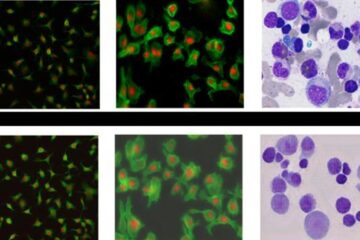

AI tool creates ‘synthetic’ images of cells

…for enhanced microscopy analysis. Observing individual cells through microscopes can reveal a range of important cell biological phenomena that frequently play a role in human diseases, but the process of…