Study Improves Accuracy of Models for Predicting Ozone Levels in Urban Areas

A team of scientists has, for the first time, fully characterized a chemical reaction that is critical to the formation of ground-level ozone in urban areas. The team's results indicate that computer models may be underestimating ozone levels in urban areas during episodes of poor air quality (smoggy days) by as much as five to 10 percent.

Ground-level ozone poses significant health hazards to people, animals and plants; is the primary ingredient of smog; and gives polluted air its characteristic odor. Even small increases in ozone concentrations are known to lead to increases in deaths from respiratory problems. Because of the health hazards caused by ozone exposure, the research team's results may have regulatory implications.

The team's research, which was funded by the National Science Foundation (NSF), NASA and the California Air Resources Board (CARB), appears in the October 29 issue of Science.

Big role of one reaction in predicting ozone in smoggy air

The reaction studied by the researchers plays an important role in controlling the efficiency of a sunlight-driven cycle of reactions that continuously generates ozone. In this reaction, a hydroxyl radical (OH) combines with nitrogen dioxide (NO2), which is produced from emissions generated by vehicles, various industrial processes and some biological processes.

When a hydroxyl radical and nitrogen dioxide collide, these molecules may stick together to form a stable byproduct known as nitric acid (HONO2). Because nitric acid is stable, its formation locks up hydroxyl radicals and nitrogen dioxide and prevents these molecules from contributing to ozone formation; the reaction therefore slows the formation of ozone.

Although scientists have long recognized the importance of the formation of nitric acid, they have, until now, been unable to agree on the speed, or “rate,” at which hydroxyl radicals and nitrogen dioxide combine to form this end product. “This reaction, which slows down ozone production, has been among the greatest sources of uncertainty in predicting ozone levels,” said Mitchio Okumura of the California Institute of Technology, a member of the research team. This uncertainty has affected computer models that simulate air pollution chemistry.

An experimental challenge

Why is there so much uncertainty about the speed or rate of formation of nitric acid? In large part, because instead of combining to form a stable form of nitric acid, a hydroxyl radical may combine with nitrogen dioxide to form a less stable form of nitric acid (HOONO), a snake-like molecule that quickly breaks apart in the atmosphere. This breakdown of the unstable form of nitric acid releases its hydroxyl radical back into the atmosphere, where it may once again become available to form ozone; this breakdown therefore speeds the formation of ozone. Questions about the existence, amount, and speed of formation of the unstable form of nitric acid have, until now, complicated measurements of the speed or rate of the formation of the more stable form of nitric acid.
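The two competing outcomes described above can be written as a simple reaction scheme. This is an illustrative sketch rather than the team's own notation; the symbol M, which stands for any other air molecule that carries away excess energy during the collision, is an assumption not named in the article.

```latex
\begin{align*}
\mathrm{OH} + \mathrm{NO_2} + \mathrm{M} &\longrightarrow \mathrm{HONO_2} + \mathrm{M}
  && \text{(stable nitric acid: removes OH and NO}_2\text{)} \\
\mathrm{OH} + \mathrm{NO_2} + \mathrm{M} &\rightleftharpoons \mathrm{HOONO} + \mathrm{M}
  && \text{(unstable form: breaks apart and returns OH and NO}_2\text{)}
\end{align*}
```

Only the first channel permanently removes hydroxyl radicals and nitrogen dioxide from the ozone-forming cycle, which is why the split between the two channels matters for model predictions.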

But through experiments conducted at the Jet Propulsion Laboratory (JPL) and at the California Institute of Technology using state-of-the-art techniques, Okumura and his colleague, Stanley P. Sander at JPL, led a team of researchers that accurately measured: 1) the overall speed at which hydroxyl radicals and nitrogen dioxide combine, or react, in given atmospheric conditions; 2) the ratio of stable nitric acid to unstable nitric acid that is formed under given atmospheric conditions.
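To see how the two measured quantities fit together, the following minimal sketch combines an overall reaction rate with a stable-to-unstable ratio to estimate how fast hydroxyl radicals are permanently removed. It is not the team's model: the function names, the example numbers, and the simplifying assumption that every unstable molecule breaks apart and returns its hydroxyl radical are all hypothetical and chosen only for illustration.

```python
def unstable_fraction(stable_to_unstable_ratio: float) -> float:
    """Fraction of OH + NO2 reactions that yield the unstable form (HOONO).

    The ratio is stable product divided by unstable product, e.g. 9.0 means
    nine stable molecules form for every unstable one.
    """
    if stable_to_unstable_ratio < 0:
        raise ValueError("ratio must be non-negative")
    return 1.0 / (1.0 + stable_to_unstable_ratio)


def effective_sink_rate(overall_rate: float, stable_to_unstable_ratio: float) -> float:
    """Rate at which OH is permanently locked up as stable nitric acid.

    Assumption (hypothetical simplification): all of the unstable form
    decomposes and gives its OH back, so only the stable channel is a sink.
    """
    return overall_rate * (1.0 - unstable_fraction(stable_to_unstable_ratio))


if __name__ == "__main__":
    # Hypothetical, unitless numbers for illustration only.
    overall = 1.0
    for ratio in (19.0, 9.0, 4.0):
        sink = effective_sink_rate(overall, ratio)
        print(f"stable:unstable = {ratio:>4}:1 -> effective sink rate = {sink:.3f}")
```

Under this simplification, a lower overall rate or a larger share of the unstable form both reduce the effective sink, leaving more hydroxyl radicals available to form ozone.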

In addition, new laser methods enabled researchers to directly detect the presence of the unstable form of nitric acid in microseconds. And with the help of companion calculations performed at Ohio State by Anne McCoy, they could quantify its yield as soon as it was formed.

The research team's experiments show that the stable form of nitric acid forms more slowly than previously believed. These results indicate that there is more OH available in polluted, ground-level air for the formation of ozone than previously believed, and thus probably more ozone in the atmosphere than previously predicted.

More ozone than previously believed

To demonstrate the significance of the new results, modelers on the research team led by Robert Harley and William Carter fed their newly quantified reaction rates and ratios into computer models to predict ground-level ozone concentrations during the summer of 2010 in the Los Angeles Basin. Their results indicate that many current models have been underestimating ground-level ozone in the most polluted areas (where nitrogen dioxide is highest) by about five to 10 percent. The research team concluded that relatively small changes in the rates and proportions of reactions forming unstable and stable nitric acid could lead to small but significant changes in ground-level ozone.

The importance of the study

“The study illustrates the importance of developing new and improved experimental approaches that interrogate atmospheric systems at the molecular level with high accuracy,” said Zeev Rosenzweig, an NSF program officer. “This is imperative to reducing uncertainties in atmospheric model predictions.”

“The determination of a more accurate value of the rate of nitric acid formation from a hydroxyl radical and nitrogen dioxide will be important in future air-quality modeling,” said Anne B. McCoy, a member of the research team. “The research was made possible by bringing together several laboratories with different capabilities and expertise, including my lab at Ohio State, and labs at Caltech, JPL and Berkeley.”

Regulatory implications

The ozone prediction models incorporated into the research team's study are similar to those used by regulatory agencies, such as the Environmental Protection Agency and the California Air Resources Board. Therefore, the team's results may have implications for future predictions of ground-level ozone used by regulatory agencies in developing air quality management plans.

Media Contacts

Lily Whiteman, National Science Foundation (703) 292-8310 lwhitema@nsf.gov

Jon Weiner, California Institute of Technology (626) 395-3226 jrweiner@caltech.edu

Co-Investigators

Mitchio Okumura, California Institute of Technology (626) 395-6557 mo@caltech.edu

The National Science Foundation (NSF) is an independent federal agency that supports fundamental research and education across all fields of science and engineering. In fiscal year (FY) 2010, its budget is about $6.9 billion. NSF funds reach all 50 states through grants to nearly 2,000 universities and institutions. Each year, NSF receives over 45,000 competitive requests for funding, and makes over 11,500 new funding awards. NSF also awards over $400 million in professional and service contracts yearly.

Media Contact

All latest news from the category: Ecology, The Environment and Conservation

This complex theme deals primarily with interactions between organisms and the environmental factors that impact them, but to a greater extent between individual inanimate environmental factors.

innovations-report offers informative reports and articles on topics such as climate protection, landscape conservation, ecological systems, wildlife and nature parks and ecosystem efficiency and balance.

Newest articles

Superradiant atoms could push the boundaries of how precisely time can be measured

Superradiant atoms can help us measure time more precisely than ever. In a new study, researchers from the University of Copenhagen present a new method for measuring the time interval,…

Ion thermoelectric conversion devices for near room temperature

The electrode sheet of the thermoelectric device consists of ionic hydrogel, which is sandwiched between the electrodes to form, and the Prussian blue on the electrode undergoes a redox reaction…

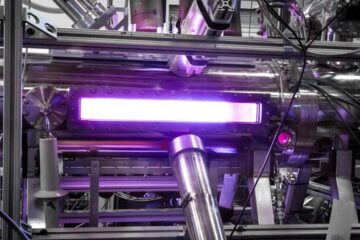

Zap Energy achieves 37-million-degree temperatures in a compact device

New publication reports record electron temperatures for a small-scale, sheared-flow-stabilized Z-pinch fusion device. In the nine decades since humans first produced fusion reactions, only a few fusion technologies have demonstrated…