Scientist models the mysterious travels of greenhouse gas

A University of Michigan researcher is developing a unique way to reconcile crucial data on carbon sources and sinks.

“If we're going to adapt to climate change, we need to be able to predict what the climate will be,” said Anna Michalak, assistant professor in the Department of Civil and Environmental Engineering and the Department of Atmospheric, Oceanic and Space Sciences. “We want to know how the sources and sinks of carbon will evolve in the future, and the only way we can manage climate change is with scientific information.”

Michalak is discussing the work at the symposium “Improving Understanding of Carbon Flux Variability Using Atmospheric Inverse Modeling” Sunday at the American Association for the Advancement of Science annual meeting here. She co-organized the session, “The Carbon Budget: Can We Reconcile Flux Estimates?” with Joyce Penner, a professor in the Department of Atmospheric, Oceanic and Space Sciences.

For some 50 years, scientists have measured the amount of carbon dioxide in the air on a large scale, at an increasing number of locations sprinkled across the globe, and by sampling very small areas. Together with inventories of fossil fuel use, that's given good data about how much carbon is being pumped into the atmosphere—currently approximately 8 billion tons a year.

It's also known that half of that stays in the atmosphere. The remainder settles in the oceans or the earth, or is gobbled up by plants during photosynthesis.

But then the data gets harder to come by and scientists have had to make some assumptions. Flux towers cover only a few places on Earth, and it's too cumbersome to collect data on small areas. Even a powerful new tool Michalak will be using (NASA's Orbiting Carbon Observatory, or OCO, a satellite designed to monitor atmospheric carbon) does not paint a perfect picture. She compares the thin data strips it harvests with wrapping a basketball with floss.

The problem: Michalak said the data takes such a big-picture approach that it is difficult to isolate carbon being emitted or taken up in specific regions, or even countries. Scientists are left with a picture of carbon sources that isn't nimble enough to capture the variability, or to support confident predictions of the future.

Michalak has developed a robust method, called “geostatistical inverse modeling,” that uses available data to understand this variability. It breaks the globe into small regions and examines how much CO2 must have been emitted in each region to achieve the concentrations measured at atmospheric sample points. It also allows her and her collaborators to use information from other existing satellites that measure the Earth's surface to supplement the information from the atmospheric monitoring network. Eventually, the method aims to trace the carbon levels at each sample point to a particular source or sink on the surface.
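
The general idea of working backward from measured concentrations to regional emissions can be illustrated with a toy calculation. The sketch below is not Michalak's geostatistical method. It swaps in a much simpler technique, regularized least squares, and every number in it is made up for illustration. It assumes a hypothetical sensitivity matrix `H` that says how strongly each region's flux affects each sample point, builds synthetic measurements from invented "true" fluxes, and then recovers those fluxes from the measurements alone.

```python
import numpy as np

def estimate_fluxes(H, y, reg=1e-8):
    """Estimate regional fluxes s from measurements y, assuming y = H @ s.

    Minimizes ||H s - y||^2 + reg * ||s||^2 (ridge regression).
    This is a simplified stand-in, not geostatistical inverse modeling.
    """
    n_regions = H.shape[1]
    A = H.T @ H + reg * np.eye(n_regions)
    return np.linalg.solve(A, H.T @ y)

# Hypothetical setup: 4 regions, 12 atmospheric sample points.
rng = np.random.default_rng(0)
n_obs, n_regions = 12, 4

# Made-up sensitivities of each sample point to each region's flux.
# In practice these would come from an atmospheric transport model.
H = rng.uniform(0.0, 1.0, size=(n_obs, n_regions))

# Invented "true" fluxes; a negative value stands for a sink.
true_flux = np.array([2.0, -1.0, 0.5, 3.0])

# Synthetic, noise-free measurements at the sample points.
y = H @ true_flux

estimated_flux = estimate_fluxes(H, y)
print(np.round(estimated_flux, 3))
```

With noise-free synthetic data and more sample points than regions, the recovered fluxes match the invented ones closely. Real measurements are sparse and noisy, which is why additional information, such as the surface data from other satellites mentioned above, matters.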

The technique, Michalak says, is like figuring out where the cream was originally poured in a cup of half-stirred coffee.

“Winds and weather patterns mix CO2 in the atmosphere just like stirring mixes cream in a cup of coffee,” she said. “As soon as you start stirring, you lose some information about where and when the cream was originally added to the cup. With careful measurements and models, however, much of this information can be recovered.”

“One of our big questions is how carbon sources and sinks evolve,” Michalak said. “This is all with an eye on prediction and management.”

More Information:

http://www.umich.eduAll latest news from the category: Ecology, The Environment and Conservation

This complex theme deals primarily with interactions between organisms and the environmental factors that impact them, but to a greater extent between individual inanimate environmental factors.

innovations-report offers informative reports and articles on topics such as climate protection, landscape conservation, ecological systems, wildlife and nature parks and ecosystem efficiency and balance.

Newest articles

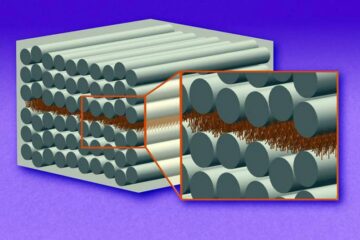

“Nanostitches” enable lighter and tougher composite materials

In research that may lead to next-generation airplanes and spacecraft, MIT engineers used carbon nanotubes to prevent cracking in multilayered composites. To save on fuel and reduce aircraft emissions, engineers…

Trash to treasure

Researchers turn metal waste into catalyst for hydrogen. Scientists have found a way to transform metal waste into a highly efficient catalyst to make hydrogen from water, a discovery that…

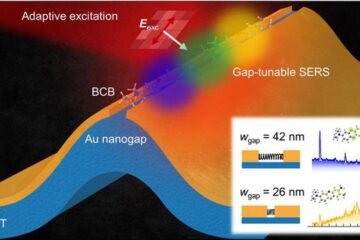

Real-time detection of infectious disease viruses

… by searching for molecular fingerprinting. A research team consisting of Professor Kyoung-Duck Park and Taeyoung Moon and Huitae Joo, PhD candidates, from the Department of Physics at Pohang University…