Better regional monitoring of CO2 needed as global levels continue rising

Monitoring Earth's rising greenhouse gas levels will require a global data collection network 10 times larger than the one currently in place in order to quantify regional progress in emission reductions, according to a new research commentary by University of Colorado and NOAA researchers appearing in the April 25 issue of Science.

The authors, CU-Boulder Research Associate Melinda Marquis and National Oceanic and Atmospheric Administration scientist Pieter Tans, said that with atmospheric carbon dioxide concentrations now at 385 parts per million and rising, the need for improved regional greenhouse gas measurements is critical. While the current observation network can measure CO2 fluxes on a continental scale, charting emissions in regions where significant mitigation efforts are underway, such as California, New England and European countries, requires a denser network, they said.

“The question is whether scientists in the United States and around the world have what they need to monitor regional fluxes in atmospheric carbon dioxide,” said Marquis, a scientist at the Cooperative Institute for Research in Environmental Sciences, a joint institute of CU-Boulder and NOAA. “Right now, they don't.”

While CO2 levels are climbing by 2 parts per million annually, a rate expected to increase as China and India continue to industrialize, effective regional CO2 monitoring strategies are virtually nonexistent, she said. For example, scientists' ability to distinguish between distant and nearby carbon sources and “sinks,” or storage areas, is limited by the accuracy of atmospheric transport models that reflect details of terrain, winds and the mixing of gases near observation sites.

“We are in uncharted territory as far as knowing how safe these high CO2 levels are for the Earth,” she said. “Instead of tackling a very complex challenge with the equivalent of Magellan's maps, we need to use the equivalent of Google Earth.”

Marquis and Tans propose increasing the number of global carbon measurement sites from about 100 to 1,000, which would decrease the uncertainty in computer models and help scientists better quantify changes. “With existing tools we could gather large amounts of additional CO2 data for a relatively small investment,” said Marquis. “The next step is to muster the political will to fund these efforts.”

Scientists currently sample CO2 using air flasks, in-situ measurements from transmitter towers up to 2,000 feet high, and aircraft sensors. The authors proposed putting additional CO2 sensors on existing and new transmitter towers that can gather large volumes of climate data. While Europe and the United States have small networks of tall transmitter towers equipped with CO2 instruments, such towers are rare on the rest of the planet, Marquis said.

Satellites queued for launch in the next few years to help monitor atmospheric CO2 levels include the Orbiting Carbon Observatory and the Greenhouse Gases Observing Satellite, said Marquis. The satellites will augment ground-based and aircraft measurements charting terrestrial photosynthesis, carbon sinks, CO2 respiration sources, ocean-atmosphere gas exchanges and CO2 emissions from wildfires.

National emissions inventories, mandated for each country by the U.N. Framework Convention on Climate Change in 1994, rely primarily on economic statistics to estimate greenhouse gases entering and leaving the atmosphere, said the authors. Such inventories are “reasonably accurate” for estimating atmospheric CO2 from burning fossil fuels in developed countries.

But they are less accurate for other sources of CO2, like deforestation, and for emissions of other greenhouse gases, like methane, which comes from rice farming, cattle ranching and natural wetlands, said the authors.

There is a growing need to measure the effectiveness of particular mitigation efforts by states or regions involved in pollution caps, auto emission reduction campaigns and intensive tree-planting efforts, Marquis said. For example, the Western Climate Initiative, a consortium of seven western U.S. states and British Columbia, set a goal last year of cutting greenhouse gas emissions 15 percent by 2020.

Precise regional CO2 measurements also could help chart the accuracy of carbon trading systems involving “credits” and “offsets” now in use in various countries around the world, said Marquis. In such systems, companies exceeding CO2 emission caps can buy carbon credits from companies under the caps, and groups or companies can buy voluntary carbon offsets to compensate for personal lifestyle choices, such as airline travel.

“Independent verification through regional CO2 monitoring could help determine whether carbon credits or offsets being bought or sold are of value,” Marquis said.

Media Contact

All latest news from the category: Ecology, The Environment and Conservation

This complex theme deals primarily with interactions between organisms and the environmental factors that impact them, but to a greater extent between individual inanimate environmental factors.

innovations-report offers informative reports and articles on topics such as climate protection, landscape conservation, ecological systems, wildlife and nature parks and ecosystem efficiency and balance.

Newest articles

Properties of new materials for microchips

… can now be measured well. Reseachers of Delft University of Technology demonstrated measuring performance properties of ultrathin silicon membranes. Making ever smaller and more powerful chips requires new ultrathin…

Floating solar’s potential

… to support sustainable development by addressing climate, water, and energy goals holistically. A new study published this week in Nature Energy raises the potential for floating solar photovoltaics (FPV)…

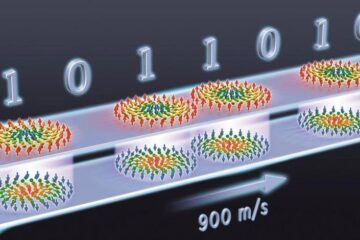

Skyrmions move at record speeds

… a step towards the computing of the future. An international research team led by scientists from the CNRS1 has discovered that the magnetic nanobubbles2 known as skyrmions can be…