Terahertz imaging on the cheap

Terahertz imaging, which is already familiar from airport security checkpoints, has a number of other promising applications, from explosives detection to collision avoidance in cars. Like sonar or radar, terahertz imaging produces an image by comparing measurements across an array of sensors. Those arrays have to be very dense, since the distance between sensors is proportional to wavelength, and terahertz wavelengths are on the order of a millimeter or less.

In the latest issue of IEEE Transactions on Antennas and Propagation, researchers in MIT's Research Laboratory for Electronics describe a new technique that could reduce the number of sensors required for terahertz or millimeter-wave imaging by a factor of 10, or even 100, making such imaging systems more practical. The technique could also have implications for the design of new, high-resolution radar and sonar systems.

In a digital camera, the lens focuses the incoming light so that light reflected by a small patch of the visual scene strikes a correspondingly small patch of the sensor array. In lower-frequency imaging systems, by contrast, an incoming wave — whether electromagnetic or, in the case of sonar, acoustic — strikes all of the sensors in the array. The system determines the origin and intensity of the wave by comparing its phase — the alignment of its troughs and crests — when it arrives at each of the sensors.
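As a rough illustration of the phase comparison described above, the following Python sketch simulates a single wave arriving at a line of sensors and scans candidate directions for the one whose expected phases best match the measurements. The function names, the 16-sensor array, and the single-source assumption are illustrative choices, not details taken from the paper.

```python
import cmath
import math

def measure(positions, theta_deg, amplitude=1.0):
    """Complex sample at each sensor for a plane wave arriving from theta_deg.

    positions are in wavelengths along a line; the angle is measured
    from the direction perpendicular to that line.
    """
    s = math.sin(math.radians(theta_deg))
    return [amplitude * cmath.exp(2j * math.pi * x * s) for x in positions]

def beam_power(positions, samples, theta_deg):
    """Undo the phases expected for theta_deg, then add the samples up.

    The result is close to 1 when the candidate direction matches the
    true one, because all the corrected samples line up in phase.
    """
    s = math.sin(math.radians(theta_deg))
    total = sum(y * cmath.exp(-2j * math.pi * x * s)
                for x, y in zip(positions, samples))
    return abs(total) ** 2 / len(positions) ** 2

def estimate_angle(positions, samples, step_deg=0.5):
    """Scan candidate angles and return the one with the highest power."""
    count = int(180 / step_deg) + 1
    candidates = [-90 + i * step_deg for i in range(count)]
    return max(candidates, key=lambda t: beam_power(positions, samples, t))

# Hypothetical setup: 16 sensors spaced half a wavelength apart.
positions = [0.5 * n for n in range(16)]
samples = measure(positions, 20.0)
estimated = estimate_angle(positions, samples)
print(estimated)
```

A real scene contains many reflectors at once, so a practical system solves for the whole scene jointly instead of picking a single peak; the scan here only shows how phase differences encode direction.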

As long as the distance between sensors is no more than half the wavelength of the incoming wave, that calculation is fairly straightforward, a matter of inverting the sensors' measurements. But if the sensors are spaced farther than half a wavelength apart, the inversion will yield more than one possible solution. Those solutions will be spaced at regular angles around the sensor array, a phenomenon known as “spatial aliasing.”
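The ambiguity can be made concrete with a little arithmetic. For a uniformly spaced line of sensors, two directions produce identical phase measurements whenever the sines of their angles differ by a whole multiple of the wavelength divided by the spacing. The Python sketch below lists those indistinguishable directions; the function name and the choice to express spacing in wavelengths are assumptions made for illustration.

```python
import math

def alias_angles(theta_deg, spacing_in_wavelengths):
    """Angles (degrees from broadside) that a uniform line array
    cannot tell apart from theta_deg, including theta_deg itself."""
    s = math.sin(math.radians(theta_deg))
    d = spacing_in_wavelengths
    # The phase step between neighbors is 2*pi*d*sin(theta). Adding any
    # whole number of cycles k leaves every measurement unchanged, so
    # sin(theta_alias) = sin(theta) + k / d for each integer k in range.
    k_min = math.ceil((-1.0 - s) * d)
    k_max = math.floor((1.0 - s) * d)
    angles = []
    for k in range(k_min, k_max + 1):
        s_alias = s + k / d
        if -1.0 <= s_alias <= 1.0:
            angles.append(round(math.degrees(math.asin(s_alias)), 3))
    return angles

# Half-wavelength spacing: only the true direction is returned.
unambiguous = alias_angles(10.0, 0.5)
# Two-wavelength spacing: several directions fit the same data.
ambiguous = alias_angles(10.0, 2.0)
print(unambiguous)
print(ambiguous)
```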

Narrowing the field

In most applications of lower-frequency imaging, however, any given circumference around the detector is usually sparsely populated. That's the phenomenon that the new system exploits.

“Think about a range around you, like five feet,” says Gregory Wornell, the Sumitomo Electric Industries Professor in Engineering in MIT's Department of Electrical Engineering and Computer Science and a co-author on the new paper. “There's actually not that much at five feet around you. Or at 10 feet. Different parts of the scene are occupied at those different ranges, but at any given range, it's pretty sparse. Roughly speaking, the theory goes like this: If, say, 10 percent of the scene at a given range is occupied with objects, then you need only 10 percent of the full array to still be able to achieve full resolution.”

The trick is to determine which 10 percent of the array to keep. Keeping every tenth sensor won't work: It's the regularity of the distances between sensors that leads to aliasing. Arbitrarily varying the distances between sensors would solve that problem, but it would also make inverting the sensors' measurements (calculating the wave's source and intensity) prohibitively complicated.
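The difference between regular and irregular thinning can be checked numerically. The Python sketch below starts from a hypothetical 100-sensor array at half-wavelength spacing, keeps 10 sensors in two different ways, and looks for the strongest "false" direction, meaning one away from the true source that still matches the measurements well. The irregular index list is an arbitrary hand-picked example, not a pattern from the paper.

```python
import cmath
import math

def response(positions, s_true, s_test):
    """Normalized match between a source at s_true and a candidate
    direction s_test (both given as sine of the arrival angle).
    A value of 1 means the two are indistinguishable."""
    total = sum(cmath.exp(2j * math.pi * x * (s_true - s_test))
                for x in positions)
    return abs(total) ** 2 / len(positions) ** 2

def worst_false_peak(positions, s_true=0.0, grid=2001, guard=0.02):
    """Largest response over all directions at least `guard` away
    from the true one."""
    worst = 0.0
    for i in range(grid):
        s = -1.0 + 2.0 * i / (grid - 1)
        if abs(s - s_true) > guard:
            worst = max(worst, response(positions, s_true, s))
    return worst

# Full array: 100 sensors, half a wavelength apart (positions in wavelengths).
full = [0.5 * n for n in range(100)]

# Option 1: keep every tenth sensor (regular, 5 wavelengths apart).
every_tenth = full[::10]

# Option 2: keep 10 sensors at irregular positions (arbitrary example).
irregular = [full[i] for i in (0, 3, 11, 24, 38, 47, 61, 72, 86, 99)]

uniform_worst = worst_false_peak(every_tenth)
irregular_worst = worst_false_peak(irregular)
print(uniform_worst, irregular_worst)
```

With every tenth sensor, some false directions match the data as well as the true one does. With the irregular subset, no false direction matches perfectly, although the brute-force scan used here says nothing about how costly the full scene reconstruction would be.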

Regular irregularity

So Wornell and his co-authors — James Krieger, a former student of Wornell's who is now at MIT's Lincoln Laboratory, and Yuval Kochman, a former postdoc who is now an assistant professor at the Hebrew University of Jerusalem — instead prescribe a detector along which the sensors are distributed in pairs. The regular spacing between pairs of sensors ensures that the scene reconstruction can be calculated efficiently, but the distance from each sensor to the next remains irregular.
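One way to picture such a layout is as a short pattern of sensor slots repeated at a fixed period, which is roughly what the "multi-coset" in the paper's title suggests. The Python sketch below generates positions that way. The period of 10 slots, the pattern (0, 3), and the function name are hypothetical values chosen for illustration; the paper's actual patterns may differ.

```python
def multi_coset_positions(period, pattern, num_periods, spacing=0.5):
    """Sensor positions (in wavelengths) for a pattern repeated every
    `period` grid slots, with grid slots `spacing` wavelengths apart.

    `pattern` lists which slots inside each period hold a sensor.
    """
    slots = sorted(pattern)
    return [spacing * (block * period + p)
            for block in range(num_periods)
            for p in slots]

# Hypothetical example: two sensors per period of ten half-wavelength slots.
positions = multi_coset_positions(period=10, pattern=(0, 3), num_periods=5)

# Distance from each sensor to the next one along the array.
gaps = [b - a for a, b in zip(positions, positions[1:])]
print(positions)
print(gaps)
```

Each pair sits exactly one period after the previous pair, which is the regularity that keeps the reconstruction tractable, while the gap from one sensor to the next alternates between two different values.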

The researchers also developed an algorithm that determines the optimal pattern for the sensors' distribution. In essence, the algorithm maximizes the number of different distances between arbitrary pairs of sensors.
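The stated objective, maximizing the number of different distances between pairs of sensors, can be illustrated with a brute-force search over small patterns. The Python sketch below is not the researchers' algorithm; it simply tries every pattern within one period and scores each by the count of distinct pairwise distances across a few repeated periods. The function names and parameters are hypothetical.

```python
from itertools import combinations

def distinct_distances(indices):
    """Number of different distances between any two sensor slots."""
    return len({abs(a - b) for a, b in combinations(indices, 2)})

def best_pattern(period, sensors_per_period, num_periods=3):
    """Exhaustively search patterns that include slot 0 and return the
    first one with the most distinct pairwise distances, plus its score.

    Only practical for small periods, since the number of candidate
    patterns grows combinatorially.
    """
    best, best_score = None, -1
    for rest in combinations(range(1, period), sensors_per_period - 1):
        pattern = (0,) + rest
        layout = [block * period + p
                  for block in range(num_periods)
                  for p in pattern]
        score = distinct_distances(layout)
        if score > best_score:
            best, best_score = pattern, score
    return best, best_score

pattern, score = best_pattern(period=10, sensors_per_period=2)
print(pattern, score)

# For comparison: placing the pair half a period apart makes the whole
# array uniformly spaced, and the score drops.
uniform_layout = [block * 10 + p for block in range(3) for p in (0, 5)]
print(distinct_distances(uniform_layout))
```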

With his new colleagues at Lincoln Lab, Krieger has performed experiments at radar frequencies using a one-dimensional array of sensors deployed in a parking lot, which verified the predictions of the theory. Moreover, Wornell's description of the sparsity assumptions of the theory — 10 percent occupation at a given distance means one-tenth the sensors — applies to one-dimensional arrays. Many applications — such as submarines' sonar systems — instead use two-dimensional arrays, and in that case, the savings compound: One-tenth the sensors in each of two dimensions translates to one-hundredth the sensors in the complete array.

Written by Larry Hardesty, MIT News Office

Additional background

Paper: “Multi-coset sparse imaging arrays”: http://ieeexplore.ieee.org/stamp/stamp.jsp?arnumber=06710127

Gregory Wornell: http://allegro.mit.edu/~gww/

Archive: “The blind codemaker”: http://newsoffice.mit.edu/2012/error-correcting-codes-0210

Media Contact

More Information:

http://www.mit.eduAll latest news from the category: Power and Electrical Engineering

This topic covers issues related to energy generation, conversion, transportation and consumption and how the industry is addressing the challenge of energy efficiency in general.

innovations-report provides in-depth and informative reports and articles on subjects ranging from wind energy, fuel cell technology, solar energy, geothermal energy, petroleum, gas, nuclear engineering, alternative energy and energy efficiency to fusion, hydrogen and superconductor technologies.

Newest articles

Properties of new materials for microchips

… can now be measured well. Reseachers of Delft University of Technology demonstrated measuring performance properties of ultrathin silicon membranes. Making ever smaller and more powerful chips requires new ultrathin…

Floating solar’s potential

… to support sustainable development by addressing climate, water, and energy goals holistically. A new study published this week in Nature Energy raises the potential for floating solar photovoltaics (FPV)…

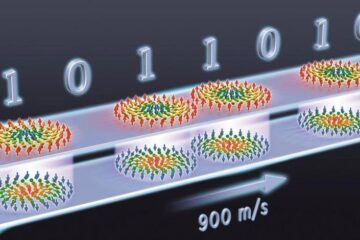

Skyrmions move at record speeds

… a step towards the computing of the future. An international research team led by scientists from the CNRS1 has discovered that the magnetic nanobubbles2 known as skyrmions can be…