Robot can be programmed by casually talking to it

Before you can tell your household robot “Make me a bowl of ramen noodles,” you'll have to teach it how to do that. Since we're not all computer programmers, we'd prefer to give those instructions in English, just as we'd lay out a task for a child.

But human language can be ambiguous, and some instructors forget to mention important details. Suppose you told your household robot how to prepare ramen noodles, but forgot to mention heating the water or tell it where the stove is.

In his Robot Learning Lab, Ashutosh Saxena, assistant professor of computer science at Cornell University, is teaching robots to understand instructions in natural language from various speakers, account for missing information, and adapt to the environment at hand.

Saxena and graduate students Dipendra K. Misra and Jaeyong Sung will describe their methods at the Robotics: Science and Systems conference at the University of California, Berkeley, July 12-16.

Video and abstract available at http://tellmedave.cs.cornell.edu

The robot may have a built-in programming language with commands like find (pan); grasp (pan); carry (pan, water tap); fill (pan, water); carry (pan, stove) and so on. Saxena's software translates human sentences such as “Fill a pan with water, put it on the stove, heat the water. When it's boiling, add the noodles” into robot language. Notice that you didn't say, “Turn on the stove.” The robot has to be smart enough to fill in that missing step.
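To make the idea of a filled-in step concrete, here is a minimal Python sketch. It is not the Cornell software, which learns these associations from data; the hand-written precondition table, the `turn_on` command and the function name `fill_missing_steps` are all assumptions made for illustration. Only the command names find, grasp, carry and fill come from the example above.

```python
# Hypothetical precondition table: an illustrative stand-in for what the
# real system learns. "turn_on" is an assumed command name.
PRECONDITIONS = {
    "heat": [("turn_on", "stove")],
}


def fill_missing_steps(plan):
    """Return a copy of plan with any unmet precondition steps inserted."""
    completed = []
    for step in plan:
        action = step[0]
        for required in PRECONDITIONS.get(action, []):
            # Only add the step if nobody has said or inserted it already.
            if required not in completed:
                completed.append(required)
        completed.append(step)
    return completed


# The spoken instructions, already translated into robot-style commands.
spoken_plan = [
    ("find", "pan"),
    ("grasp", "pan"),
    ("carry", "pan", "water tap"),
    ("fill", "pan", "water"),
    ("carry", "pan", "stove"),
    ("heat", "water"),
]

full_plan = fill_missing_steps(spoken_plan)
for step in full_plan:
    print(step)
```

In this sketch the unspoken step lands just before the heating command, and running the function on an already complete plan leaves it unchanged.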

Saxena's robot, equipped with a 3-D camera, scans its environment and identifies the objects in it, using computer vision software previously developed in Saxena's lab. The robot has been trained to associate objects with their capabilities: A pan can be poured into or poured from; stoves can have other objects set on them, and can heat things.

So the robot can identify the pan, locate the water faucet and stove and incorporate that information into its procedure. If you tell it to “heat water” it can use the stove or the microwave, depending on which is available. And it can carry out the same actions tomorrow if you've moved the pan, or even moved the robot to a different kitchen.
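The link between objects and what they can do can be pictured as a simple lookup. The Python sketch below is an assumption-laden illustration, not the lab's vision or planning code: the table `AFFORDANCES`, the capability labels and the function names are hypothetical, and the real robot learns these associations rather than reading them from a hand-written dictionary.

```python
# Hypothetical capability table based on the examples in the article:
# pans can be poured into or from, stoves support objects and heat things,
# and a microwave can also heat things.
AFFORDANCES = {
    "pan": {"pour_into", "pour_from"},
    "stove": {"support", "heat"},
    "microwave": {"heat"},
}


def objects_that_can(capability, visible_objects):
    """List the visible objects that offer the requested capability."""
    return [
        obj for obj in visible_objects
        if capability in AFFORDANCES.get(obj, set())
    ]


def choose_heater(visible_objects):
    """Pick the first visible object that can heat, or None if there is none."""
    candidates = objects_that_can("heat", visible_objects)
    return candidates[0] if candidates else None


# The same instruction resolves differently in different kitchens.
print(choose_heater(["pan", "stove"]))
print(choose_heater(["pan", "microwave"]))
```

Because the instruction names a capability rather than a specific appliance, the same “heat water” command still works when the kitchen changes.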

Other workers have attacked these problems by giving a robot a set of templates for common actions and chewing up sentences one word at a time. Saxena's research group uses techniques computer scientists call “machine learning” to train the robot's computer brain to associate entire commands with flexibly defined actions. The computer is fed animated video simulations of the action – created by humans in a process similar to playing a video game – accompanied by recorded voice commands from several different speakers.

The computer stores the combination of many similar commands as a flexible pattern that can match many variations. When it hears “Take the pot to the stove,” “Carry the pot to the stove,” “Put the pot on the stove,” “Go to the stove and heat the pot” and so on, it calculates the probability of a match with what it has heard before and, if the probability is high enough, declares a match. A similarly fuzzy version of the video simulation supplies a plan for the action: Wherever the sink and the stove are, the path can be matched to the recorded action of carrying the pot of water from one to the other.
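The match-and-threshold idea can be sketched in a few lines of Python. This is a deliberately crude stand-in: it scores word overlap (Jaccard similarity) instead of the learned probability the researchers describe, and the stored examples, the action labels, the 0.5 threshold and the function names are all assumptions chosen for illustration.

```python
# Hypothetical library of stored commands, grouped by the action they map to.
KNOWN_COMMANDS = {
    "carry(pot, stove)": [
        "Take the pot to the stove",
        "Carry the pot to the stove",
        "Put the pot on the stove",
    ],
    "fill(pan, water)": [
        "Fill a pan with water",
    ],
}


def tokens(sentence):
    """Lowercase a sentence and split it into a set of words."""
    cleaned = sentence.lower().replace(",", " ").replace(".", " ")
    return set(cleaned.split())


def match_score(heard, examples):
    """Best word-overlap score (0 to 1) between heard and any stored example."""
    heard_tokens = tokens(heard)
    best = 0.0
    for example in examples:
        example_tokens = tokens(example)
        union = heard_tokens | example_tokens
        if union:
            overlap = len(heard_tokens & example_tokens) / len(union)
            best = max(best, overlap)
    return best


def best_match(heard, threshold=0.5):
    """Return the best-scoring action, or None if nothing clears the threshold."""
    scored = [
        (match_score(heard, examples), action)
        for action, examples in KNOWN_COMMANDS.items()
    ]
    score, action = max(scored)
    return action if score >= threshold else None


print(best_match("Move the pot to the stove"))
print(best_match("Add ice cream of your choice"))
```

A new phrasing that shares most of its words with stored examples clears the threshold, while an unrelated sentence returns no match instead of a wrong guess.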

Of course the robot still doesn't get it right all the time. To test, the researchers gave instructions for preparing ramen noodles and for making affogato – an Italian dessert combining coffee and ice cream: “Take some coffee in a cup. Add ice cream of your choice. Finally, add raspberry syrup to the mixture.”

The robot performed correctly up to 64 percent of the time even when the commands were varied or the environment was changed, and it was able to fill in missing steps. That was three to four times better than previous methods, the researchers reported, but “there is still room for improvement.”

You can teach a simulated robot to perform a kitchen task at the “Tell Me Dave” website, and your input there will become part of a crowdsourced library of instructions for the Cornell robots. Aditya Jami, visiting researcher at Cornell, is helping Tell Me Dave to scale the library to millions of examples. “With crowdsourcing at such a scale, robots will learn at a much faster rate,” Saxena said.


Media Contact

All latest news from the category: Power and Electrical Engineering

This topic covers issues related to energy generation, conversion, transportation and consumption and how the industry is addressing the challenge of energy efficiency in general.

innovations-report provides in-depth and informative reports and articles on subjects ranging from wind energy, fuel cell technology, solar energy, geothermal energy, petroleum, gas, nuclear engineering, alternative energy and energy efficiency to fusion, hydrogen and superconductor technologies.

Newest articles

Superradiant atoms could push the boundaries of how precisely time can be measured

Superradiant atoms can help us measure time more precisely than ever. In a new study, researchers from the University of Copenhagen present a new method for measuring the time interval,…

Ion thermoelectric conversion devices for near room temperature

The electrode sheet of the thermoelectric device consists of ionic hydrogel, which is sandwiched between the electrodes to form, and the Prussian blue on the electrode undergoes a redox reaction…

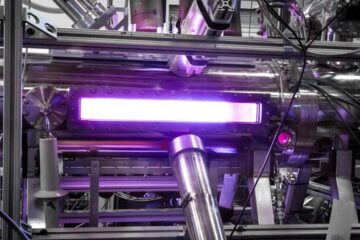

Zap Energy achieves 37-million-degree temperatures in a compact device

New publication reports record electron temperatures for a small-scale, sheared-flow-stabilized Z-pinch fusion device. In the nine decades since humans first produced fusion reactions, only a few fusion technologies have demonstrated…