Mixing Biology And Electronics To Create Robotic Vision

Robots are a long way from being as sophisticated as the movies would have you believe.

Sure, they can crush humans at chess. But they can’t beat us at soccer (half the time they can’t even recognize the soccer ball) or defeat us in single combat and walk away from the encounter.

"We don’t have robots that can physically compete with humans in any way," says Charles Higgins, assistant professor of Electrical and Computer Engineering (ECE) at the University of Arizona.

However, Higgins is working to change that. He hopes to make robots more physical by giving them sight and an ability to react to what they see.

“Right now, robots in general are just pitiful in terms of visual interaction,” Higgins said.

True, a few of today’s robots can see in some sense, but they aren’t mobile. These vision systems are connected to large computers, which precludes their use in small, mobile robots. Outside of these few vision-only systems, today’s robots see very little.

“Wouldn’t it be nice to have a robot that could actually see you and interact with you visually?” Higgins asks. “You could wave at it or smile at it. You could make a face gesture. It would be wonderful to interact with robots in the same way that we interact with humans.”

If Higgins has his way, at least some of the first steps toward that goal will be achieved in the next 10 to 20 years through neuromorphic engineering, a discipline that combines biology and electronics.

Higgins and his students are developing an airborne visual navigation system by creating electronic clones of insect vision processing systems in analog integrated circuits. The circuits create insect-like self-motion estimation, obstacle avoidance, target tracking and other visual behaviors on two model blimps.

Higgins is well qualified to combine the radically different disciplines of biology and electronics. In addition to his faculty position in ECE, he’s also on the faculty in UA’s neuroscience program, which is recognized as one of the world’s leaders in studying insect vision.
He conducts research in the neuroscience labs to find out how insect vision works and then transfers those results to the ECE lab, where he creates electronic vision circuits based on the insect model.

These circuits don’t use standard microprocessors. Instead, they’re based on what’s called “parallel processing”: a bunch of slower, simpler analog processors working simultaneously on a problem. In traditional digital computers, problems are solved in serial fashion, where a single fast digital processor flashes through a series of steps to solve the problem sequentially.

In fact, today’s digital computers, as good as they are at playing chess, working spreadsheets and solving math problems, can’t tackle the much more complex activities that we, as humans, take almost for granted.

The human eye, for instance, processes information at the equivalent of about 100 frames per second (fps), much faster than a movie camera, which trundles along at 24 fps, or a video camera that runs at 30 fps. Each frame is processed for luminance, color, and motion, and the resulting images aren’t blurred or smeared.

Doing that with a conventional computer is extremely complicated, requiring expensive processors and huge gulps of power, Higgins says. “It requires a lot of data moving at a very high rate of speed and in a very small instant of time.”

It’s a little like sending a digital computer out to play baseball. It has to continually rush between all nine positions on the field sequentially, catching the ball at shortstop, for instance, and then rushing to first to catch the throw it made from the shortstop position. Parallel processing, which mimics the way biological systems solve problems, would play baseball by stationing a slower processor at every position.

Higgins hopes to see robotic vision develop in the same way that robotic speech processing has during the past 30 years. "Think of all the toys today that have some sort of speech interaction," he said.
"In the ’70s and ’80s that would have required a bunch of expensive hardware. But in the ’90s toy manufacturers started using a microchip set that allowed them to do that very cheaply. Now some toy sets have excellent, very clear voices. I’m hoping to do the same thing with vision."

Higgins wants to develop a microchip-based vision system that could follow a moving object like a soccer ball without getting confused by similarly shaped or colored objects, or a chip that could tell different objects apart: a sidewalk crack it could roll over, for instance, from a ditch that it couldn’t.

"I’m not talking about a vision system that will do everything our vision system will do, or even everything an insect’s visual system will do," he said. "I’m looking at a lot less: a very specific vision subsystem that accomplishes a specific task."

Building vision systems for toys might sound a bit frivolous, particularly coming from high-powered university laboratories, but toys account for a huge amount of money in the U.S. economy. And toys have much in common with satellites, missiles, automotive systems and home electronics.

“Toys are big enough that if you make a popular vision processor and you’re able to sell it to Hasbro to put on their toys and it’s a successful product, you could be a millionaire quite easily,” Higgins said. “In fact, a millionaire wouldn’t even cover it.”

The key to all this is packing a huge amount of highly efficient processing in a small space, which is the goal of Higgins’ research. Once that’s done, the possibilities are nearly endless.

“I’d like to give engineers a vision chip set like this and see what they would do with it,” Higgins said. “My bet is that they would use it for things we could never imagine now. And I know it would be a really big thing.”

The first chip set might cost $30,000 to produce. Then the price might drop quickly to $200 a set and then down to $20 a set, Higgins said.
“When you get that vision chip down to $20, people will be buying millions of them for their products,” he said. “I’d like to see that.”
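
For readers who want to put rough numbers on the serial-versus-parallel contrast described earlier, the following is a minimal back-of-the-envelope sketch in Python. It uses the frame rates quoted in the article (24, 30 and about 100 fps). The 64 x 64 sensor size, the one-operation-per-pixel assumption and all function names are hypothetical and chosen only for illustration. They do not describe Higgins' circuits.

```python
# Illustrative arithmetic only. The sensor size is a made-up assumption,
# not a figure from the article or from Higgins' lab.
HYPOTHETICAL_PIXELS = 64 * 64  # assumed sensor resolution


def frame_budget_ms(fps: float) -> float:
    """Time available to process one frame, in milliseconds."""
    return 1000.0 / fps


def serial_ops_per_second(pixels: int, fps: float) -> float:
    """Per-pixel operations a single serial processor must do each second,
    assuming one operation per pixel per frame."""
    return pixels * fps


def parallel_ops_per_element(fps: float) -> float:
    """Operations per second for each element when every pixel has its own
    simple processor (again assuming one operation per pixel per frame)."""
    return fps


# Frame rates quoted in the article.
rates = [("movie camera", 24), ("video camera", 30), ("human eye (approx.)", 100)]

for label, fps in rates:
    print(
        f"{label}: {frame_budget_ms(fps):.1f} ms per frame, "
        f"serial {serial_ops_per_second(HYPOTHETICAL_PIXELS, fps):,.0f} ops/s, "
        f"parallel {parallel_ops_per_element(fps):,.0f} ops/s per element"
    )
```

Under these assumptions, the single serial processor's workload grows with both pixel count and frame rate, while each parallel element only has to keep pace with the frame rate. That is the baseball analogy in numbers: one fast player covering every position versus a slower player stationed at each one.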

Media Contact

All latest news from the category: Power and Electrical Engineering

This topic covers issues related to energy generation, conversion, transportation and consumption and how the industry is addressing the challenge of energy efficiency in general.

innovations-report provides in-depth and informative reports and articles on subjects ranging from wind energy, fuel cell technology, solar energy, geothermal energy, petroleum, gas, nuclear engineering, alternative energy and energy efficiency to fusion, hydrogen and superconductor technologies.

Newest articles

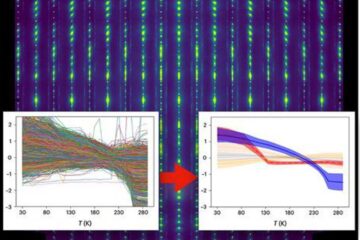

Machine learning algorithm reveals long-theorized glass phase in crystal

Scientists have found evidence of an elusive, glassy phase of matter that emerges when a crystal’s perfect internal pattern is disrupted. X-ray technology and machine learning converge to shed light…

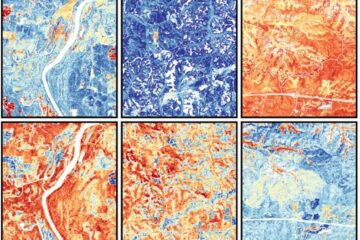

Mapping plant functional diversity from space

HKU ecologists revolutionize ecosystem monitoring with novel field-satellite integration. An international team of researchers, led by Professor Jin WU from the School of Biological Sciences at The University of Hong…

Inverters with constant full load capability

…enable an increase in the performance of electric drives. Overheating components significantly limit the performance of drivetrains in electric vehicles. Inverters in particular are subject to a high thermal load,…