Keeping the lights on

A method of assessing the stability of large-scale power grids in real time could bring the world closer to its goal of building and operating a smart grid. The algorithmic approach, developed by UC Santa Barbara professor Igor Mezic with Yoshihiko Susuki of Kyoto University, can predict emerging large-scale instabilities in the power grid and could help make widespread power outages a thing of the past.

“If we can get these instabilities under control, then people won't have to worry about losing power,” said Mezic, who teaches in UCSB's Department of Mechanical Engineering. “And we can put in more fluctuating sources, like solar and wind.”

While the development of more energy-efficient machines and devices and the emergence of alternative forms of energy give us reason to be optimistic about a greener future, the promise of sustainable, reliable energy is only as good as the infrastructure that delivers it. Conventional power grids, which still distribute most of our electricity today, were built for the demands of almost a century ago. As demand for energy steadily rises, not only will supply become inadequate under today's technology, but its distribution will also become inefficient and wasteful.

“Each individual component does not know what the collective state of affairs is,” said Mezic. Current methods rely on a steady, abundant supply, generating enough energy to flow through the grid at all times regardless of demand, he explained. However, if part of a grid that is already operating at capacity fails (say, because of a disaster, an attack or a malfunction), blackouts can spread across the whole system.

“Everybody shuts down,” Mezic said. Large power surges left unregulated by the failed component can either overload and burn out other parts of the grid or force them to shut down to avoid damage, he explained. The result is a massive power outage, followed by economic and physical damage. The Northeast Blackout of 2003 was one such event, affecting several U.S. states and part of Canada and crippling transportation, communication and industry.

One alternative would be to build more power plants, both to feed the grid a steady supply and to provide enough spare capacity to absorb unpredictable failures, fluctuations and shutdowns. That solution is costly both for the environment and for the checkbook.

The method developed by Mezic and his collaborators, however, promises to prevent cascading blackouts and their effects by monitoring the entire grid for early signs of failure in real time. Called Koopman Mode Analysis (KMA), it is a dynamical approach built on the Koopman operator, a concept from dynamical systems theory related to chaos theory, and it can monitor seemingly innocuous fluctuations in measured physical power flows. Using data from existing monitoring systems such as Supervisory Control And Data Acquisition (SCADA) and Phasor Measurement Units (PMUs), KMA can track power fluctuations against the larger landscape of the grid and predict emerging events. The result is the ability to prevent and control large-scale blackouts and the damage they can cause.
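The article does not say how KMA is computed. As a rough illustration only, the sketch below uses exact dynamic mode decomposition (DMD), a widely used numerical method for approximating Koopman eigenvalues and modes from snapshot data; it is a swapped-in technique, not necessarily the algorithm in Mezic and Susuki's work. The input is synthetic: six hypothetical measurement channels standing in for PMU readings, built from a damped 0.5 Hz oscillation and a slowly growing 1.2 Hz oscillation. The function names `koopman_modes_dmd` and `growing_mode_frequencies` are illustrative assumptions, as is the growth threshold `tol`. Each estimated mode comes with a growth rate and a frequency, and a positive growth rate marks an oscillation that is building up rather than dying out, the kind of early sign of trouble described above.

```python
import numpy as np

# Exact dynamic mode decomposition (DMD): a standard numerical way to
# approximate Koopman eigenvalues and modes from snapshot data. Used here as an
# illustrative stand-in; the function name is hypothetical.
def koopman_modes_dmd(data, dt, rank):
    """Return DMD eigenvalues, spatial modes and continuous-time exponents.

    data: array of shape (channels, snapshots) sampled every dt seconds.
    rank: number of leading singular values kept (discards numerical noise).
    """
    X = data[:, :-1]  # snapshots x_0 .. x_{m-2}
    Y = data[:, 1:]   # snapshots x_1 .. x_{m-1}, one time step later
    U, s, Vh = np.linalg.svd(X, full_matrices=False)
    U, s, V = U[:, :rank], s[:rank], Vh[:rank].conj().T
    # Projected linear operator A_tilde = U^H Y V S^{-1}
    # (dividing by s scales column j by 1 / s[j]).
    A_tilde = U.conj().T @ Y @ V / s
    eigvals, W = np.linalg.eig(A_tilde)
    modes = (Y @ V / s) @ W  # exact DMD modes, one column per eigenvalue
    # Continuous-time exponent sigma + i*omega for each discrete eigenvalue
    exponents = np.log(eigvals.astype(complex)) / dt
    return eigvals, modes, exponents


def growing_mode_frequencies(exponents, tol=1e-3):
    """Frequencies (Hz) of modes whose amplitude grows, i.e. sigma > tol."""
    growing = exponents[exponents.real > tol]
    return np.unique(np.round(np.abs(growing.imag) / (2 * np.pi), 3))


# Synthetic stand-in for PMU data (hypothetical): six channels mixing a damped
# 0.5 Hz oscillation with a slowly growing 1.2 Hz oscillation.
dt = 0.05
t = np.arange(200) * dt
basis = np.vstack([
    np.exp(-0.1 * t) * np.cos(2 * np.pi * 0.5 * t),
    np.exp(-0.1 * t) * np.sin(2 * np.pi * 0.5 * t),
    np.exp(0.05 * t) * np.cos(2 * np.pi * 1.2 * t),
    np.exp(0.05 * t) * np.sin(2 * np.pi * 1.2 * t),
])
mixing = np.random.default_rng(0).normal(size=(6, 4))
data = mixing @ basis  # shape (6 channels, 200 snapshots)

eigvals, modes, exponents = koopman_modes_dmd(data, dt, rank=4)
print("growth rates (1/s):", np.round(np.sort(exponents.real), 3))
print("growing oscillations (Hz):", growing_mode_frequencies(exponents))
```

Because the synthetic signals are noise-free and exactly linear, the estimates recover the built-in growth rates and frequencies; with real measurements, noise and the choice of `rank` would both affect the results, and a practical monitor would need to account for that.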

This approach could also lead to wider development of, demand for and use of renewable energy sources, said Mezic. Because sources such as wind, water and sun are weather-dependent, their output fluctuates naturally, and the ability to respond to such fluctuations can dispel reservations utilities may have about relying on them more heavily.

Mezic's research is published in IEEE Transactions on Power Systems, a journal of the Institute of Electrical and Electronics Engineers. Researchers from Princeton University, Tsinghua University in China and the Royal Institute of Technology in Sweden have also collaborated on Koopman Mode Analysis research.

Media Contact

More Information:

http://www.ucsb.eduAll latest news from the category: Power and Electrical Engineering

This topic covers issues related to energy generation, conversion, transportation and consumption and how the industry is addressing the challenge of energy efficiency in general.

innovations-report provides in-depth and informative reports and articles on subjects ranging from wind energy, fuel cell technology, solar energy, geothermal energy, petroleum, gas, nuclear engineering, alternative energy and energy efficiency to fusion, hydrogen and superconductor technologies.

Newest articles

Silicon Carbide Innovation Alliance to drive industrial-scale semiconductor work

Known for its ability to withstand extreme environments and high voltages, silicon carbide (SiC) is a semiconducting material made up of silicon and carbon atoms arranged into crystals that is…

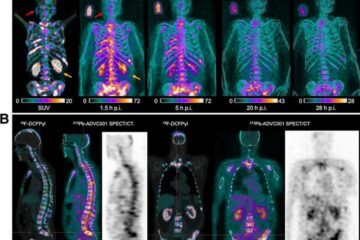

New SPECT/CT technique shows impressive biomarker identification

…offers increased access for prostate cancer patients. A novel SPECT/CT acquisition method can accurately detect radiopharmaceutical biodistribution in a convenient manner for prostate cancer patients, opening the door for more…

How 3D printers can give robots a soft touch

Soft skin coverings and touch sensors have emerged as a promising feature for robots that are both safer and more intuitive for human interaction, but they are expensive and difficult…