Study Shows Bank Risk-Assessment Tool Not Responding Adequately to Market Fluctuations

The study finds that the tests regulators use do not detect when Value-at-Risk measures (VaRs) fail to account accurately for large swings in the market. That matters because VaRs are key risk-assessment tools that financial institutions use to determine how much capital they need to keep on hand to cover potential losses.

“Failing to modify the VaR to reflect market fluctuations is important,” study co-author Dr. Denis Pelletier says, “because it could lead to a bank exhausting its on-hand cash reserves.” Pelletier, an assistant professor of economics at NC State, adds, “Problems can come up if banks miscalculate their VaR and have insufficient funds on hand to cover their losses.”

VaRs measure the risk exposure of a company's portfolio. Economists can estimate the range of potential future losses and assign a statistical probability to those losses. For example, there may be a 10 percent chance that a company could lose $1 million. The VaR is generally defined as the loss level that a portfolio has only a one percent chance of exceeding.
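As a rough illustration of that definition, the sketch below estimates a 99 percent VaR from a list of past daily losses by picking the loss level that only about one percent of observations exceed. This is one simple approach (often called historical simulation) and not necessarily what any bank covered by the study uses; the function name `historical_var` and the sample data are invented for the example.

```python
import math

def historical_var(losses, tail_prob=0.01):
    """Return the loss level exceeded by roughly `tail_prob` of past losses.

    `losses` is a list of past daily losses (positive numbers = money lost).
    Hypothetical helper for illustration only.
    """
    if not losses:
        raise ValueError("need at least one observed loss")
    ordered = sorted(losses)
    # Rank of the (1 - tail_prob) empirical quantile; round() guards
    # against floating-point noise before taking the ceiling.
    rank = math.ceil(round((1 - tail_prob) * len(ordered), 9))
    return ordered[rank - 1]

# Made-up data: 100 daily losses of 1, 2, ..., 100 (in $ thousands).
sample_losses = list(range(1, 101))
var_99 = historical_var(sample_losses)
print(var_99)  # 99: only one of the 100 observations is larger
```

With real data the estimate depends heavily on which past days are included, which is one reason a VaR that is not updated can lag behind a turbulent market.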

In other words, the VaR is not quite the worst-case scenario, but it is close. The smaller a company's VaR, the less risk its portfolio is exposed to. If a company's portfolio is valued at $1 billion, for example, a VaR of $15 million indicates significantly less risk than a VaR of $25 million.

The NC State study indicates that regulators could use additional tests to detect when the models used by banks are failing to accurately assess the statistical probability of losses in financial markets. The good news, Pelletier says, is that the models banks use tend to be overly conservative, meaning banks rarely lose more than their VaR. The bad news is that bank models do not adjust the VaR quickly when the market is in turmoil. As a result, when banks are wrong and “violate” their VaR, that is, lose more than the VaR, they tend to be wrong multiple times in a short period.
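To make the idea of clustered violations concrete, the sketch below counts how often daily losses exceed the VaR and how many of those violations come directly after another one. It is only a counting illustration, not one of the statistical tests evaluated in the study; the function name `count_violations` and the ten-day loss record are hypothetical.

```python
def count_violations(losses, var_forecasts):
    """Return (total violations, violations that directly follow another).

    A violation is a day on which the loss exceeds that day's VaR.
    Hypothetical helper for illustration only.
    """
    hits = [loss > var for loss, var in zip(losses, var_forecasts)]
    total = sum(hits)
    # Count pairs of consecutive days that are both violations.
    back_to_back = sum(1 for prev, cur in zip(hits, hits[1:]) if prev and cur)
    return total, back_to_back

# Made-up ten-day record in $ millions; the VaR is never adjusted.
daily_losses = [20, 35, 10, 120, 150, 130, 40, 15, 25, 30]
static_var = [100] * len(daily_losses)

total, back_to_back = count_violations(daily_losses, static_var)
print(total, back_to_back)  # 3 2
```

If violations were spread independently over time, back-to-back violations would be rare; a high share of them is the kind of pattern that suggests a model is slow to react to turmoil.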

This could have serious consequences, Pelletier explains. “For example, if a bank has a VaR of $100 million it would keep at least $300 million in reserve, because banks are typically required to keep three to five times the VaR on hand in cash as a capital reserve. So it could afford a bad day – say, $150 million in losses. However, it couldn't afford several really bad days in a row without having to sell illiquid assets, putting the bank further in distress.”
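The arithmetic in Pelletier's example can be sketched as follows. The $100 million VaR, the multiplier of three and the $150 million loss come from the quote; the three-day run of losses and the function name `days_until_reserve_exhausted` are assumptions added for illustration.

```python
def days_until_reserve_exhausted(var, multiplier, daily_losses):
    """Return the first day (1-based) on which cumulative losses exceed
    the cash reserve, or None if the reserve is never exhausted.

    The reserve is modeled simply as multiplier * var, in $ millions.
    Hypothetical helper for illustration only.
    """
    reserve = multiplier * var
    for day, loss in enumerate(daily_losses, start=1):
        reserve -= loss
        if reserve < 0:
            return day
    return None

# From the quote: VaR of $100M, reserve of 3 x VaR, one $150M bad day.
one_bad_day = days_until_reserve_exhausted(100, 3, [150])
print(one_bad_day)  # None: the $300M reserve absorbs the loss

# Assumed scenario: three $150M losses in a row.
bad_run = days_until_reserve_exhausted(100, 3, [150, 150, 150])
print(bad_run)  # 3: the reserve is overdrawn on the third day
```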

Various regulatory authorities, such as the Federal Deposit Insurance Corporation, require banks to calculate their VaR on a daily basis. Pelletier says the new study indicates that regulatory authorities need to do more to ensure that banks are using dynamic models and do not face multiple VaR violations in a row.

The study, “Evaluating Value-at-Risk Models with Desk-Level Data,” was co-authored by Pelletier, Jeremy Berkowitz of the University of Houston and Peter Christoffersen of McGill University. The study will be published in a forthcoming special issue of Management Science on interfaces of operations and finance.

Media Contact

More Information:

http://www.ncsu.eduAll latest news from the category: Business and Finance

This area provides up-to-date and interesting developments from the world of business, economics and finance.

A wealth of information is available on topics ranging from stock markets, consumer climate, labor market policies, bond markets, foreign trade and interest rate trends to stock exchange news and economic forecasts.

Newest articles

Silicon Carbide Innovation Alliance to drive industrial-scale semiconductor work

Known for its ability to withstand extreme environments and high voltages, silicon carbide (SiC) is a semiconducting material made up of silicon and carbon atoms arranged into crystals that is…

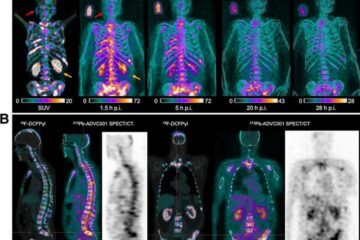

New SPECT/CT technique shows impressive biomarker identification

…offers increased access for prostate cancer patients. A novel SPECT/CT acquisition method can accurately detect radiopharmaceutical biodistribution in a convenient manner for prostate cancer patients, opening the door for more…

How 3D printers can give robots a soft touch

Soft skin coverings and touch sensors have emerged as a promising feature for robots that are both safer and more intuitive for human interaction, but they are expensive and difficult…