ESMT Competition Analysis report presents pros and cons of net neutrality regulation

The growing demand for bandwidth for data-intensive applications, such as online video and cloud gaming, and the increasing commercial relevance of the Internet have stimulated a global debate on the viability of the Internet's economic model and the role of net neutrality regulation.

The European Commission's consultation process in the second half of 2010, which drew over 300 responses, shows the keen interest of policy makers and regulators, industry, and the general public in this matter in Europe. “However, a thorough analysis of the implications of net neutrality regulation on some possible Internet business models adapted to the different market conditions in Europe, foremost European access regulation, is missing,” states Hans W. Friederiszick, coauthor of the study “Assessment of a sustainable Internet model for the near future.”

In the independent academic study by Hans W. Friederiszick, Jakub Kaluzny, Simone Kohnz, and Lars-Hendrik Röller, commissioned by Deutsche Telekom, ESMT Competition Analysis (CA) presents four Internet business models and deliberates on the potential regulatory implications of each. Each business model focuses on a different aspect, such as congestion management, new services, or consumer choice, so that together they cover a broad range of potential future scenarios. Each model affects congestion, innovation, and consumer and social welfare, all of which a regulatory approach should take into account. Since it is difficult to predict with any certainty which business models will dominate in the future, the prudent course is for authorities to apply an informed “wait and see” approach: closely monitoring market developments and reacting forcefully to any emerging competitive threats, rather than acting pre-emptively and thereby preventing some beneficial business models from developing.

Lars-Hendrik Röller, ESMT president and senior advisor at CA, said, “European regulators should carefully consider the economic consequences of regulation of the Internet. This report provides them with comprehensive insights on how different business models are affected by different regulatory frameworks.”

The four profitable Internet business models identified by the authors:

The “Congestion Based Model” tackles congestion problems through congestion-based pricing; however, no quality differentiation is introduced. Specifically, in this business model ISPs are assumed to charge content providers higher prices for traffic in peak periods than in off-peak periods. For example, the cost for a provider of movie downloads could be significantly higher when an end user downloads an HD movie during the peak evening period than in the early morning hours or within a 24-hour period. End users in this business model can choose between flat rates with differentiated data caps.
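As a rough illustration of the peak and off-peak pricing described above, the following minimal sketch computes what a content provider might be charged for the same download at two times of day. The peak window (18:00 to 23:00), the prices, and all names are hypothetical assumptions made for the example; none of them come from the study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CongestionTariff:
    """Hypothetical two-tier tariff an ISP might apply to content providers."""

    peak_cents_per_gb: int
    offpeak_cents_per_gb: int
    peak_start_hour: int = 18  # assumed start of the evening peak (inclusive)
    peak_end_hour: int = 23    # assumed end of the evening peak (exclusive)

    def is_peak(self, hour: int) -> bool:
        """Return True if the given hour of day (0-23) falls in the peak window."""
        if not 0 <= hour <= 23:
            raise ValueError("hour must be between 0 and 23")
        return self.peak_start_hour <= hour < self.peak_end_hour

    def charge_cents(self, gigabytes: int, hour: int) -> int:
        """Charge to the content provider, in cents, for traffic sent at `hour`."""
        if self.is_peak(hour):
            price = self.peak_cents_per_gb
        else:
            price = self.offpeak_cents_per_gb
        return gigabytes * price


# Illustrative figures only: 4 cents/GB at peak, 1 cent/GB off-peak.
tariff = CongestionTariff(peak_cents_per_gb=4, offpeak_cents_per_gb=1)

# The same 5 GB HD movie download at 20:00 and at 05:00.
evening_charge = tariff.charge_cents(gigabytes=5, hour=20)
morning_charge = tariff.charge_cents(gigabytes=5, hour=5)
```

With these assumed figures, the evening download costs the content provider four times as much as the early morning one, while the end user's flat rate is unaffected apart from the data cap.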

The “Best Effort Plus Model” preserves the traditional best effort network for existing services and assumes that content providers and end users are priced as in the status quo if they operate on the best effort level. However, these restrictions do not apply to innovative future services, for which pricing and minimum service requirements follow individual negotiations between the eyeball ISP and the content provider.

The “Quality Classes – Content Pays Model” stresses the perceived need of different applications for different degrees of quality of service and offers separate quality classes for different applications. Unlike in the previous business model, the quality classes encompass all services, including currently available traditional services. Depending on its requirements, a content provider could purchase the transit quality most appropriate for its type of content. For example, a content provider offering HD movie streaming or gaming services that require low latency would purchase a more expensive premium quality class to ensure the quality of experience for end users. End users would still pay a uniform flat rate in this model and experience the quality chosen by the content provider.

The “Quality Classes – User Pays Model” focuses on consumer choice for higher quality levels and offers multiple quality classes for end users that are designed to match their different usage patterns. For example, end users who frequently use interactive applications might choose the quality class which is more suitable for dealing with such applications, i.e., that offers a low level of delay and jitter.

To download the study: http://www.esmt.org/info/latest

Press contact

Farhad Dilmaghani, +49 (0)30 21 231-1042, farhad.dilmaghani@esmt.org

Martha Ihlbrock, +49 (0)30 21 231-1043, martha.ihlbrock@esmt.org

About ESMT Competition Analysis

ESMT Competition Analysis is an economic consultancy working on central topics in the field of competition policy and regulation. These include case-related work on European competition matters, e.g., merger, antitrust, or state aid cases, economic analysis within regulatory procedures, and studies for international organizations on competition policy issues. Competition Analysis applies rigorous economic thinking with a unique combination of creativity and robustness in order to meet the highest quality standards of international clients. As a partner of the international business school ESMT European School of Management and Technology, Competition Analysis works closely with ESMT professors and professionals on leading-edge research in industrial organization and quantitative methods.

Media Contact

All latest news from the category: Business and Finance

This area provides up-to-date and interesting developments from the world of business, economics and finance.

A wealth of information is available on topics ranging from stock markets, consumer climate, labor market policies, bond markets, foreign trade and interest rate trends to stock exchange news and economic forecasts.

Newest articles

Bringing bio-inspired robots to life

Nebraska researcher Eric Markvicka gets NSF CAREER Award to pursue manufacture of novel materials for soft robotics and stretchable electronics. Engineers are increasingly eager to develop robots that mimic the…

Bella moths use poison to attract mates

Scientists are closer to finding out how. Pyrrolizidine alkaloids are as bitter and toxic as they are hard to pronounce. They’re produced by several different types of plants and are…

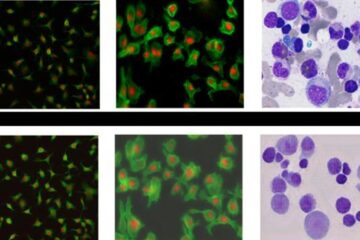

AI tool creates ‘synthetic’ images of cells

…for enhanced microscopy analysis. Observing individual cells through microscopes can reveal a range of important cell biological phenomena that frequently play a role in human diseases, but the process of…