Overconfidence leads to bias in climate change estimations

“Climate researchers often use a scenario approach,” says Dr. Klaus Keller, assistant professor of geosciences, Penn State. “Nevertheless, scenarios are typically silent on the question of probabilities.”

The Intergovernmental Panel on Climate Change, which is in its third round of climate assessment, uses models driven by scenarios of human climate forcing. These forcing scenarios are, the researchers say, overconfident.

“One key question is which scenario is likely, which is less likely and which they can neglect for practical purposes,” says Keller, who is also affiliated with the Penn State Institutes of Energy and the Environment. “At the very least, the scenarios should span the range of relevant future outcomes. This relevant range should also include low-probability, high-impact events.”

The researchers provide evidence that current practice neglects a sizeable fraction of these low-probability events and produces biased outcomes. Keller; Louis Miltich, graduate student; Alexander Robinson, Penn State research assistant now on a Fulbright Fellowship in Berlin; and Richard Tol, senior research officer, Economic and Social Research Institute, Dublin, Ireland, developed an Integrated Assessment Model to derive probabilistic projections of carbon dioxide emissions on a century time scale. Their results extended far beyond the range of previously published scenarios, the researchers told attendees today (Dec. 15) at the fall meeting of the American Geophysical Union in San Francisco.

Noting that overconfidence is an often-observed effect, Keller cites as an example a study reviewing estimates of the weight of an electron. The range reported for the weight of an electron from 1955 to the mid-1960s did not include the value considered correct today. On a more closely related topic, the range of energy use projections made in the 1970s typically missed the observed trends.

“We need to identify key sources of overconfidence and critically reevaluate previous studies,” says Keller.

According to their study, past scenarios of carbon dioxide emissions can miss as much as 40 percent of the probabilistic projections, leaving out a large number of low-probability events. The omitted scenarios may include low-probability, high-impact events.
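
To make the idea of "missing" part of a probabilistic projection concrete, the short sketch below counts the share of a sampled distribution that falls outside a fixed scenario range. It is an illustration only, not the researchers' Integrated Assessment Model: the lognormal distribution, its parameters, the scenario bounds and the function name `fraction_outside` are all hypothetical choices made for this example.

```python
import random


def fraction_outside(samples, low, high):
    """Return the share of samples that fall outside the closed range [low, high]."""
    if not samples:
        raise ValueError("samples must not be empty")
    outside = sum(1 for s in samples if s < low or s > high)
    return outside / len(samples)


# Hypothetical ensemble of end-of-century emissions (arbitrary units).
# The lognormal shape and its parameters are assumptions for illustration,
# not values taken from the study.
rng = random.Random(0)
samples = [rng.lognormvariate(3.0, 0.6) for _ in range(10_000)]

# Hypothetical range spanned by a set of published scenarios.
scenario_low, scenario_high = 10.0, 35.0

missed = fraction_outside(samples, scenario_low, scenario_high)
print(f"Share of the sampled projection outside the scenario range: {missed:.0%}")
```

A scenario range that looks wide can still leave a substantial share of the sampled outcomes uncovered when the underlying distribution has long tails, which is the kind of gap the study describes.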

“If low-probability, high-impact events exist, such as threshold responses of ocean currents or ice sheets, omitting these scenarios can lead to poor decision making,” says Keller. “We need to see the full range of possible scenarios, because the actual outcome may not be contained in the central estimate.

“New tools and faster computers enable a considerably improved uncertainty analysis,” he adds. “If you do not tell how likely the probability of a scenario is, people are left to guess. A sound scientific analysis can at least tell how consistent these guesses are with the available observations and simple, but transparent assumption.”

Media Contact

More Information:

http://www.psu.eduAll latest news from the category: Earth Sciences

Earth Sciences (also referred to as Geosciences), which deals with basic issues surrounding our planet, plays a vital role in the area of energy and raw materials supply.

Earth Sciences comprises subjects such as geology, geography, geological informatics, paleontology, mineralogy, petrography, crystallography, geophysics, geodesy, glaciology, cartography, photogrammetry, meteorology and seismology, early-warning systems, earthquake research and polar research.

Newest articles

Superradiant atoms could push the boundaries of how precisely time can be measured

Superradiant atoms can help us measure time more precisely than ever. In a new study, researchers from the University of Copenhagen present a new method for measuring the time interval,…

Ion thermoelectric conversion devices for near room temperature

The electrode sheet of the thermoelectric device consists of ionic hydrogel, which is sandwiched between the electrodes to form, and the Prussian blue on the electrode undergoes a redox reaction…

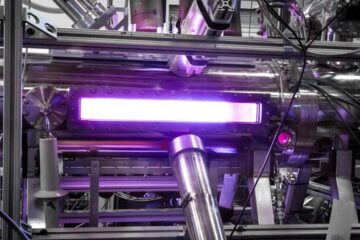

Zap Energy achieves 37-million-degree temperatures in a compact device

New publication reports record electron temperatures for a small-scale, sheared-flow-stabilized Z-pinch fusion device. In the nine decades since humans first produced fusion reactions, only a few fusion technologies have demonstrated…