Scientists offer new model for forecasting the likelihood of an earthquake

“This is the most realistic model to date,” said Kaj Johnson, assistant professor of geological sciences at Indiana University, who worked on the modeling project several years ago when he was a Stanford graduate student. “This is something people had been asking for years now. It's the next step.”

Johnson and Stanford geophysics Professor Paul Segall will present their new probability model at 11:35 a.m. PT on Dec. 14, at the annual meeting of the American Geophysical Union in San Francisco during a talk titled “Distribution of Slip on San Francisco Bay Area Faults” in Room 307, Moscone Center South.

Measuring faults

An important component of earthquake-probability assessment is determining how fast a fault moves. One technique involves the use of GPS, which allows seismologists to measure the movement of various points on the surface of the Earth, then use these data to extrapolate underground fault movement. Another way to determine fault slip rates is to dig a trench across the fault and find the signatures of past earthquakes, a method called paleoseismology.

“People say, let's compare rates of fault movement from GPS to rates of fault movement from geologic studies,” Segall said. “But it's as if you're measuring different parts of the same thing with different tools. The discrepancy can be quite big.”

To bridge the gap, Segall and Johnson created a new model that weaves together everything known about how a fault moves. The idea for the model came when Segall was asked to speak at a conference on the “rate debate,” which is how geophysicists refer to the GPS-paleoseismology discrepancy. That's when he realized that the standard model doesn't take into account that fault-slippage rates vary over time.

This time dependence is important, because GPS doesn't measure fault slippage directly. Rather, it measures how quickly points on the surface of the Earth are moving. Then scientists try to fit these data into mathematical models to estimate the rate of slip. “Because of the time-dependent rate, your estimate depends on where you are in the earthquake cycle,” Segall said. “So if you use a model that doesn't take that into account, you will get a slip rate that's different.”
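To make the idea of fitting surface velocities to a model concrete, here is a minimal sketch. It assumes the classical steady-state elastic model, in which fault-parallel surface velocity follows an arctangent profile across a strike-slip fault locked down to a fixed depth. This is not the time-dependent model Segall and Johnson describe, and the station positions, slip rate, locking depths and function names are all hypothetical values chosen for illustration.

```python
import math


def surface_velocity(x_km, slip_rate_mm_yr, locking_depth_km):
    """Fault-parallel surface velocity (mm/yr) at distance x_km from the fault.

    Assumed steady-state elastic profile: v(x) = (s / pi) * atan(x / D).
    """
    return (slip_rate_mm_yr / math.pi) * math.atan(x_km / locking_depth_km)


def fit_slip_rate(xs_km, vs_mm_yr, locking_depth_km):
    """Least-squares slip rate for an assumed, fixed locking depth.

    The model is linear in the slip rate s, so the best fit has the
    closed form s = sum(v * g) / sum(g * g), with g = atan(x / D) / pi.
    """
    g = [math.atan(x / locking_depth_km) / math.pi for x in xs_km]
    numerator = sum(v * gi for v, gi in zip(vs_mm_yr, g))
    denominator = sum(gi * gi for gi in g)
    return numerator / denominator


# Hypothetical GPS stations (km from the fault) and synthetic, noise-free data.
stations_km = [-100.0, -50.0, -20.0, -5.0, 5.0, 20.0, 50.0, 100.0]
true_rate_mm_yr = 20.0
true_depth_km = 15.0
observed = [surface_velocity(x, true_rate_mm_yr, true_depth_km) for x in stations_km]

# Fit once with the correct model assumption and once with a wrong one.
estimate = fit_slip_rate(stations_km, observed, true_depth_km)
biased = fit_slip_rate(stations_km, observed, 30.0)

print(f"slip rate with correct locking depth: {estimate:.2f} mm/yr")
print(f"slip rate with wrong locking depth:   {biased:.2f} mm/yr")
```

The same surface data give a different slip rate once a model assumption changes. In this sketch the changed assumption is the locking depth; in the article it is whether the model accounts for the earthquake cycle.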

The scientists hope that their updated model can give a more accurate picture of slip rates and reconcile the two kinds of fault data.

California and Asia

With the new model, the team confirmed that the slip rates from GPS and from the geological record for the San Francisco Bay Area are relatively consistent. “Along the San Andreas system, the numbers tend to come out in reasonable agreement,” Segall said.

The next step for the scientists is to use their time-dependent model to scrutinize faults in other tectonically active regions, such as China, where there is a large disparity between contemporary GPS data and the paleoseismological record. “We want to take the same philosophy and procedure and apply it to different places in the world where the discrepancy can be quite big,” Segall noted. “We're developing a strategy for how to move forward. We're still very much in the progress phase.”

Johnson is working on applying the new model to faults in Taiwan and Tibet, where the earthquake hazard is great. “This can help inform people who make the forecasts,” Johnson said. “These new time-dependent models are going to become the norm, I think.”


More Information:

http://www.stanford.edu

Earth Sciences (also referred to as Geosciences), which deals with basic issues surrounding our planet, plays a vital role in the area of energy and raw materials supply.

Earth Sciences comprises subjects such as geology, geography, geological informatics, paleontology, mineralogy, petrography, crystallography, geophysics, geodesy, glaciology, cartography, photogrammetry, meteorology and seismology, early-warning systems, earthquake research and polar research.

Newest articles

Silicon Carbide Innovation Alliance to drive industrial-scale semiconductor work

Known for its ability to withstand extreme environments and high voltages, silicon carbide (SiC) is a semiconducting material made up of silicon and carbon atoms arranged into crystals that is…

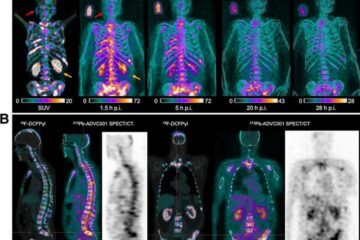

New SPECT/CT technique shows impressive biomarker identification

…offers increased access for prostate cancer patients. A novel SPECT/CT acquisition method can accurately detect radiopharmaceutical biodistribution in a convenient manner for prostate cancer patients, opening the door for more…

How 3D printers can give robots a soft touch

Soft skin coverings and touch sensors have emerged as a promising feature for robots that are both safer and more intuitive for human interaction, but they are expensive and difficult…